Journal Description

AI

AI is an international, peer-reviewed, open access journal on artificial intelligence (AI), including broad aspects of cognition and reasoning, perception and planning, machine learning, intelligent robotics, and applications of AI, published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within ESCI (Web of Science), Scopus, EBSCO, and other databases.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 20.8 days after submission; acceptance to publication is undertaken in 5.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: APC discount vouchers, optional signed peer review, and reviewer names published annually in the journal.

Latest Articles

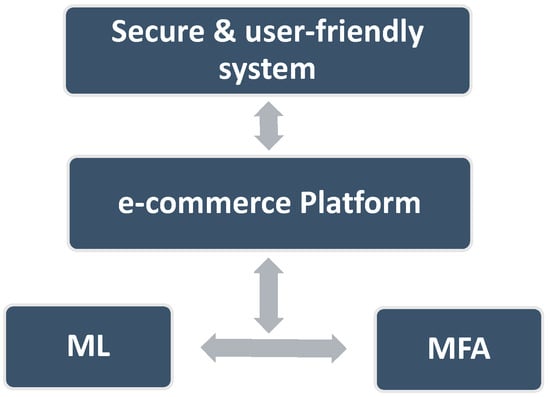

Secure Internet Financial Transactions: A Framework Integrating Multi-Factor Authentication and Machine Learning

AI 2024, 5(1), 177-194; https://doi.org/10.3390/ai5010010 - 10 Jan 2024

Abstract


Securing online financial transactions has become a critical concern as financial services become increasingly digital. The shift to digital platforms for everyday transactions has exposed customers to risks from cybercriminals. This study proposes a framework that combines multi-factor authentication and machine learning to increase the safety of online financial transactions. Our methodology uses two layers of security. The first layer authenticates users with two factors. The second layer is a machine learning component that is triggered when the system detects potential fraud; it employs facial recognition as a decisive authentication factor for further protection. To build the machine learning model, four supervised classifiers were tested: logistic regression, decision trees, random forest, and naive Bayes, which achieved accuracies of 97.938%, 97.881%, 96.717%, and 92.354%, respectively. The study’s advantage stems from its methodology, which embeds machine learning as a layer within a multi-factor authentication framework to address usability, efficacy, and the dynamic nature of various e-commerce platform features. As the financial landscape evolves, future work will continue to explore authentication factors and datasets to enhance and adapt these security measures.
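The two-layer design can be sketched as a small decision flow. This is a minimal, hypothetical illustration rather than the authors’ implementation: the field names, the 0.5 fraud-score threshold, and the assumption that the classifier outputs a fraud probability are ours, and the facial-recognition check is stubbed as a boolean.

```python
from dataclasses import dataclass


@dataclass
class LoginAttempt:
    password_ok: bool   # first factor (hypothetical: something the user knows)
    otp_ok: bool        # second factor (hypothetical: a one-time code)
    fraud_score: float  # assumed fraud probability from a trained classifier
    face_match: bool    # stubbed result of a facial-recognition check


FRAUD_THRESHOLD = 0.5  # hypothetical cut-off that triggers the second layer


def authorize(attempt: LoginAttempt) -> str:
    """Return 'deny', 'allow', or 'allow_after_face_check'."""
    # Layer 1: both conventional factors must pass.
    if not (attempt.password_ok and attempt.otp_ok):
        return "deny"
    # Layer 2: only invoked when the ML model flags potential fraud;
    # facial recognition then acts as the decisive factor.
    if attempt.fraud_score >= FRAUD_THRESHOLD:
        return "allow_after_face_check" if attempt.face_match else "deny"
    return "allow"
```

For instance, an attempt that passes both factors but receives a fraud score of 0.9 is approved only if the face check also succeeds; low-risk attempts never reach the second layer, which keeps the extra friction limited to suspicious cases.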


Open Access Review

AI and Face-Driven Orthodontics: A Scoping Review of Digital Advances in Diagnosis and Treatment Planning


AI 2024, 5(1), 158-176; https://doi.org/10.3390/ai5010009 - 05 Jan 2024

Abstract


In the age of artificial intelligence (AI), technological progress is changing established workflows and enabling some basic routines to be updated. In dentistry, the patient’s face is a crucial part of treatment planning, although it has always been difficult to grasp analytically. This review highlights current digital advances that, thanks to AI tools, allow us to consider facial features beyond symmetry and proportionality and to incorporate facial analysis into orthodontic diagnosis and treatment planning. A Scopus literature search was conducted to identify the topics with the greatest research potential within digital orthodontics over the last five years. The most researched and cited topic was artificial intelligence and its applications in orthodontics. Apart from automated 2D or 3D cephalometric analysis, AI is applied in facial analysis, in decision-making algorithms, and in the evaluation of treatment progress and retention. Together with AI, other digital advances are shaping today’s orthodontics. The era of “old” orthodontics is coming to an end, and modern, face-driven orthodontics is on its way to becoming a reality in orthodontic practice.


(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)


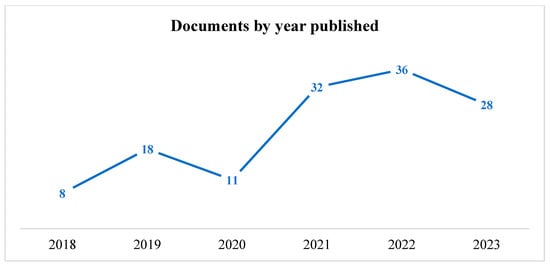


Open Access Article

Statistically Significant Differences in AI Support Levels for Project Management between SMEs and Large Enterprises

AI 2024, 5(1), 136-157; https://doi.org/10.3390/ai5010008 - 05 Jan 2024

Abstract


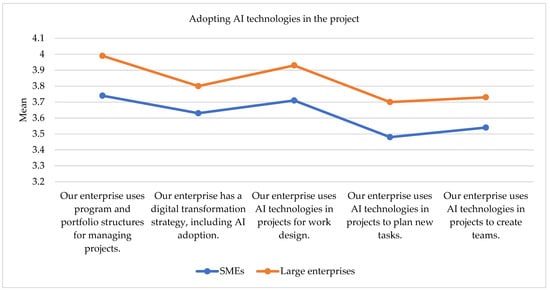


Background: This article analyzes the statistically significant differences in AI support levels for project management between SMEs and large enterprises. The research is based on a survey of a sample of 473 SMEs and large Slovenian enterprises. Methods: To validate the observed differences, the Mann–Whitney U test was employed. Results: The results confirm statistically significant differences between SMEs and large enterprises across multiple dimensions of AI support in project management. Large enterprises exhibit, on average, a higher level of AI adoption across all five AI utilization dimensions. Specifically, large enterprises scored significantly higher (p < 0.05) in AI adoption strategies and in adopting AI technologies for project tasks and team creation. The findings also underscore significant differences (p < 0.05) between SMEs and large enterprises in their adoption and utilization of AI technologies for project management purposes. While large enterprises scored above 4 on several dimensions, with the highest average score (mean 4.46 on a 1-to-5 scale) for the use of predictive analytics tools to improve work on the project, SMEs’ average scores were all below 4. SMEs may lag in incorporating AI into various project activities due to factors such as resource constraints, limited access to AI expertise, or risk aversion. Conclusions: The results underscore the need for targeted strategies to enhance AI adoption in SMEs, so that they can leverage its benefits for successful project implementation and strengthen their competitiveness.
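The Mann–Whitney U test compares two independent samples through their ranks, which suits ordinal 1-to-5 survey scores. The following stdlib-only sketch is our own illustration, not the authors’ analysis code: it computes U with average ranks for ties and a tie-corrected normal approximation (without continuity correction) for the two-sided p-value. Tested library implementations such as SciPy’s `scipy.stats.mannwhitneyu` are preferable in practice.

```python
import math


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test; returns (U for sample x, approx. p-value)."""
    n1, n2 = len(x), len(y)
    pooled = list(x) + list(y)
    ranks = average_ranks(pooled)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    # Tie correction: sum of (t^3 - t) over groups of tied values.
    n = n1 + n2
    counts = {}
    for v in pooled:
        counts[v] = counts.get(v, 0) + 1
    tie_term = sum(t ** 3 - t for t in counts.values())
    sigma = math.sqrt(n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1))))
    if sigma == 0:
        return u1, 1.0
    z = (u1 - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return u1, p
```

For example, `mann_whitney_u([1, 2, 3], [4, 5, 6])` returns U = 0 with p ≈ 0.05. With samples of the size used in the study, the normal approximation is generally adequate.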


Open Access Article

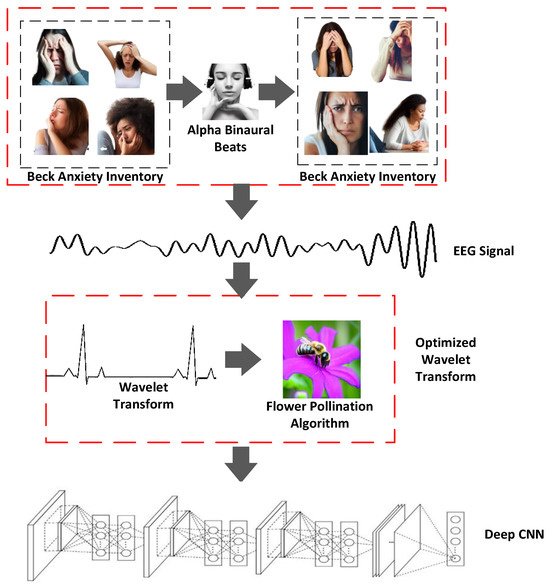

A Flower Pollination Algorithm-Optimized Wavelet Transform and Deep CNN for Analyzing Binaural Beats and Anxiety

AI 2024, 5(1), 115-135; https://doi.org/10.3390/ai5010007 - 29 Dec 2023

Abstract


Binaural beats are a low-frequency form of acoustic stimulation that may be heard between 200 and 900 Hz and can help reduce anxiety as well as alter other psychological states by affecting mood and cognitive function. However, prior research has only examined the impact of binaural beats on state and trait anxiety using the STAI scale; the level of anxiety has not yet been evaluated, and, during artifact removal, improper selection of wavelet parameters reduced the original signal energy. Hence, in this research, the level of anxiety when hearing binaural beats is analyzed using a novel optimized wavelet transform whose parameters are optimized with the flower pollination algorithm, so that artifacts are removed effectively from the EEG signal. EEG signals contain five types of brainwaves, but existing models have not optimally analyzed brainwaves other than delta waves, nor have they analyzed the level of anxiety under binaural beats. To overcome this, deep convolutional neural network (CNN)-based signal processing is proposed. Deep features are extracted from the EEG signal after its wavelet parameters have been tuned with the flower pollination algorithm, which minimizes signal energy reduction during artifact removal and thus preserves the integrity of the original EEG signal. These features enable accurate classification of anxiety levels and therefore a more accurate assessment of the effects of binaural beats on anxiety from brainwaves. Finally, the proposed model is implemented in Python, and the results demonstrate its efficacy.
The proposed optimized wavelet transform with deep CNN-based signal processing outperforms existing techniques such as KNN, SVM, LDA, and Narrow-ANN, with an accuracy of 0.99, precision of 0.99, recall of 0.99, F1-score of 0.99, specificity of 0.999, and error rate of 0.01. Thus, the optimized wavelet transform with a deep CNN can effectively decompose EEG data and extract deep features related to anxiety to analyze the effect of binaural beats on anxiety levels.
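The flower pollination algorithm alternates between global pollination (Lévy-flight steps relative to the best solution found so far) and local pollination (mixing two random flowers), keeping a candidate only if it improves. The sketch below is a generic, hypothetical illustration that minimizes a toy function over a box; the objective the paper optimizes (signal-energy loss of the wavelet denoising) is not reproduced, and the switch probability p = 0.8 and Lévy exponent β = 1.5 are common choices in the literature, assumed here. No extra step-size scaling factor is applied.

```python
import math
import random


def levy_step(beta, rng):
    """Draw one Levy-flight step using Mantegna's algorithm."""
    sigma = (math.gamma(1 + beta) * math.sin(math.pi * beta / 2)
             / (math.gamma((1 + beta) / 2) * beta * 2 ** ((beta - 1) / 2))) ** (1 / beta)
    u = rng.gauss(0.0, sigma)
    v = rng.gauss(0.0, 1.0)
    return u / max(abs(v), 1e-12) ** (1 / beta)


def flower_pollination(objective, bounds, n_flowers=20, n_iter=200,
                       p_switch=0.8, beta=1.5, seed=0):
    """Minimize `objective` over a box given as [(lo, hi), ...]."""
    rng = random.Random(seed)
    dim = len(bounds)

    def clip(x):
        # Keep every coordinate inside its (lo, hi) bound.
        return [min(max(xi, lo), hi) for xi, (lo, hi) in zip(x, bounds)]

    flowers = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_flowers)]
    fitness = [objective(f) for f in flowers]
    best_i = min(range(n_flowers), key=lambda i: fitness[i])
    best, best_fit = flowers[best_i][:], fitness[best_i]

    for _ in range(n_iter):
        for i in range(n_flowers):
            if rng.random() < p_switch:
                # Global pollination: Levy flight relative to the current best.
                cand = [flowers[i][d] + levy_step(beta, rng) * (best[d] - flowers[i][d])
                        for d in range(dim)]
            else:
                # Local pollination: move along the difference of two random flowers.
                j, k = rng.sample(range(n_flowers), 2)
                eps = rng.random()
                cand = [flowers[i][d] + eps * (flowers[j][d] - flowers[k][d])
                        for d in range(dim)]
            cand = clip(cand)
            f = objective(cand)
            if f <= fitness[i]:  # greedy replacement
                flowers[i], fitness[i] = cand, f
                if f <= best_fit:
                    best, best_fit = cand[:], f
    return best, best_fit
```

In a wavelet-tuning setting, each coordinate would encode a (possibly rounded) wavelet parameter and `objective` would score the denoised signal; both mappings are assumptions outside this sketch.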


(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)


Open Access Article

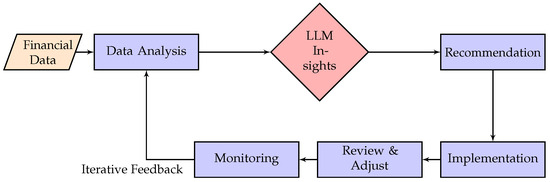

Optimized Financial Planning: Integrating Individual and Cooperative Budgeting Models with LLM Recommendations

AI 2024, 5(1), 91-114; https://doi.org/10.3390/ai5010006 - 25 Dec 2023

Abstract


In today’s complex economic environment, individuals and households alike grapple with the challenge of financial planning. This paper introduces novel methodologies for both individual and cooperative (household) financial budgeting. We first propose an optimization framework for individual budget allocation that aims to maximize savings by efficiently distributing monthly income among various expense categories. We then extend this model to households, addressing the added complexity of multiple incomes and shared expenses. The cooperative model prioritizes not only maximized savings but also the preferences and needs of each member, whether short-term needs or long-term aspirations, fostering a harmonious financial environment. A notable innovation in our approach is the integration of recommendations from a large language model (LLM). Given its vast training data and strong inferential capabilities, the LLM provides initial feasible solutions to our optimization problems, guiding individuals and households unfamiliar with the nuances of financial planning. Our preliminary results indicate that the LLM-recommended solutions yield budget plans that are both economically sound (consistent with established financial management principles and promoting fiscal resilience and stability) and aligned with the financial goals and preferences of the parties concerned. This integration of AI-driven recommendations with econometric models, as an instantiation of an extended coevolutionary (EC) theory, paves the way for a new era in financial planning, making it more accessible and effective for a wider audience; it also offers an example of a new theory in economics in which human behavior can be greatly influenced by AI agents.


(This article belongs to the Topic Artificial Intelligence Applications in Financial Technology)


Open Access Article

Application of YOLOv8 and Detectron2 for Bullet Hole Detection and Score Calculation from Shooting Cards

AI 2024, 5(1), 72-90; https://doi.org/10.3390/ai5010005 - 22 Dec 2023

Abstract


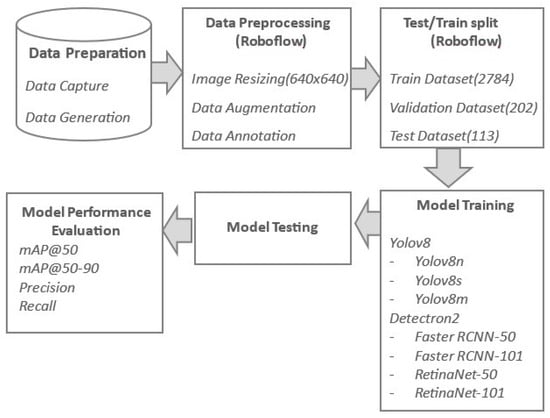

Scoring targets in shooting sports is a crucial and time-consuming task that relies on manually counting bullet holes. This paper introduces an automatic score detection model using object detection techniques. The study contributes to the field of computer vision by comparing the performance of seven models (belonging to two different architectural setups) and by making the dataset publicly available. Another contribution is the inclusion of three variants of the object detection model YOLOv8, released in 2023 (at the time of writing). Five of the models used are single-shot detectors, while two are two-stage detectors. The dataset was captured manually at the shooting range and expanded by generating more varied data using Python code. Before training, the dataset was resized to 640 × 640 and augmented using the Roboflow API. The trained models were then assessed on the test dataset, and their performance was compared using metrics such as mAP50, mAP50-90, precision, and recall. The results showed that YOLOv8 models can detect multiple objects with good confidence scores. Among these models, YOLOv8m performed best, with the highest mAP50 of 96.7%, followed by YOLOv8s with an mAP50 of 96.5%. For a real-time deployment, YOLOv8s is suggested as the better choice, since its inference time (2.3 ms) was significantly shorter than that of YOLOv8m (5.7 ms) while its mAP50 of 96.5% remained competitive.
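mAP50 counts a detection as correct when its intersection over union (IoU) with a ground-truth box is at least 0.5. The sketch below, our own illustration rather than the Ultralytics or Detectron2 evaluation code, computes IoU and the resulting precision and recall for one image and one class, assuming boxes in `(x1, y1, x2, y2)` pixel format. Full mAP additionally integrates precision over recall levels and averages over classes.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(preds, gts, iou_thr=0.5):
    """Greedily match (box, score) predictions to ground-truth boxes.

    Predictions are processed by descending score; each one is a true
    positive if it overlaps a not-yet-matched ground-truth box with
    IoU >= iou_thr (the "50" in mAP50).
    """
    matched = set()
    tp = 0
    for box, _score in sorted(preds, key=lambda p: p[1], reverse=True):
        best_j, best_iou = None, iou_thr
        for j, gt in enumerate(gts):
            if j in matched:
                continue
            overlap = iou(box, gt)
            if overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j is not None:
            matched.add(best_j)
            tp += 1
    fp = len(preds) - tp
    fn = len(gts) - tp
    precision = tp / (tp + fp) if preds else 0.0
    recall = tp / (tp + fn) if gts else 0.0
    return precision, recall
```

With two bullet holes annotated and two detections, one of which overlaps a hole well while the other lands elsewhere, precision and recall are both 0.5.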


(This article belongs to the Topic Advances in Artificial Neural Networks)


Open Access Review

Data Science in Finance: Challenges and Opportunities

AI 2024, 5(1), 55-71; https://doi.org/10.3390/ai5010004 - 22 Dec 2023

Abstract


Data science has become increasingly popular due to emerging technologies, including generative AI, big data, and deep learning. It can extract insights from data that are hard for humans to discern. In finance, data science helps provide more personalized and safer experiences for customers and develop cutting-edge solutions for companies. This paper surveys the challenges and opportunities in applying data science to finance. It provides a state-of-the-art review of financial technologies, algorithmic trading, and fraud detection. The paper also identifies two research topics: how to use generative AI in algorithmic trading, and how to apply it to fraud detection. Finally, the paper discusses the challenges posed by generative AI, such as ethical considerations, potential biases, and data security.


(This article belongs to the Special Issue Feature Papers for AI)


Open Access Review

AI Advancements: Comparison of Innovative Techniques


AI 2024, 5(1), 38-54; https://doi.org/10.3390/ai5010003 - 20 Dec 2023

Abstract


In recent years, artificial intelligence (AI) has seen remarkable advancements, stretching the limits of what is possible and opening up new frontiers. This comparative review investigates the evolving landscape of AI advancements, providing a thorough exploration of innovative techniques that have shaped the field. Beginning with the fundamentals of AI, including traditional machine learning and the transition to data-driven approaches, the narrative progresses through core AI techniques such as reinforcement learning, generative adversarial networks, transfer learning, and neuroevolution. The significance of explainable AI (XAI) is emphasized in this review, which also explores the intersection of quantum computing and AI. The review delves into the potential transformative effects of quantum technologies on AI advancements and highlights the challenges associated with their integration. Ethical considerations in AI, including discussions on bias, fairness, transparency, and regulatory frameworks, are also addressed. This review aims to contribute to a deeper understanding of the rapidly evolving field of AI. Reinforcement learning, generative adversarial networks, and transfer learning lead AI research, with a growing emphasis on transparency. Neuroevolution and quantum AI, though less studied, show potential for future developments.


(This article belongs to the Special Issue Feature Papers for AI)


Open Access Article

A Time Series Approach to Smart City Transformation: The Problem of Air Pollution in Brescia


AI 2024, 5(1), 17-37; https://doi.org/10.3390/ai5010002 - 20 Dec 2023

Abstract


Air pollution is a paramount issue, driven by a combination of natural and anthropogenic sources and various diffusion modes, with profound repercussions for the environment and human health. Time series data are indispensable here, as they capture pollutant concentrations over time and reveal critical insights, including trends, seasonal and cyclical patterns, and stationarity. Brescia, a town in Northern Italy, faces the pressing challenge of air pollution. To enhance its status as a smart city and address this concern effectively, statistical methods for time series analysis play a pivotal role. This article examines how ARIMA and LSTM models can support Brescia as a smart city by fitting and forecasting specific forms of pollution. These models have established themselves as effective tools for predicting future pollution levels. Notably, the intricate nature of the phenomena becomes apparent through the high variability of particulate matter. Even during extraordinary events like the COVID-19 lockdown, when substantial reductions in emissions were observed, the analysis revealed that this reduction did not proportionally decrease PM

(This article belongs to the Special Issue Feature Papers for AI)
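The "I" in ARIMA is differencing, which removes a trend so that an autoregressive model can be fitted to a more stationary series. The stdlib sketch below illustrates an ARIMA(1,1,0)-style forecast: first differences, then an AR(1) coefficient fitted by plain least squares without an intercept. The order (1,1,0) and the least-squares fit are assumptions for illustration; the abstract does not state the orders used, and libraries such as statsmodels (`statsmodels.tsa.arima.model.ARIMA`) estimate ARIMA models by maximum likelihood instead.

```python
def difference(series):
    """First differences y[t] - y[t-1] (the d = 1 step of ARIMA)."""
    return [b - a for a, b in zip(series, series[1:])]


def fit_ar1(x):
    """Least-squares AR(1) coefficient for x[t] = phi * x[t-1] (no intercept)."""
    num = sum(a * b for a, b in zip(x[:-1], x[1:]))
    den = sum(a * a for a in x[:-1])
    return num / den if den else 0.0


def forecast_arima_110(series, steps=1):
    """Forecast by fitting AR(1) to the first differences and integrating back."""
    d = difference(series)
    phi = fit_ar1(d)
    level, last_diff = series[-1], d[-1]
    out = []
    for _ in range(steps):
        last_diff = phi * last_diff  # predicted next difference
        level = level + last_diff    # undo the differencing
        out.append(level)
    return out
```

For a series that rises steadily by 2 per step, such as `[0, 2, 4, ..., 18]`, the fitted coefficient is 1 and the next two forecasts are 20 and 22. A real pollutant series would also need seasonal terms and diagnostic checks, which this sketch omits.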


Open Access Article

A Time Window Analysis for Time-Critical Decision Systems with Applications on Sports Climbing


AI 2024, 5(1), 1-16; https://doi.org/10.3390/ai5010001 - 19 Dec 2023

Abstract


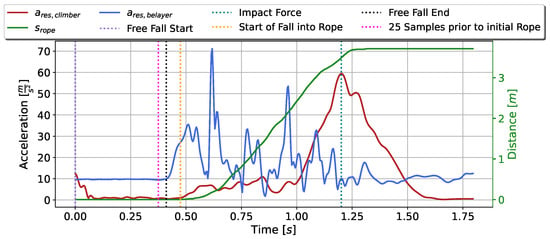

Human monitoring systems are already used in various fields such as assisted living, healthcare, and sport and fitness. They can provide support in everyday life or act as a pre-warning system. We developed a system, integrated into a belay device, that monitors the ascent of a sport climber. This paper presents the first time series analysis of a climber’s fall using such a system. A Convolutional Neural Network handles both the feature engineering on the sensor data and the classification task, so time is considered implicitly by the network. We analyzed how the size of the time window affects the results. The neural network models were then tested against an existing principle based on a mechanical mechanism. We show that the size of the time window is a decisive factor in a time-critical system: depending on the window size, the mechanical principle was able to outperform the neural network. Nevertheless, most of our models outperformed the basic principle and returned promising results in predicting the fall of a climber within up to
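The window-size trade-off can be made concrete: a classifier that sees a window of w samples recorded at f Hz cannot decide before w / f seconds of data have arrived, so larger windows add context but also latency. The sketch below segments a sensor stream into fixed-size windows and computes that minimum latency. The window length, stride, and sampling rate are hypothetical parameters, since the abstract does not state the system’s values.

```python
def sliding_windows(samples, window, stride):
    """Split a sequence of sensor samples into fixed-size, possibly overlapping windows.

    Trailing samples that do not fill a complete window are dropped.
    """
    if window <= 0 or stride <= 0:
        raise ValueError("window and stride must be positive")
    return [samples[start:start + window]
            for start in range(0, len(samples) - window + 1, stride)]


def min_decision_latency_ms(window, rate_hz):
    """Time needed to fill one window; a lower bound on decision latency."""
    return 1000.0 * window / rate_hz
```

For example, at a hypothetical 100 Hz sampling rate, a 50-sample window implies at least 500 ms before the first prediction, which matters in a system that must react to a fall.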


Open Access Article

Adapting the Parameters of RBF Networks Using Grammatical Evolution

AI 2023, 4(4), 1059-1078; https://doi.org/10.3390/ai4040054 - 11 Dec 2023

Abstract


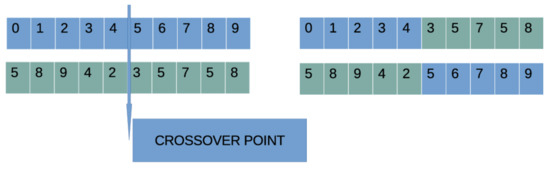

Radial basis function networks are widely used in many applications across scientific areas, in both classification and data fitting problems. These networks address such problems by adjusting their parameters through various optimization techniques. However, an important issue is the need to locate a satisfactory interval for the network parameters before adjusting them. This paper proposes a two-stage method. In the first stage, grammatical evolution is used to generate rules that construct the optimal value interval for the network parameters. In the second stage, these parameters are fine-tuned with a genetic algorithm. The method was tested on a number of datasets from the recent literature and was found to reduce the classification or data fitting error by over 40% on most datasets. In addition, the experiments suggest that the proposed method is robust, as varying the number of network parameters does not significantly affect its performance.
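For reference, a Gaussian RBF network with $k$ hidden units computes the output below. We assume the common Gaussian kernel, and the notation $[a, b]$ for the parameter interval produced by the first stage is our own, since the abstract does not give the exact parameterization; the second-stage genetic algorithm then searches for $\mathbf{c}_i$, $\sigma_i$, and $w_i$ within that interval.

```latex
y(\mathbf{x}) = \sum_{i=1}^{k} w_i \exp\!\left(-\frac{\lVert \mathbf{x} - \mathbf{c}_i \rVert^2}{2\sigma_i^2}\right),
\qquad c_{ij},\ \sigma_i,\ w_i \in [a, b]
```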


Open Access Article

Evaluating the Performance of Automated Machine Learning (AutoML) Tools for Heart Disease Diagnosis and Prediction

AI 2023, 4(4), 1036-1058; https://doi.org/10.3390/ai4040053 - 01 Dec 2023

Cited by 1

Abstract


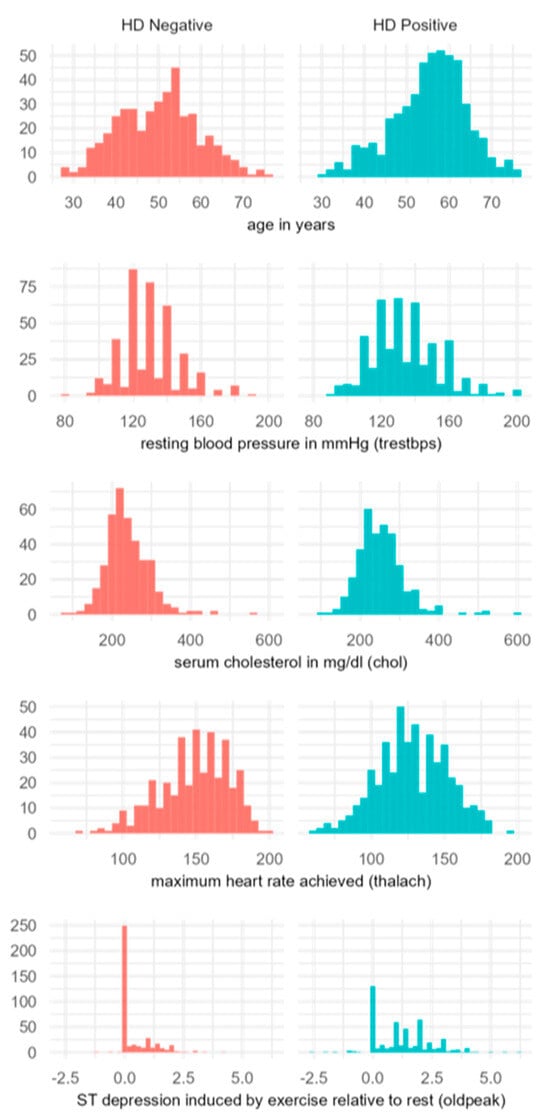

Globally, over 17 million people die from cardiovascular diseases each year, and heart disease is the leading cause of mortality in the United States. The ever-increasing volume of data related to heart disease opens up possibilities for employing machine learning (ML) techniques to diagnose and predict heart conditions. While applying ML demands a certain level of computer science expertise, which is often a barrier for healthcare professionals, automated machine learning (AutoML) tools significantly lower this barrier. They enable users to construct effective ML models without in-depth technical knowledge. Despite their potential, research comparing the performance of different AutoML tools on heart disease data has been lacking. Addressing this gap, our study evaluates three AutoML tools (PyCaret, AutoGluon, and AutoKeras) on three datasets (Cleveland, Hungarian, and a combined dataset). To compare AutoML against conventional machine learning methodologies, we built ten machine learning models with the sklearn library, following standard practices such as exploratory data analysis (EDA), data cleansing, and feature engineering. These models included logistic regression, support vector machines, decision trees, random forest, and various ensemble models. Under 5-fold cross-validation, these traditionally developed models achieved accuracies of 55% to 60%, markedly lower than those of the AutoML tools, indicating the latter’s superior capability in generating predictive models. Among the AutoML tools, AutoGluon performed best, consistently achieving accuracies between 78% and 86% across the datasets. PyCaret’s performance varied, with accuracies from 65% to 83%, indicating a dependency on the nature of the dataset. AutoKeras showed the most fluctuation in performance, with accuracies ranging from 54% to 83%.
Our findings suggest that AutoML tools can simplify the generation of robust ML models that potentially surpass those built with traditional ML methodologies. However, the limitations of AutoML tools must also be considered, along with strategies to overcome them. The successful deployment of high-performance ML models designed via AutoML could revolutionize the treatment and prevention of heart disease globally, significantly impacting patient care.
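In 5-fold cross-validation, the data are split into five folds and each fold serves once as the test set while the model trains on the other four; the reported accuracy is the mean over folds. The stdlib sketch below shows that procedure with an arbitrary `fit_predict` callback (a hypothetical interface of our own). It does not stratify by class, and it is not the sklearn code the study used; sklearn provides the same idea through `sklearn.model_selection.KFold` and `cross_val_score`.

```python
import random


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, test_idx) pairs for k-fold cross-validation.

    Indices are shuffled once and split into k folds whose sizes differ
    by at most one; each fold is the test set exactly once.
    """
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, test
        start += size


def cross_val_accuracy(fit_predict, X, y, k=5, seed=0):
    """Mean accuracy over k folds; fit_predict(X_tr, y_tr, X_te) returns predictions."""
    scores = []
    for train, test in k_fold_indices(len(X), k, seed):
        preds = fit_predict([X[i] for i in train], [y[i] for i in train],
                            [X[i] for i in test])
        correct = sum(p == y[i] for p, i in zip(preds, test))
        scores.append(correct / len(test))
    return sum(scores) / len(scores)
```

Averaging over folds reduces the dependence of the reported accuracy on a single lucky or unlucky train/test split, which matters for small datasets such as Cleveland and Hungarian.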


(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)


Open Access Essay

AI and Regulations

AI 2023, 4(4), 1023-1035; https://doi.org/10.3390/ai4040052 - 29 Nov 2023

Abstract


This essay argues that the popular misrepresentation of the nature of AI has important consequences for how we view the need for regulation. Considering AI as something that exists in itself, rather than as a set of cognitive technologies whose physical, cognitive, and systemic characteristics are quite different from ours (and at times differ widely among the technologies themselves), leads to inefficient approaches to regulation. This paper aims to help practitioners of responsible AI address how the technical aspects of the tools they develop and promote have direct and important social and political consequences.


(This article belongs to the Special Issue Standards and Ethics in AI)

Open Access Review

Chat GPT in Diagnostic Human Pathology: Will It Be Useful to Pathologists? A Preliminary Review with ‘Query Session’ and Future Perspectives


AI 2023, 4(4), 1010-1022; https://doi.org/10.3390/ai4040051 - 22 Nov 2023

Cited by 1

Abstract


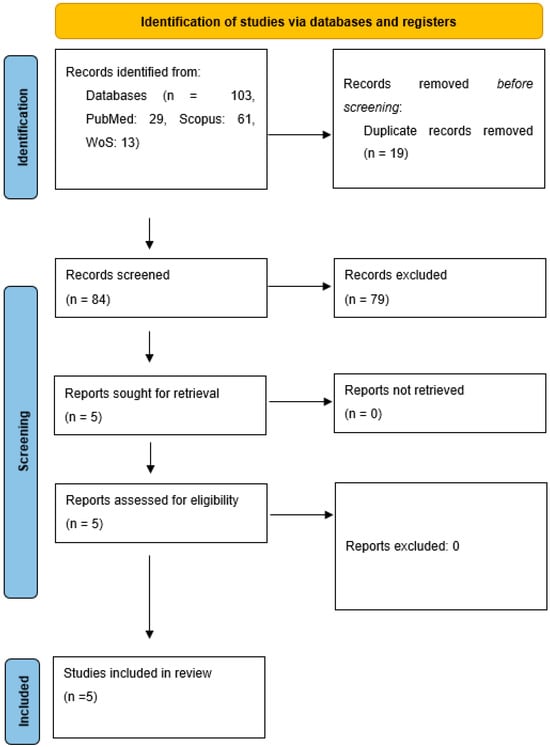

In just a few years, Artificial Intelligence (AI) has reached multiple areas of knowledge, including the medical and scientific fields. An increasing number of AI-based applications have been developed, among which conversational AI has emerged. Within conversational AI, ChatGPT has made headlines, scientific and otherwise, for its distinct ability to simulate a ‘real’ discussion with its interlocutor, given appropriate prompts. Although several clinical studies using ChatGPT have already been published, very little has yet been written about its potential application in human pathology. We conducted a systematic review following the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) guidelines, using PubMed, Scopus, and the Web of Science (WoS) as databases, with the keywords ChatGPT OR Chat GPT, each combined with pathology, diagnostic pathology, or anatomic pathology, for records published before 31 July 2023. A total of 103 records were initially identified in the literature search, of which 19 were duplicates, leaving 84 unique records. After screening for eligibility and inclusion criteria, only five publications were ultimately included. The most common type was original articles (n = 2), followed by a case report (n = 1), a letter to the editor (n = 1), and a review (n = 1). Furthermore, we performed a ‘query session’ with ChatGPT regarding pathologies such as pigmented skin lesions, malignant melanoma and its variants, the Gleason score of prostate adenocarcinoma, the differential diagnosis between germ cell tumors and high-grade serous carcinoma of the ovary, pleural mesothelioma, and pediatric diffuse midline glioma.
Although the premises are exciting and ChatGPT can co-advise the pathologist by providing large amounts of scientific data for use in routine microscopic diagnostic practice, there are many limitations (such as the training data, the amount of data available, and ‘hallucination’ phenomena) that need to be addressed and resolved, with the caveat that an AI-driven system should always provide support and never be the decision-maker in the histopathological diagnostic process.


(This article belongs to the Special Issue Artificial Intelligence in Healthcare: Current State and Future Perspectives)


Open Access Article

Enhancing Tuta absoluta Detection on Tomato Plants: Ensemble Techniques and Deep Learning

AI 2023, 4(4), 996-1009; https://doi.org/10.3390/ai4040050 - 20 Nov 2023

Abstract


Early detection and efficient management practices to control Tuta absoluta (Meyrick) infestation are crucial for safeguarding tomato yield and minimizing economic losses. This study investigates the detection of T. absoluta infestation on tomato plants using object detection models combined with ensemble techniques. It also highlights the importance of using a dataset captured in real open-field and greenhouse settings to address the complexity of real-life object detection challenges in plant health scenarios. The effectiveness of deep-learning-based models, including Faster R-CNN and RetinaNet, in detecting T. absoluta damage was evaluated. The initial evaluations revealed diminishing performance across various model configurations, including different backbones and heads. To enhance detection predictions and improve mean Average Precision (mAP) scores, ensemble techniques such as Non-Maximum Suppression (NMS), Soft Non-Maximum Suppression (Soft NMS), Non-Maximum Weighted (NMW), and Weighted Boxes Fusion (WBF) were applied. The results showed that the WBF technique significantly improved the mAP scores, yielding a 20% improvement from 0.58 (the maximum mAP of the individual models) to 0.70. These results contribute to the field of agricultural pest detection by emphasizing the potential of deep learning and ensemble techniques to improve the accuracy and reliability of object detection models.
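The difference between NMS and WBF explains why fusion can raise mAP: NMS keeps the single highest-scoring box among overlapping predictions and discards the rest, whereas WBF averages overlapping boxes, weighted by confidence, so every model contributes to the final localization. The sketch below is a simplified single-class illustration of our own, with boxes as `(x1, y1, x2, y2)`; the original WBF also rescales fused confidences by the number of contributing models, and Soft NMS and NMW are not shown. The open-source `ensemble_boxes` package provides fuller implementations.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_thr=0.5):
    """Return indices of boxes kept by classic non-maximum suppression."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    while order:
        i = order.pop(0)
        keep.append(i)
        # Drop remaining boxes that overlap the kept box too much.
        order = [j for j in order if iou(boxes[i], boxes[j]) < iou_thr]
    return keep


def weighted_boxes_fusion(boxes, scores, iou_thr=0.55):
    """Simplified WBF: cluster overlapping boxes and fuse each cluster.

    A box joins the fused box it overlaps most (IoU > iou_thr); the fused
    box is the score-weighted mean of its members' coordinates and its
    confidence is the members' mean score.
    """
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    clusters = []  # each entry: [fused_box, list_of_member_indices]
    for i in order:
        best, best_iou = None, iou_thr
        for cluster in clusters:
            overlap = iou(cluster[0], boxes[i])
            if overlap > best_iou:
                best, best_iou = cluster, overlap
        if best is None:
            clusters.append([tuple(boxes[i]), [i]])
            continue
        best[1].append(i)
        total = sum(scores[m] for m in best[1])
        best[0] = tuple(sum(scores[m] * boxes[m][c] for m in best[1]) / total
                        for c in range(4))
    return [(box, sum(scores[m] for m in members) / len(members))
            for box, members in clusters]
```

With two strongly overlapping detections of the same lesion (scores 0.9 and 0.6), NMS keeps only the first box, while WBF returns one box lying between them, pulled toward the more confident detection.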


(This article belongs to the Special Issue Artificial Intelligence in Agriculture)
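The abstract names NMS and WBF without describing how they differ. As a rough illustration, and not the authors' code, the sketch below contrasts greedy NMS, which keeps one box per overlapping group and discards the rest, with a simplified single-class version of Weighted Boxes Fusion, which averages overlapping boxes weighted by confidence. The box format `(x1, y1, x2, y2)`, the IoU thresholds (0.5 and 0.55), the function names, and the sample boxes are all assumptions for illustration.

```python
from typing import List, Optional, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) corner coordinates


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def nms(boxes: List[Box], scores: List[float], thr: float = 0.5) -> List[int]:
    """Greedy NMS: visit boxes by descending score and keep a box only if it does
    not overlap an already-kept box by more than thr. Returns kept indices."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        if all(iou(boxes[i], boxes[k]) <= thr for k in keep):
            keep.append(i)
    return keep


def wbf(boxes: List[Box], scores: List[float], num_models: int,
        thr: float = 0.55) -> List[Tuple[Box, float]]:
    """Simplified single-class Weighted Boxes Fusion over the pooled predictions
    of num_models models: overlapping boxes are averaged, not discarded."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    clusters: List[List[int]] = []  # member indices of each cluster
    fused: List[Box] = []           # current fused box of each cluster
    for i in order:
        # Match against the fused box with the highest IoU above thr, if any.
        best: Optional[int] = None
        best_iou = thr
        for c, f in enumerate(fused):
            overlap = iou(boxes[i], f)
            if overlap > best_iou:
                best, best_iou = c, overlap
        if best is None:
            clusters.append([i])
            fused.append(boxes[i])
            continue
        members = clusters[best]
        members.append(i)
        total = sum(scores[j] for j in members)
        # Fused coordinates: confidence-weighted mean of the member coordinates.
        x1, y1, x2, y2 = (
            sum(scores[j] * boxes[j][k] for j in members) / total for k in range(4)
        )
        fused[best] = (x1, y1, x2, y2)
    results: List[Tuple[Box, float]] = []
    for members, box in zip(clusters, fused):
        mean_score = sum(scores[j] for j in members) / len(members)
        # Clusters supported by fewer boxes than there are models get down-weighted.
        results.append((box, mean_score * min(len(members), num_models) / num_models))
    return results


# Hypothetical predictions: two models box the same lesion; a third box sits elsewhere.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.6, 0.8]
print(nms(boxes, scores))  # [0, 2]: the 0.6 box is suppressed
print(wbf(boxes, scores, num_models=2))
```

The design difference is visible in the output: NMS throws away the lower-confidence box, while WBF keeps its information by pulling the fused box toward it in proportion to its score.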


Open Access Article

Who Needs External References?—Text Summarization Evaluation Using Original Documents


AI 2023, 4(4), 970-995; https://doi.org/10.3390/ai4040049 - 15 Nov 2023

Abstract

►▼

Show Figures

Nowadays, individuals can be overwhelmed by a huge number of documents being present in daily life. Capturing the necessary details is often a challenge. Therefore, it is rather important to summarize documents to obtain the main information quickly. There currently exist automatic approaches

[...] Read more.

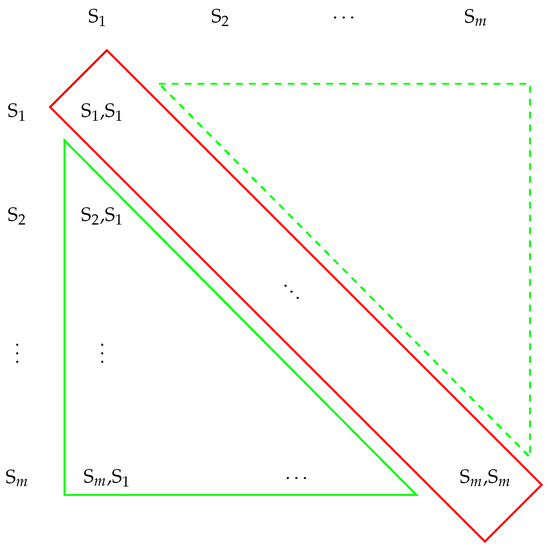

Nowadays, individuals can be overwhelmed by the huge number of documents present in daily life, and capturing the necessary details is often a challenge. It is therefore important to summarize documents so that the main information can be obtained quickly. Automatic approaches to this task exist, but their quality is often not properly assessed. State-of-the-art metrics rely on human-generated summaries as references for the evaluation; if no reference is given, the assessment becomes challenging. Therefore, in the absence of human-generated reference summaries, we investigated an alternative approach to evaluating machine-generated summaries. For this, we focus on the original text or document to derive a metric that allows a direct evaluation of automatically generated summaries. This approach is particularly helpful in cases where reference summaries are difficult or costly to obtain. In this paper, we present a novel metric called Summary Score without Reference (SUSWIR), which is based on four factors already known in the text summarization community (Semantic Similarity, Redundancy, Relevance, and Bias Avoidance Analysis) and which overcomes drawbacks of common metrics. With it, we aim to close a gap in the current evaluation environment for machine-generated text summaries. The novel metric is introduced theoretically and tested on five datasets from their respective domains. The experiments conducted with SUSWIR yielded noteworthy outcomes.
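The abstract lists the four factors behind SUSWIR but not how they are computed or weighted, so the following is not the SUSWIR formula. Purely to illustrate what a reference-free check looks like, this sketch scores a summary against its own source using two of the named ideas: a bag-of-words cosine similarity as a crude stand-in for semantic similarity, and mean pairwise sentence similarity as a redundancy measure. The proxies, function names, and sample texts are assumptions.

```python
import math
import re
from collections import Counter
from itertools import combinations
from typing import List


def bag_of_words(text: str) -> Counter:
    """Lower-cased word counts: a crude stand-in for a semantic embedding."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity of two sparse count vectors (0.0 if either is empty)."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def source_similarity(source: str, summary: str) -> float:
    """Similarity of the summary to its source document (higher is better)."""
    return cosine(bag_of_words(source), bag_of_words(summary))


def redundancy(summary_sentences: List[str]) -> float:
    """Mean pairwise similarity between summary sentences (lower is better)."""
    pairs = list(combinations(summary_sentences, 2))
    if not pairs:
        return 0.0
    return sum(cosine(bag_of_words(a), bag_of_words(b)) for a, b in pairs) / len(pairs)


source = "The cat sat on the mat. The dog chased the cat around the garden."
varied = ["The cat sat on the mat.", "The dog chased the cat."]
repetitive = ["The cat sat on the mat.", "The cat sat on the mat again."]
print(source_similarity(source, " ".join(varied)))
print(redundancy(varied), redundancy(repetitive))
```

The only input besides the summary is the original document, which is the property the abstract emphasizes; a real metric would replace word counts with semantic embeddings and add the remaining factors.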


Open Access Article

Chatbots Put to the Test in Math and Logic Problems: A Comparison and Assessment of ChatGPT-3.5, ChatGPT-4, and Google Bard

AI 2023, 4(4), 949-969; https://doi.org/10.3390/ai4040048 - 24 Oct 2023

Cited by 1

Abstract

In an age where artificial intelligence is reshaping the landscape of education and problem solving, our study unveils the secrets behind three digital wizards, ChatGPT-3.5, ChatGPT-4, and Google Bard, as they engage in a thrilling showdown of mathematical and logical prowess. We assess

[...] Read more.

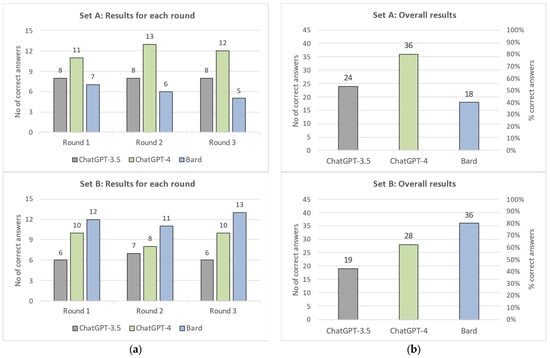

In an age where artificial intelligence is reshaping the landscape of education and problem solving, our study unveils the secrets behind three digital wizards, ChatGPT-3.5, ChatGPT-4, and Google Bard, as they engage in a thrilling showdown of mathematical and logical prowess. We assess the ability of the chatbots to understand the given problem, employ appropriate algorithms or methods to solve it, and generate coherent responses with correct answers. We conducted our study using a set of 30 questions. These questions were carefully crafted to be clear, unambiguous, and fully described using plain text only. Each question has a unique and well-defined correct answer. The questions were divided into two sets of 15: Set A consists of “Original” problems that cannot be found online, while Set B includes “Published” problems that are readily available online, often with their solutions. Each question was presented to each chatbot three times in May 2023. We recorded and analyzed their responses, highlighting their strengths and weaknesses. Our findings indicate that chatbots can provide accurate solutions for straightforward arithmetic, algebraic expressions, and basic logic puzzles, although they may not be consistently accurate in every attempt. However, for more complex mathematical problems or advanced logic tasks, the chatbots’ answers, although they appear convincing, may not be reliable. Furthermore, consistency is a concern as chatbots often provide conflicting answers when presented with the same question multiple times. To evaluate and compare the performance of the three chatbots, we conducted a quantitative analysis by scoring their final answers based on correctness. Our results show that ChatGPT-4 performs better than ChatGPT-3.5 in both sets of questions. Bard ranks third in the original questions of Set A, trailing behind the other two chatbots. However, Bard achieves the best performance, taking first place in the published questions of Set B. This is likely due to Bard’s direct access to the internet, unlike the ChatGPT chatbots, which, due to their designs, do not have external communication capabilities.


(This article belongs to the Topic AI Chatbots: Threat or Opportunity?)
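The abstract does not spell out its scoring rubric. One possible scheme, sketched here under the assumption that normalized final answers are compared by exact match, separates the two properties the study highlights: correctness across the three attempts per question and consistency between those attempts. The function names, the normalization (whitespace stripping and lower-casing), and the sample answers are hypothetical, not the authors' procedure.

```python
from typing import List, Tuple


def score_question(attempts: List[str], correct: str) -> Tuple[float, bool]:
    """Return (fraction of attempts whose final answer is correct, whether all attempts agree)."""
    normalized = [a.strip().lower() for a in attempts]
    target = correct.strip().lower()
    accuracy = sum(a == target for a in normalized) / len(normalized)
    consistent = len(set(normalized)) == 1
    return accuracy, consistent


def summarize(results: List[Tuple[float, bool]]) -> Tuple[float, float]:
    """Mean accuracy and fraction of consistently answered questions over one question set."""
    n = len(results)
    return sum(acc for acc, _ in results) / n, sum(c for _, c in results) / n


# Hypothetical final answers from three runs of the same question.
print(score_question(["42", "42", "41"], "42"))  # (0.6666666666666666, False)
```

Keeping consistency as a separate flag matters because a chatbot can be right on average while still contradicting itself between runs, which is the behaviour the study reports.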


Open Access Article

Deep Learning Performance Characterization on GPUs for Various Quantization Frameworks

AI 2023, 4(4), 926-948; https://doi.org/10.3390/ai4040047 - 18 Oct 2023

Abstract

Deep learning is employed in many applications, such as computer vision, natural language processing, robotics, and recommender systems. Large and complex neural networks lead to high accuracy; however, they adversely affect many aspects of deep learning performance, such as training time, latency, throughput,

[...] Read more.

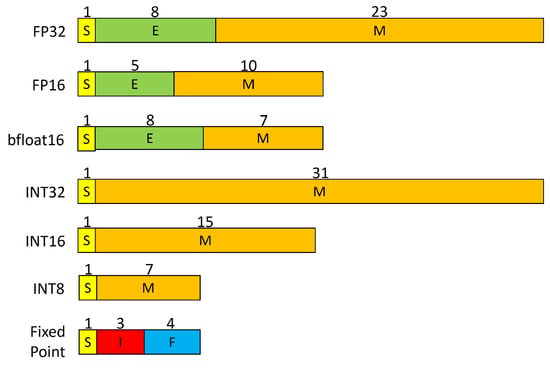

Deep learning is employed in many applications, such as computer vision, natural language processing, robotics, and recommender systems. Large and complex neural networks can achieve high accuracy; however, they adversely affect many aspects of deep learning performance, such as training time, latency, throughput, energy consumption, and memory usage in the training and inference stages. To address these challenges, various optimization techniques and frameworks have been developed for the efficient execution of deep learning models in the training and inference stages. Although optimization techniques such as quantization have been studied thoroughly in the past, less work has been done to study the performance of frameworks that provide quantization techniques. In this paper, we use different performance metrics to study the performance of various quantization frameworks, including TensorFlow automatic mixed precision and TensorRT. These performance metrics include training time and memory utilization in the training stage, along with latency and throughput for graphics processing units (GPUs) in the inference stage. We applied the automatic mixed precision (AMP) technique during the training stage using the TensorFlow framework, while for inference we utilized the TensorRT framework for post-training quantization through the TensorFlow TensorRT (TF-TRT) application programming interface (API). We performed model profiling for different deep learning models, datasets, image sizes, and batch sizes for both the training and inference stages; the results can help developers and researchers design and deploy efficient deep learning models for GPUs.


(This article belongs to the Special Issue Artificial Intelligence-Based Image Processing and Computer Vision)
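The abstract relies on post-training quantization through TF-TRT without showing what quantization does to the numbers. As a framework-independent illustration, and not TensorRT's calibration procedure, this sketch applies symmetric per-tensor int8 quantization to a short list of weights and checks that round-to-nearest keeps each reconstruction error within half a quantization step. It also spells out the usual relation between the two inference metrics, throughput = batch size / latency, assuming latency is measured per batch. The function names and sample weights are assumptions.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|x|, max|x|] onto integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map int8 codes back to approximate real values."""
    return [x * scale for x in q]


def throughput(batch_size: int, latency_s: float) -> float:
    """Samples per second, assuming one batch of batch_size takes latency_s seconds."""
    return batch_size / latency_s


weights = [0.123, -1.27, 0.0, 0.9]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
# Round-to-nearest keeps each error within half a quantization step (scale / 2).
print(q, max(abs(w - r) for w, r in zip(weights, restored)) <= scale / 2)
```

Storing 8-bit codes plus one scale per tensor is what reduces memory traffic at inference time; the price is the bounded rounding error shown above, which is why accuracy has to be re-checked after quantization.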


Open Access Article

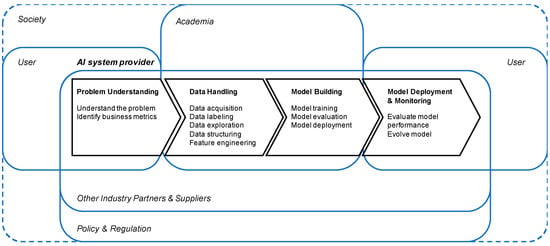

From Trustworthy Principles to a Trustworthy Development Process: The Need and Elements of Trusted Development of AI Systems


AI 2023, 4(4), 904-925; https://doi.org/10.3390/ai4040046 - 13 Oct 2023

Abstract


The current endeavor of moving AI ethics from theory to practice can frequently be observed in academia and industry and indicates a major achievement in the theoretical understanding of responsible AI. Its practical application, however, currently poses challenges, as mechanisms for translating the proposed principles into easily feasible actions are often considered unclear and not ready for practice. In particular, practitioners often highlight the lack of uniform, standardized approaches aligned with regulatory provisions as a major obstacle to the practical realization of AI governance. To address these challenges, we propose shifting the focus from solely the trustworthiness of AI products to the perceived trustworthiness of the development process, and we introduce a concept for a trustworthy development process for AI systems. We derive this process from a semi-systematic literature analysis of common AI governance documents, identifying the most prominent measures for operationalizing responsible AI, and compare them to the implications of EU-centered regulatory frameworks for AI providers. Assessing the resulting process against derived characteristics of trustworthy processes shows that, although a lack of clarity is often mentioned as a major drawback and many AI providers tend to wait for finalized regulations before reacting, the summarized landscape of proposed AI governance mechanisms can already cover many of the binding and non-binding demands, which revolve around similar activities to address fundamental risks. Furthermore, while many factors of procedural trustworthiness are already fulfilled, limitations remain, particularly due to the vagueness of currently proposed measures, which calls for detailing the measures based on use cases and the system’s context.


(This article belongs to the Special Issue Standards and Ethics in AI)


Open Access Concept Paper

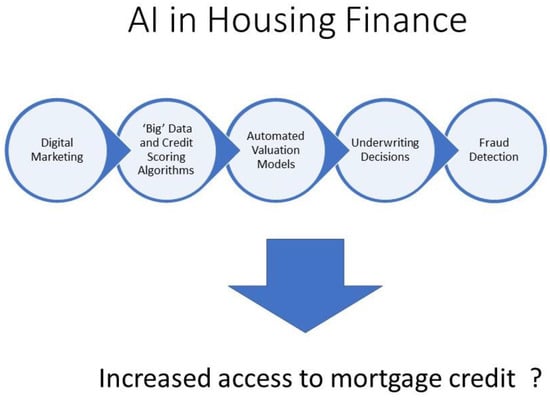

Algorithms for All: Can AI in the Mortgage Market Expand Access to Homeownership?

AI 2023, 4(4), 888-903; https://doi.org/10.3390/ai4040045 - 11 Oct 2023

Cited by 1

Abstract


Artificial intelligence (AI) is transforming the mortgage market at every stage of the value chain. In this paper, we examine the potential for the mortgage industry to leverage AI to overcome the historical and systemic barriers to homeownership for members of Black, Brown, and lower-income communities. We begin by proposing societal, ethical, legal, and practical criteria that should be considered in the development and implementation of AI models. Based on this framework, we discuss the applications of AI that are transforming the mortgage market, including digital marketing, the inclusion of non-traditional “big data” in credit scoring algorithms, AI property valuation, and loan underwriting models. We conclude that although the current AI models may reflect the same biases that have existed historically in the mortgage market, opportunities exist for proactive, responsible AI model development designed to remove the systemic barriers to mortgage credit access.


(This article belongs to the Special Issue Standards and Ethics in AI)


Topics

Topic in AI, Applied Sciences, Electronics, IJGI, Remote Sensing, Robotics, Sensors

Artificial Intelligence in Navigation

Topic Editors: Arpad Barsi, Eliseo Clementini

Deadline: 20 January 2024

Topic in AI, Algorithms, Applied Sciences, Energies, JNE

Intelligent, Explainable and Trustworthy AI for Advanced Nuclear and Sustainable Energy Systems

Topic Editors: Dinesh Kumar, Syed Bahauddin Alam

Deadline: 31 January 2024

Topic in AI, BDCC, Economies, IJFS, JTAER, Sustainability

Artificial Intelligence Applications in Financial Technology

Topic Editors: Albert Y.S. Lam, Yanhui Geng

Deadline: 1 March 2024

Topic in Applied Sciences, Bioengineering, Healthcare, IJERPH, JCM, AI

Artificial Intelligence in Public Health: Current Trends and Future Possibilities

Topic Editors: Daniele Giansanti, Giovanni Costantini

Deadline: 31 March 2024


Special Issues

Special Issue in AI

Deep Reinforcement Learning for Multi-Agent Systems

Guest Editor: Guoxin Su

Deadline: 31 January 2024

Special Issue in AI

Machine Learning for HCI: Cases, Trends and Challenges

Guest Editors: Maria Rigou, Spiros Sirmakessis

Deadline: 29 February 2024

Special Issue in AI

Assisted Living of the Elderly: Recent Advances, Systems, and Frameworks

Guest Editor: Nirmalya Thakur

Deadline: 15 March 2024

Special Issue in AI

AI in Finance: Leveraging AI to Transform Financial Services

Guest Editor: Xianrong (Shawn) Zheng

Deadline: 30 June 2024
