Journal Description

Big Data and Cognitive Computing

Big Data and Cognitive Computing is an international, peer-reviewed, open access journal on big data and cognitive computing published monthly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q1 (Management Information Systems)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 18.2 days after submission; acceptance to publication is undertaken in 3.9 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.7 (2022)

Latest Articles

A Survey of Incremental Deep Learning for Defect Detection in Manufacturing

Big Data Cogn. Comput. 2024, 8(1), 7; https://doi.org/10.3390/bdcc8010007 - 05 Jan 2024

Abstract

Deep-learning-based visual cognition has greatly improved the accuracy of defect detection, reducing processing times and increasing product throughput across a variety of manufacturing use cases. There is, however, a continuing need for rigorous procedures to dynamically update model-based detection methods that use sequential streaming during the training phase. This paper reviews how new process, training, or validation information is rigorously incorporated in real time when detection exceptions arise during inspection. In particular, consideration is given to how new tasks, classes, or decision pathways are added to existing models or datasets in a controlled fashion. An analysis of studies from the incremental learning literature is presented, with an emphasis on mitigating process complexity challenges such as catastrophic forgetting. Further, practical implementation issues known to affect the complexity of deep learning model architectures, including memory allocation for incoming sequential data and incremental learning accuracy, are considered. The paper highlights case study results and methods that have been used to successfully mitigate such real-time manufacturing challenges.

(This article belongs to the Topic Electronic Communications, IOT and Big Data)

Open Access Article

Enhancing Credit Card Fraud Detection: An Ensemble Machine Learning Approach

Big Data Cogn. Comput. 2024, 8(1), 6; https://doi.org/10.3390/bdcc8010006 - 03 Jan 2024

Abstract

In the era of digital advancements, the escalation of credit card fraud necessitates the development of robust and efficient fraud detection systems. This paper delves into the application of machine learning models, specifically focusing on ensemble methods, to enhance credit card fraud detection. Through an extensive review of existing literature, we identified limitations in current fraud detection technologies, including issues like data imbalance, concept drift, false positives/negatives, limited generalisability, and challenges in real-time processing. To address some of these shortcomings, we propose a novel ensemble model that integrates Support Vector Machine (SVM), K-Nearest Neighbor (KNN), Random Forest (RF), Bagging, and Boosting classifiers. This ensemble model tackles the class imbalance problem associated with most credit card datasets by applying under-sampling and the Synthetic Minority Over-sampling Technique (SMOTE) to some of the machine learning algorithms. The model is evaluated on a dataset of transaction records from European credit card holders, providing a realistic scenario for assessment. The methodology of the proposed model encompasses data pre-processing, feature engineering, model selection, and evaluation, with Google Colab's computational resources facilitating efficient model training and testing. Comparative analysis between the proposed ensemble model, traditional machine learning methods, and individual classifiers reveals the superior performance of the ensemble in mitigating challenges associated with credit card fraud detection. Across accuracy, precision, recall, and F1-score metrics, the ensemble outperforms existing models. This paper underscores the efficacy of ensemble methods as a valuable tool in the battle against fraudulent transactions.
The findings presented lay the groundwork for future advancements in the development of more resilient and adaptive fraud detection systems, which will become crucial as credit card fraud techniques continue to evolve.
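
The imbalance handling described above can be illustrated with a minimal, stdlib-only Python sketch of random under-sampling and SMOTE-style interpolation. This is not the authors' pipeline: the function names, the choice of `k`, and the toy data are assumptions for illustration, and in practice a maintained implementation such as imbalanced-learn's `SMOTE` class would normally be preferred.

```python
import random

def _sq_dist(a, b):
    # Squared Euclidean distance between two feature vectors.
    return sum((x - y) ** 2 for x, y in zip(a, b))

def smote(minority, n_new, k=5, seed=0):
    """Create n_new synthetic minority-class samples.

    Each sample lies on the segment between a randomly chosen minority
    point and one of its k nearest minority-class neighbours.
    Requires at least two minority points.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # k nearest neighbours of `base` among the other minority points
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: _sq_dist(base, p))[:k]
        nb = rng.choice(neighbours)
        gap = rng.random()  # interpolation factor in [0, 1)
        synthetic.append([b + gap * (n - b) for b, n in zip(base, nb)])
    return synthetic

def random_undersample(majority, n_keep, seed=0):
    # Keep a random subset of n_keep majority-class samples (without replacement).
    return random.Random(seed).sample(majority, n_keep)

# Hypothetical toy data: 3 "fraud" points versus 100 "legitimate" points.
fraud = [[1.0, 1.0], [2.0, 2.0], [1.5, 2.5]]
legit = [[float(i), 0.0] for i in range(100)]
balanced = random_undersample(legit, 10) + fraud + smote(fraud, n_new=7, k=2)
print(len(balanced))  # 20 samples: 10 majority + 3 original + 7 synthetic minority
```

SMOTE places each synthetic point on the line segment between two existing minority samples, so the new fraud examples fill in the region already occupied by the minority class instead of duplicating existing rows.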

Open Access Article

Unveiling Sentiments: A Comprehensive Analysis of Arabic Hajj-Related Tweets from 2017–2022 Utilizing Advanced AI Models

Big Data Cogn. Comput. 2024, 8(1), 5; https://doi.org/10.3390/bdcc8010005 - 02 Jan 2024

Abstract

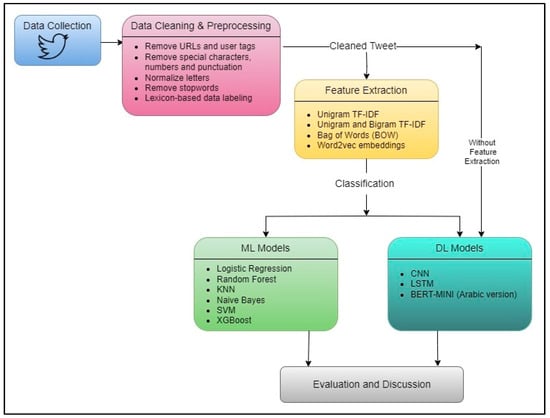

Sentiment analysis plays a crucial role in understanding public opinion and social media trends. It involves analyzing the emotional tone and polarity of a given text. When applied to Arabic text, this task becomes particularly challenging due to the language’s complex morphology, right-to-left

[...] Read more.

Sentiment analysis plays a crucial role in understanding public opinion and social media trends. It involves analyzing the emotional tone and polarity of a given text. When applied to Arabic text, this task becomes particularly challenging due to the language’s complex morphology, right-to-left script, and intricate nuances in expressing emotions. Social media has emerged as a powerful platform for individuals to express their sentiments, especially regarding religious and cultural events. Consequently, studying sentiment analysis in the context of Hajj has become a captivating subject. This research paper presents a comprehensive sentiment analysis of tweets discussing the annual Hajj pilgrimage over a six-year period. By employing a combination of machine learning and deep learning models, this study successfully conducted sentiment analysis on a sizable dataset consisting of Arabic tweets. The process involves pre-processing, feature extraction, and sentiment classification. The objective was to uncover the prevailing sentiments associated with Hajj over different years, before, during, and after each Hajj event. Importantly, the results presented in this study highlight that BERT, an advanced transformer-based model, outperformed other models in accurately classifying sentiment. This underscores its effectiveness in capturing the complexities inherent in Arabic text.

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

BNMI-DINA: A Bayesian Cognitive Diagnosis Model for Enhanced Personalized Learning

Big Data Cogn. Comput. 2024, 8(1), 4; https://doi.org/10.3390/bdcc8010004 - 29 Dec 2023

Abstract

In the field of education, cognitive diagnosis is crucial for achieving personalized learning. The widely adopted DINA (Deterministic Inputs, Noisy And gate) model uncovers students’ mastery of essential skills necessary to answer questions correctly. However, existing DINA-based approaches overlook the dependency between knowledge points, and their model training process is computationally inefficient for large datasets. In this paper, we propose a new cognitive diagnosis model called BNMI-DINA, which stands for Bayesian Network-based Multiprocess Incremental DINA. Our proposed model aims to enhance personalized learning by providing accurate and detailed assessments of students’ cognitive abilities. By incorporating a Bayesian network, BNMI-DINA establishes the dependency relationship between knowledge points, enabling more accurate evaluations of students’ mastery levels. To enhance model convergence speed, key steps of our proposed algorithm are parallelized. We also provide theoretical proof of the convergence of BNMI-DINA. Extensive experiments demonstrate that our approach effectively enhances model accuracy and reduces computational time compared to state-of-the-art cognitive diagnosis models.
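
For readers unfamiliar with the base model, the standard DINA item response function can be sketched as follows. This covers only plain DINA, in which a student answers item j correctly with probability 1 − s_j (slip) if they master every skill that the item's Q-matrix row requires, and with probability g_j (guess) otherwise. BNMI-DINA's Bayesian network over knowledge points and its parallelized estimation are not shown, and the example values are hypothetical.

```python
from typing import Sequence

def dina_eta(alpha: Sequence[int], q_row: Sequence[int]) -> int:
    """Ideal response eta_ij: 1 if the student masters every skill the item requires."""
    return int(all(a == 1 for a, q in zip(alpha, q_row) if q == 1))

def dina_prob_correct(alpha, q_row, slip: float, guess: float) -> float:
    """DINA item response function: P(X_ij = 1) = (1 - s_j)^eta * g_j^(1 - eta)."""
    eta = dina_eta(alpha, q_row)
    return (1.0 - slip) ** eta * guess ** (1 - eta)

def dina_likelihood(alpha, responses, Q, slips, guesses) -> float:
    """Likelihood of one student's 0/1 response vector given skill profile alpha.
    Responses are assumed conditionally independent given alpha."""
    lik = 1.0
    for x, q_row, s, g in zip(responses, Q, slips, guesses):
        p = dina_prob_correct(alpha, q_row, s, g)
        lik *= p if x == 1 else 1.0 - p
    return lik

# Hypothetical example: the student masters skills 0 and 2 but not skill 1.
alpha = [1, 0, 1]
Q = [[1, 0, 1],   # item 0 requires skills 0 and 2
     [0, 1, 0]]   # item 1 requires skill 1
# approximately 0.72, i.e. (1 - 0.1) * (1 - 0.2)
print(dina_likelihood(alpha, [1, 0], Q, slips=[0.1, 0.1], guesses=[0.2, 0.2]))
```

Estimating the slip and guess parameters (for example by EM over all candidate skill profiles) is the computationally heavy part that the abstract says BNMI-DINA parallelizes.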

Open Access Article

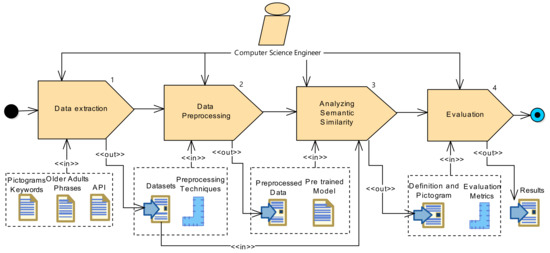

Semantic Similarity of Common Verbal Expressions in Older Adults through a Pre-Trained Model

Big Data Cogn. Comput. 2024, 8(1), 3; https://doi.org/10.3390/bdcc8010003 - 29 Dec 2023

Abstract

Health problems in older adults can make communication with peers, family, and caregivers challenging for seniors; therefore, alternative methods are needed to facilitate communication. In this context, Augmentative and Alternative Communication (AAC) methods are widely used to support this population segment. Moreover, AAC can be improved with Artificial Intelligence (AI), and specifically with machine learning algorithms. Although there have been several studies in this field, it is worth analyzing the common phrases used by seniors in their context (i.e., slang and everyday expressions typical of their age). This paper proposes a semantic analysis of the common phrases of older adults and their corresponding meanings through Natural Language Processing (NLP) techniques and a pre-trained language model, using semantic textual similarity to represent the older adults’ phrases with their corresponding graphic images (pictograms). The results show high semantic similarity scores between the older adults’ phrases and the definitions, indicating a high probability that each phrase is correctly related to its pictogram.

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

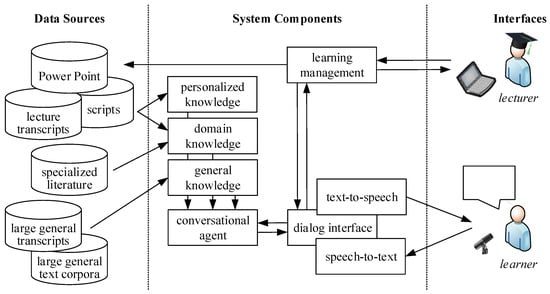

Knowledge-Based and Generative-AI-Driven Pedagogical Conversational Agents: A Comparative Study of Grice’s Cooperative Principles and Trust

Big Data Cogn. Comput. 2024, 8(1), 2; https://doi.org/10.3390/bdcc8010002 - 26 Dec 2023

Abstract

The emergence of generative language models (GLMs), such as OpenAI’s ChatGPT, is changing the way we communicate with computers and has a major impact on the educational landscape. While GLMs have great potential to support education, their use is not unproblematic, as they suffer from hallucinations and misinformation. In this paper, we investigate how a very limited amount of domain-specific data, from lecture slides and transcripts, can be used to build knowledge-based and generative educational chatbots. We found that knowledge-based chatbots allow full control over the system’s response but lack the verbosity and flexibility of GLMs. The answers provided by GLMs are more trustworthy and offer greater flexibility, but their correctness cannot be guaranteed. Adapting GLMs to domain-specific data trades flexibility for correctness.

(This article belongs to the Special Issue Artificial Intelligence and Natural Language Processing)

Open Access Article

Distributed Bayesian Inference for Large-Scale IoT Systems

by

, , , , and

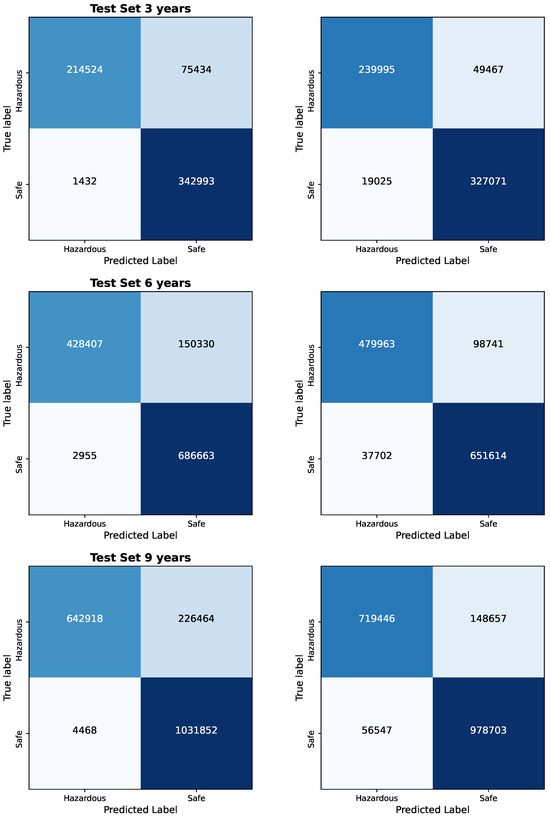

Big Data Cogn. Comput. 2024, 8(1), 1; https://doi.org/10.3390/bdcc8010001 - 19 Dec 2023

Abstract

In this work, we present a Distributed Bayesian Inference Classifier for Large-Scale Systems and assess its performance and scalability in distributed environments such as PySpark. The classifier consistently shows efficient inference times regardless of test set size, implying a robust ability to handle growing data volumes without a proportional increase in computational demands. Throughout the experiments, memory usage increases with test set size, but this increase is sublinear, demonstrating the classifier's efficiency in memory resource management. This behavior is consistent with typical PySpark workloads, whose memory consumption grows with dataset size due to data partitioning and various data operations. CPU utilization, another crucial factor, also remains stable, emphasizing the classifier's capability to manage larger computational workloads without significant resource strain. From a classification perspective, the Bayesian Logistic Regression Spark Classifier consistently achieves reliable performance metrics, most notably high specificity, indicating its suitability for applications where correctly identifying true negatives is crucial. In summary, based on all experiments conducted under various data sizes, our classifier emerges as a top contender for scalability-driven applications in IoT systems, highlighting its dependable performance, effective resource management, and consistent prediction accuracy.

Open Access Article

Extraction of Significant Features by Fixed-Weight Layer of Processing Elements for the Development of an Efficient Spiking Neural Network Classifier

Big Data Cogn. Comput. 2023, 7(4), 184; https://doi.org/10.3390/bdcc7040184 - 18 Dec 2023

Abstract

In this paper, we demonstrate that fixed-weight layers generated from a random distribution or from logistic functions can effectively extract significant features from input data, resulting in high accuracy on a variety of tasks, including the Fisher’s Iris, Wisconsin Breast Cancer, and MNIST datasets. We observed that logistic functions yield high accuracy with less dispersion in the results. We also assessed the precision of our approach when the number of spikes generated in the network is minimized, which is practically useful for reducing energy consumption in spiking neural networks. Our findings reveal that the proposed method, with decoding by logistic regression, achieves the highest accuracy on the Fisher’s Iris and MNIST datasets. Furthermore, on the Wisconsin Breast Cancer dataset, it surpasses the accuracy of the conventional (non-spiking) approach that uses logistic regression alone. We also investigated the impact of non-stochastic spike generation on accuracy.

(This article belongs to the Special Issue Computational Intelligence: Spiking Neural Networks)

Open Access Article

An Artificial-Intelligence-Driven Spanish Poetry Classification Framework

Big Data Cogn. Comput. 2023, 7(4), 183; https://doi.org/10.3390/bdcc7040183 - 14 Dec 2023

Abstract

Spain possesses a vast number of poems. Most have features that mean they present significantly different styles. A superficial reading of these poems may confuse readers due to their complexity. Therefore, it is of vital importance to classify the style of the poems

[...] Read more.

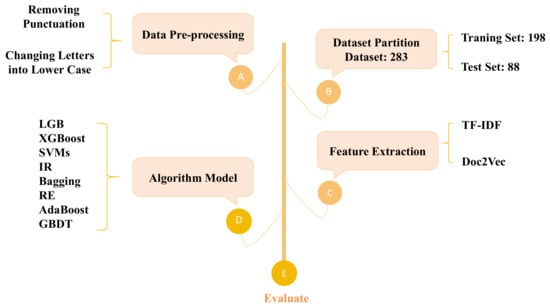

Spain possesses a vast number of poems. Most have features that give them significantly different styles, and because of this complexity a superficial reading may confuse readers. Therefore, it is of vital importance to classify the style of the poems in advance. Currently, poetry classification is mostly carried out manually, which places extremely high demands on the expertise of the people performing the classification and consumes a large amount of time. Furthermore, the objectivity of the classification cannot be guaranteed because of the classifier’s subjectivity. To solve these problems, a Spanish poetry classification framework was designed using artificial intelligence technology, improving the accuracy, efficiency, and objectivity of classification. First, the artificial-intelligence-driven Spanish poetry classification framework is described in detail and illustrated by a framework diagram that represents each step in the process. The framework includes many algorithms and models, such as Term Frequency–Inverse Document Frequency (TF-IDF), Bagging, Support Vector Machines (SVMs), Adaptive Boosting (AdaBoost), logistic regression (LR), Gradient Boosting Decision Trees (GBDT), LightGBM (LGB), eXtreme Gradient Boosting (XGBoost), and Random Forest (RF). The role of each algorithm in the framework is clearly defined. Finally, experiments were performed for model selection, comparing the results of these algorithms. The Bagging model stood out for its high accuracy, and the experimental results showed that the proposed framework can help researchers carry out poetry research more efficiently, accurately, and objectively.
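
As an illustration of the feature-extraction stage of such a framework, a plain-Python TF-IDF weighting is sketched below under stated assumptions: documents are pre-tokenized lists of words, term frequency is the raw count divided by document length, and idf is log(N / df). This is one common variant, not necessarily the one the authors used; libraries differ (scikit-learn's `TfidfVectorizer`, for example, smooths the idf by default). The toy poems are hypothetical.

```python
import math
from collections import Counter

def tf_idf(docs):
    """docs: list of token lists. Returns one {term: weight} dict per document.

    tf  = count(term in doc) / len(doc)
    idf = log(N / df), where df is the number of documents containing the term.
    """
    n_docs = len(docs)
    # Document frequency: count each term at most once per document.
    df = Counter(term for doc in docs for term in set(doc))
    vectors = []
    for doc in docs:
        counts = Counter(doc)
        length = len(doc)
        vectors.append({
            term: (c / length) * math.log(n_docs / df[term])
            for term, c in counts.items()
        })
    return vectors

# Hypothetical toy corpus of two tokenized verses.
poems = [["amor", "luna"], ["amor", "mar"]]
for vec in tf_idf(poems):
    print(vec)
```

A term that appears in every document ("amor" here) gets weight zero, which is why TF-IDF tends to emphasize the vocabulary that distinguishes one style from another; the resulting vectors would then feed classifiers such as Bagging or SVMs.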

(This article belongs to the Special Issue Artificial Intelligence and Natural Language Processing)

Open Access Article

Computers’ Interpretations of Knowledge Representation Using Pre-Conceptual Schemas: An Approach Based on the BERT and Llama 2-Chat Models

Big Data Cogn. Comput. 2023, 7(4), 182; https://doi.org/10.3390/bdcc7040182 - 14 Dec 2023

Abstract

Pre-conceptual schemas are a straightforward way to represent knowledge using controlled language regardless of context. Despite their benefits for human users, pre-conceptual schemas are challenging for computers to interpret. We propose an approach that enables computers to interpret basic pre-conceptual schemas made by humans. This requires building a linguistic corpus to work with large language models (LLMs). The linguistic corpus was built mainly from Master’s and doctoral theses in the digital repository of the University of Nariño to produce a training dataset for re-training the BERT model; in addition, we complement this by explaining the sentences elicited as triads from the pre-conceptual schemas using one of the cutting-edge large language models in natural language processing: Llama 2-Chat by Meta AI. The diverse topics covered in these theses allowed us to broaden the range of linguistic usage in the BERT model and to strengthen the generative capabilities using the fine-tuned Llama 2-Chat model and the proposed solution. As a result, the first version of a computational solution was built that consumes the BERT- and Llama 2-Chat-based language models to automatically interpret pre-conceptual schemas via natural language processing while adding generative capabilities. The computational solution was validated in two phases. The first phase, focused on detecting sentences and interacting with pre-conceptual schemas, was carried out with students in the Formal Languages and Automata Theory course (seventh semester of the systems engineering undergraduate program at the University of Nariño’s Tumaco campus). The second phase, which explored the generative capabilities based on pre-conceptual schemas, was carried out with students in the Object-oriented Design course (second semester of the same program at the Tumaco campus).
This validation yielded favorable results in implementing natural language processing using the BERT and Llama 2-Chat models, laying some groundwork for future developments on this research topic.

(This article belongs to the Special Issue Knowledge Representation Formalisms for AI Applications)

Open Access Article

Text Classification Based on the Heterogeneous Graph Considering the Relationships between Documents

Big Data Cogn. Comput. 2023, 7(4), 181; https://doi.org/10.3390/bdcc7040181 - 13 Dec 2023

Abstract

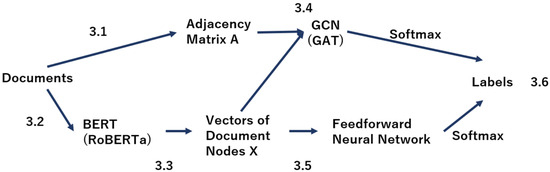

Text classification is the task of estimating the genre of a document based on information such as word co-occurrence and frequency of occurrence. Text classification has been studied by various approaches. In this study, we focused on text classification using graph structure data.

[...] Read more.

Text classification is the task of estimating the genre of a document based on information such as word co-occurrence and frequency of occurrence, and it has been studied using various approaches. In this study, we focus on text classification using graph-structured data. Conventional graph-based methods express relationships between words, and between words and documents, as weights between nodes, and then use a graph neural network for learning. However, conventional methods cannot represent the relationships between documents on the graph. In this paper, we propose a graph structure that considers the relationships between documents. In the proposed method, the cosine similarity of document vectors is set as the weight between document nodes, completing a graph that accounts for inter-document relationships. The graph is then input into a graph convolutional neural network for training. The aim of this study is thus to improve the text classification performance of conventional methods by using this graph. We conducted evaluation experiments on five different corpora of English documents. The results showed that the proposed method outperformed the conventional method by up to 1.19%, indicating that using relationships between documents is effective. In addition, the proposed method proved particularly effective in classifying long documents.
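
The document-to-document edges described above can be sketched as follows, assuming each document is already represented by a fixed-length numeric vector (the abstract does not say how the document vectors are built). The `threshold` parameter is a hypothetical addition for pruning weak edges; the word-word and word-document edges and the graph convolutional network itself are omitted.

```python
import math

def cosine(u, v):
    # Cosine similarity; returns 0.0 if either vector has zero norm.
    dot = sum(a * b for a, b in zip(u, v))
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return dot / (nu * nv) if nu and nv else 0.0

def document_edges(doc_vectors, threshold=0.0):
    """Weighted undirected edges between document nodes.

    Edge weight = cosine similarity of the two document vectors.
    Only pairs whose similarity exceeds `threshold` (hypothetical) are kept.
    Returns {(i, j): weight} with i < j.
    """
    edges = {}
    n = len(doc_vectors)
    for i in range(n):
        for j in range(i + 1, n):
            w = cosine(doc_vectors[i], doc_vectors[j])
            if w > threshold:
                edges[(i, j)] = w
    return edges

# Hypothetical 2-dimensional document vectors.
docs = [[1.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
print(document_edges(docs))  # documents 0 and 2 are orthogonal, so they get no edge
```

The pairwise loop is quadratic in the number of documents; for large corpora a sparse or approximate nearest-neighbour construction would likely be needed, but that is a design choice the abstract does not address.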

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

Understanding the Influence of Genre-Specific Music Using Network Analysis and Machine Learning Algorithms

Big Data Cogn. Comput. 2023, 7(4), 180; https://doi.org/10.3390/bdcc7040180 - 04 Dec 2023

Abstract

This study analyzes a network of musical influence using machine learning and network analysis techniques. A directed network model represents artists as nodes and the influence relations between them as edges. Network properties and centrality measures are analyzed to identify influential patterns. In addition, influence within and outside a genre is quantified using in-genre and out-genre weights. Regression analysis is performed to determine the impact of musical attributes on influence. We find that speechiness, acousticness, and valence are the top features of the most influential artists. We also introduce the IRDI, an algorithm that provides an innovative approach to quantifying an artist’s influence by capturing the degree of dominance among their followers. This approach highlights influential artists who drive the evolution of music, setting trends and significantly inspiring a new generation of artists. The independent cascade model is further employed to explore the temporal dynamics of influence propagation across the entire musical network, highlighting how initial seeds of influence can spread contagiously through the network. This multidisciplinary approach provides a nuanced understanding of musical influence that refines existing methods and sheds light on influential trends and dynamics.
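
The independent cascade model mentioned above can be sketched in a few lines of Python. Assumptions: a directed edge u → v means u can influence v, every edge uses the same activation probability `p`, and the toy graph is hypothetical. The study's actual edge probabilities, seed selection, and the IRDI algorithm are not reproduced here.

```python
import random

def independent_cascade(graph, seeds, p=0.1, rng=None):
    """One run of the independent cascade model.

    graph: dict mapping node -> list of out-neighbours (u -> v: u can influence v).
    Each newly activated node gets exactly one chance to activate each
    still-inactive out-neighbour, succeeding with probability p.
    Returns the set of nodes active when the cascade stops.
    """
    if rng is None:
        rng = random.Random(0)
    active = set(seeds)
    frontier = list(seeds)
    while frontier:
        next_frontier = []
        for u in frontier:
            for v in graph.get(u, []):
                if v not in active and rng.random() < p:
                    active.add(v)
                    next_frontier.append(v)
        frontier = next_frontier
    return active

def expected_spread(graph, seeds, p=0.1, runs=1000, seed=0):
    # Monte Carlo estimate of the mean number of activated nodes.
    rng = random.Random(seed)
    total = sum(len(independent_cascade(graph, seeds, p, rng)) for _ in range(runs))
    return total / runs

# Hypothetical influence graph: artist A influenced B and C, and B influenced D.
toy = {"A": ["B", "C"], "B": ["D"], "C": [], "D": []}
print(expected_spread(toy, ["A"], p=0.5))
```

Because a single cascade is random, the spread from a given seed set is usually estimated by averaging over many runs, as `expected_spread` does; each pass of the `while` loop corresponds to one time step of propagation.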

Open Access Article

Toward Morphologic Atlasing of the Human Whole Brain at the Nanoscale

Big Data Cogn. Comput. 2023, 7(4), 179; https://doi.org/10.3390/bdcc7040179 - 01 Dec 2023

Abstract

Although neither a nanoscale dataset of the entire human brain nor a nanoscale human whole brain atlas has yet been produced, tremendous progress in neuroimaging and high-performance computing makes both feasible in the near future. Constructing a nanoscale atlas of the human whole brain poses several challenges; here, we address two of them: modeling the morphology of the brain at the nanoscale and designing a nanoscale brain atlas. A new nanoscale neuronal format is introduced to describe the data necessary and sufficient to model the entire human brain at the nanoscale, enabling calculations of the synaptome and connectome. The design of the nanoscale brain atlas covers design principles, content, architecture, navigation, functionality, and user interface. Three novel design principles are introduced to support navigation, exploration, and calculations: gross neuroanatomy-guided navigation of micro/nanoscale neuroanatomy; a movable and zoomable sampling volume of interest for navigation and exploration; and nanoscale data processing in a parallel-pipeline mode that exploits the parallelism resulting from decomposing gross neuroanatomy into structures and regions and nano neuroanatomy into neurons and synapses, enabling the distributed construction and continual enhancement of the nanoscale atlas. Numerous applications of this atlas can be contemplated, ranging from proofreading and continual multi-site extension to exploration, morphometric and network-related analyses, and knowledge discovery. To the best of my knowledge, this is the first proposed nanoscale model of neuronal morphology and the first attempt to design a human whole brain atlas at the nanoscale.

(This article belongs to the Special Issue Big Data System for Global Health)

Open Access Opinion

Artificial Intelligence in the Interpretation of Videofluoroscopic Swallow Studies: Implications and Advances for Speech–Language Pathologists

Big Data Cogn. Comput. 2023, 7(4), 178; https://doi.org/10.3390/bdcc7040178 - 28 Nov 2023

Abstract

Radiological imaging is an essential component of a swallowing assessment. Artificial intelligence (AI), especially deep learning (DL) models, has enhanced the efficiency and efficacy through which imaging is interpreted, and subsequently, it has important implications for swallow diagnostics and intervention planning. However, the

[...] Read more.

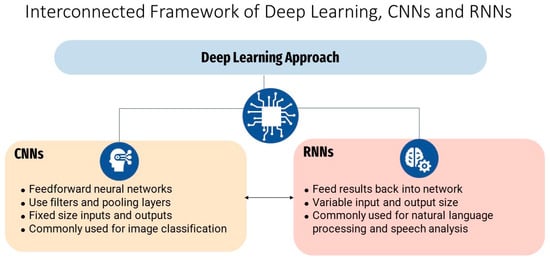

Radiological imaging is an essential component of a swallowing assessment. Artificial intelligence (AI), especially deep learning (DL) models, has enhanced the efficiency and efficacy with which imaging is interpreted and therefore has important implications for swallow diagnostics and intervention planning. However, the application of AI to the interpretation of videofluoroscopic swallow studies (VFSS) is still emerging. This review showcases the recent literature on the use of AI to interpret VFSS and highlights clinical implications for speech–language pathologists (SLPs). With a surge in AI research, there have been advances in dysphagia assessments. Several studies have demonstrated the successful implementation of DL algorithms to analyze VFSS. Notably, convolutional neural networks (CNNs), which involve training a multi-layered model to recognize specific image or video components, have been used to detect pertinent aspects of the swallowing process with high levels of precision. DL algorithms have the potential to streamline VFSS interpretation, improve efficiency and accuracy, and enable the precise interpretation of an instrumental dysphagia evaluation, which is especially advantageous when skilled clinicians are not readily accessible. Because AI can enhance the precision, speed, and depth of VFSS interpretation, SLPs can obtain a more comprehensive understanding of swallow physiology and deliver targeted, timely interventions tailored to the individual. This has practical applications for both clinical practice and dysphagia research. As this research area grows and AI technologies progress, the application of DL to VFSS interpretation is clinically beneficial and has the potential to transform dysphagia assessment and management.
With broader validation and interdisciplinary collaboration, AI-augmented VFSS interpretation will likely transform swallow evaluations and ultimately improve outcomes for individuals with dysphagia. However, despite AI’s potential to streamline imaging interpretation, practitioners still need to consider the challenges and limitations of AI implementation, including the need for large training datasets, issues of interpretability and adaptability, and the potential for bias.

(This article belongs to the Collection Machine Learning and Artificial Intelligence for Health Applications on Social Networks)

Open Access Editorial

Managing Cybersecurity Threats and Increasing Organizational Resilience

Big Data Cogn. Comput. 2023, 7(4), 177; https://doi.org/10.3390/bdcc7040177 - 22 Nov 2023

Abstract

Cyber security is high up on the agenda of senior managers in private and public sector organizations and is likely to remain so for the foreseeable future. [...]

(This article belongs to the Special Issue Managing Cybersecurity Threats and Increasing Organizational Resilience)

Open Access Article

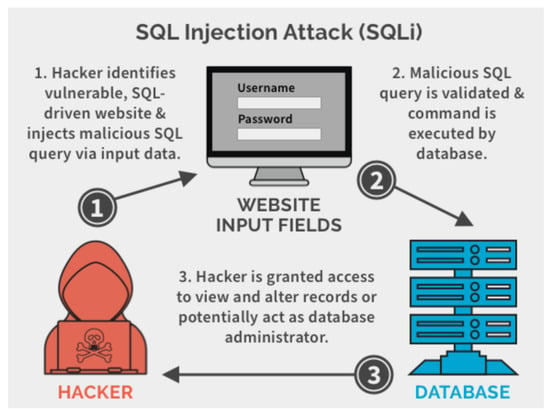

A New Approach to Data Analysis Using Machine Learning for Cybersecurity

Big Data Cogn. Comput. 2023, 7(4), 176; https://doi.org/10.3390/bdcc7040176 - 21 Nov 2023

Abstract

The internet has become an indispensable tool for organizations, permeating every facet of their operations. Virtually all companies use internet services for diverse purposes, including the digital storage of data in databases and on cloud platforms. Furthermore, the rising demand for software and applications has led to a widespread shift toward computer-based activities within the corporate landscape. However, this digital transformation has exposed the information technology (IT) infrastructures of these organizations to a heightened risk of cyber-attacks, endangering sensitive data. Consequently, organizations must identify and address vulnerabilities within their systems, with a primary focus on customer-facing websites and applications. This work aims to tackle this pressing issue by using data analysis tools, such as Power BI, to assess vulnerabilities within a client's application or website. A rigorous analysis of the data yields the insights needed to formulate effective remedial measures against potential attacks. Ultimately, the central goal of this research is to demonstrate that clients can establish a secure environment that shields their digital assets from potential attackers.

(This article belongs to the Special Issue Artificial Intelligence for Online Safety)

Open Access Article

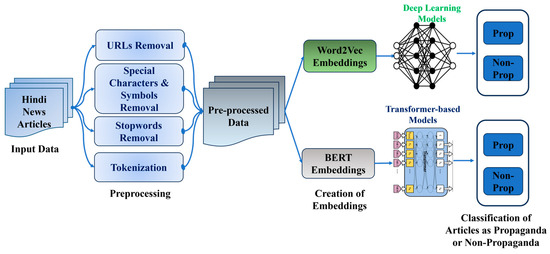

Empowering Propaganda Detection in Resource-Restraint Languages: A Transformer-Based Framework for Classifying Hindi News Articles

Big Data Cogn. Comput. 2023, 7(4), 175; https://doi.org/10.3390/bdcc7040175 - 15 Nov 2023

Cited by 2

Abstract

Misinformation, fake news, and various propaganda techniques are increasingly used in digital media. Propaganda is difficult to uncover because it systematically aims to influence people toward predetermined ends. While significant research has been reported on propaganda identification and classification in resource-rich languages such as English, much less effort has been devoted to resource-deprived languages like Hindi. The spread of propaganda in the Hindi news media motivated our attempt to devise an approach for the propaganda categorization of Hindi news articles. The unavailability of the necessary language tools makes propaganda classification in Hindi more challenging. This study proposes the use of deep learning and transformer-based approaches for Hindi computational propaganda classification. To address the lack of pretrained word embeddings in Hindi, Hindi Word2vec embeddings were created from the H-Prop-News corpus for feature extraction. Subsequently, three deep learning models, namely CNN (convolutional neural network), LSTM (long short-term memory), and Bi-LSTM (bidirectional long short-term memory), and four transformer-based models, namely multi-lingual BERT, Distil-BERT, Hindi-BERT, and Hindi-TPU-Electra, were evaluated. The experimental outcomes indicate that the multi-lingual BERT and Hindi-BERT models provide the best performance, with the highest F1 score of 84% on the test data. These results strongly support the efficacy of the proposed solution and indicate its appropriateness for propaganda classification.
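The abstract says Hindi Word2vec embeddings were trained on the H-Prop-News corpus but does not say whether the CBOW or skip-gram variant was used. Purely as an illustration, the sketch below shows the first step of skip-gram training: turning a tokenized sentence into (center, context) pairs within a fixed window. The window size and the example tokens are assumptions, and the actual embedding training (learning vectors that predict context words from center words) is not shown.

```python
from typing import List, Tuple


def skipgram_pairs(tokens: List[str], window: int = 2) -> List[Tuple[str, str]]:
    """Return (center, context) training pairs for a skip-gram model.

    Every token is paired with each neighbour at most `window`
    positions to its left or right.
    """
    pairs: List[Tuple[str, str]] = []
    for i, center in enumerate(tokens):
        start = max(0, i - window)
        stop = min(len(tokens), i + window + 1)
        for j in range(start, stop):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


# Hypothetical pre-tokenized Hindi fragment, roughly "the government ... new policy".
sentence = ["सरकार", "ने", "नई", "नीति"]
pairs = skipgram_pairs(sentence, window=1)
```

With `window=1` the four tokens yield six pairs, starting with `("सरकार", "ने")`; widening the window to 2 yields ten. Larger windows tend to capture broader topical similarity, smaller ones more syntactic similarity.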

(This article belongs to the Special Issue Advances in Natural Language Processing and Text Mining)

Open Access Article

Optimization of Cryptocurrency Algorithmic Trading Strategies Using the Decomposition Approach

Big Data Cogn. Comput. 2023, 7(4), 174; https://doi.org/10.3390/bdcc7040174 - 14 Nov 2023

Abstract

A cryptocurrency is a decentralized form of money that facilitates financial transactions using cryptographic processes. It can be thought of as a virtual currency or a payment mechanism for sending and receiving money online. Cryptocurrencies have gained wide market acceptance and developed rapidly over the past few years. Because the crypto-market is volatile, cryptocurrency trading involves a high level of risk. In this paper, a new normalized decomposition-based, multi-objective particle swarm optimization (N-MOPSO/D) algorithm is presented for cryptocurrency algorithmic trading. The aim of this algorithm is to help traders find the Litecoin trading strategies that best improve their outcomes. The proposed algorithm manages the trade-offs among three objectives: the return on investment, the Sortino ratio, and the number of trades. A hybrid weight assignment mechanism is also proposed. The algorithm was compared against the trading rules with their standard parameters, against MOPSO/D using normalized weighted Tchebycheff scalarization, and against MOEA/D. The proposed algorithm outperformed the counterpart algorithms on both benchmark and real-world problems, and the results show that it is very promising and stable under different market conditions. It maintained the best returns and risk during both training and testing with a moderate number of trades.
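The abstract names two quantities without defining them: the Sortino ratio (one of the three objectives) and normalized weighted Tchebycheff scalarization (used by the MOPSO/D baseline). The sketch below uses their common textbook forms, assuming a target return of 0 and objectives that are all minimized; it is not the paper's N-MOPSO/D normalization or its hybrid weight assignment, neither of which the abstract specifies. The candidate strategies, ideal and nadir points, and weight vector are hypothetical.

```python
import math
from typing import Dict, Sequence, Tuple


def sortino_ratio(returns: Sequence[float], target: float = 0.0) -> float:
    """Mean return in excess of `target`, divided by the downside deviation.

    The downside deviation averages squared shortfalls below `target`
    over all periods (periods at or above target contribute zero).
    """
    n = len(returns)
    excess = sum(r - target for r in returns) / n
    downside_sq = sum(min(0.0, r - target) ** 2 for r in returns) / n
    if downside_sq == 0.0:
        # No losing period: the ratio is unbounded if there is any excess return.
        return math.inf if excess > 0 else 0.0
    return excess / math.sqrt(downside_sq)


def normalized_tchebycheff(
    f: Sequence[float],
    weights: Sequence[float],
    ideal: Sequence[float],
    nadir: Sequence[float],
) -> float:
    """Normalized weighted Tchebycheff value of objective vector `f` (smaller is better).

    All objectives are treated as minimized. Dividing by (nadir - ideal)
    maps each objective onto [0, 1] when it lies between ideal and nadir,
    so a return fraction and a trade count become comparable.
    """
    return max(
        w * (fi - zi) / (zn - zi)
        for fi, w, zi, zn in zip(f, weights, ideal, nadir)
    )


# Hypothetical candidate strategies as (-ROI, -Sortino, number of trades):
# ROI and Sortino are negated so that every objective is minimized.
candidates: Dict[str, Tuple[float, float, float]] = {
    "A": (-0.10, -0.5, 40.0),
    "B": (-0.15, -0.2, 80.0),
}
ideal = (-0.20, -1.0, 10.0)  # best value seen per objective (assumed)
nadir = (0.0, 0.0, 100.0)    # worst value seen per objective (assumed)
weights = (0.5, 0.3, 0.2)    # weight vector of one decomposition subproblem

# In a decomposition approach, each weight vector defines one scalar subproblem;
# its preferred solution is the candidate with the smallest scalarized value.
best = min(
    candidates,
    key=lambda k: normalized_tchebycheff(candidates[k], weights, ideal, nadir),
)
```

Here strategy A scores 0.25 (dominated by its ROI term) and B scores 0.24 (dominated by its Sortino term), so this subproblem prefers B. A different weight vector can prefer A, which is how decomposition spreads solutions along the trade-off front.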

(This article belongs to the Special Issue Applied Data Science for Social Good)

Open Access Article

The Semantic Adjacency Criterion in Time Intervals Mining

Big Data Cogn. Comput. 2023, 7(4), 173; https://doi.org/10.3390/bdcc7040173 - 09 Nov 2023

Abstract

We propose the Semantic Adjacency Criterion (SAC), a new pruning constraint for mining frequent temporal patterns to be used as classification and prediction features. SAC filters out temporal patterns that contain potentially semantically contradictory components, exploiting each medical domain's knowledge. We have defined three SAC versions and tested them within three medical domains (oncology, hepatitis, diabetes) and a frequent-temporal-pattern discovery framework. Previously, we had shown that using SAC enhances the repeatability of discovering the same temporal patterns in similar proportions in different patient groups within the same clinical domain. Here, we focus on SAC's computational implications for pattern discovery, and for classification and prediction using the discovered patterns as features, with four machine-learning methods: Random Forests, Naïve Bayes, SVM, and Logistic Regression. Across all medical domains and classification methods, using SAC significantly reduced both the number of discovered temporal patterns (by up to 97%) and the runtime of the discovery process (by up to 98%). Nevertheless, when the highly reduced set of only semantically transparent patterns was used as features, the resulting classification and prediction models performed at least as well as the models built from the complete temporal-pattern set.

(This article belongs to the Special Issue Data Science in Health Care)

Open Access Article

Evaluation of Short-Term Rockburst Risk Severity Using Machine Learning Methods

Big Data Cogn. Comput. 2023, 7(4), 172; https://doi.org/10.3390/bdcc7040172 - 07 Nov 2023

Abstract

In deep engineering, rockburst hazards frequently result in injuries, fatalities, and the destruction of contiguous structures. Because rockbursts are complex, predicting the severity of rockburst damage (intensity) without the aid of computer models is challenging. Although various predictive models exist, effectively identifying risk severity in imbalanced data remains crucial. Ensemble boosting methods are often better suited to dealing with unequally distributed classes than classical models are. Therefore, this paper employs the ensemble categorical gradient boosting (CGB) method to predict short-term rockburst risk severity. After data collection, principal component analysis (PCA) was applied to avoid the redundancies caused by multi-collinearity. The CGB model was then trained on the PCA-transformed data, with optimal hyper-parameters selected by grid search, and used to predict the test samples; its performance was evaluated using precision, recall, and F1 score. The PCA-CGB model achieved better predictions than either the single CGB model or conventional boosting methods. It achieved an F1 score of 0.8952, indicating that the proposed model is robust in predicting damage severity on an imbalanced dataset. This work provides practical guidance for risk management.
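The abstract evaluates the model with precision, recall, and F1 on imbalanced data but does not say how per-class scores were averaged into the reported 0.8952. As an illustration only, the stdlib sketch below computes per-class precision, recall, and F1 and their macro average, which weights a rare severity class as heavily as a common one. The class names and toy labels are hypothetical, not data from the paper.

```python
from collections import Counter
from typing import Dict, Sequence, Tuple


def per_class_prf(
    y_true: Sequence[str], y_pred: Sequence[str]
) -> Dict[str, Tuple[float, float, float]]:
    """Precision, recall and F1 for every class seen in either sequence."""
    tp: Counter = Counter()
    fp: Counter = Counter()
    fn: Counter = Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # class p was predicted, but wrongly
            fn[t] += 1  # true class t was missed
    scores: Dict[str, Tuple[float, float, float]] = {}
    for c in sorted(set(y_true) | set(y_pred)):
        prec = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        rec = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        scores[c] = (prec, rec, f1)
    return scores


def macro_f1(y_true: Sequence[str], y_pred: Sequence[str]) -> float:
    """Unweighted mean of per-class F1, so rare classes count as much as common ones."""
    scores = per_class_prf(y_true, y_pred)
    return sum(f1 for _, _, f1 in scores.values()) / len(scores)


# Hypothetical severity labels for seven test samples (imbalanced: mostly "none").
y_true = ["none", "none", "none", "none", "light", "light", "strong"]
y_pred = ["none", "none", "none", "light", "light", "none", "none"]
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
macro = macro_f1(y_true, y_pred)
```

On these toy labels accuracy is 4/7 (about 0.57), while macro F1 is about 0.39 because the rare "strong" class is never predicted correctly. This gap is why F1-type metrics are preferred over plain accuracy when severity classes are unequally distributed.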

(This article belongs to the Special Issue Recent Advances and Applications of Big Data and Distributed Computing Systems)

Topics

Topic in

AI, BDCC, Economies, IJFS, JTAER, Sustainability

Artificial Intelligence Applications in Financial Technology

Topic Editors: Albert Y.S. Lam, Yanhui Geng

Deadline: 1 March 2024

Topic in

BDCC, Economies, Information, Remote Sensing, Sustainability

Big Data and Artificial Intelligence, 2nd Volume

Topic Editors: Miltiadis D. Lytras, Andreea Claudia Serban

Deadline: 31 March 2024

Topic in

AI, Algorithms, BDCC, Future Internet, Informatics, Information, Languages, Publications

AI Chatbots: Threat or Opportunity?

Topic Editors: Antony Bryant, Roberto Montemanni, Min Chen, Paolo Bellavista, Kenji Suzuki, Jeanine Treffers-Daller

Deadline: 30 April 2024

Topic in

Algorithms, BDCC, BioMedInformatics, Information, Mathematics

Machine Learning Empowered Drug Screen

Topic Editors: Teng Zhou, Jiaqi Wang, Youyi Song

Deadline: 31 August 2024

Special Issues

Special Issue in

BDCC

Deep Network Learning and Its Applications

Guest Editors: Guarino Alfonso, Rocco Zaccagnino, Emiliano Del Gobbo

Deadline: 31 January 2024

Special Issue in

BDCC

Revolutionizing Healthcare: Exploring the Latest Advances in Digital Health Technology

Guest Editors: Hossein Hassani, Steve MacFeely

Deadline: 29 February 2024

Special Issue in

BDCC

Sustainable Big Data Analytics and Machine Learning Technologies

Guest Editor: Jenq-Haur Wang

Deadline: 15 March 2024

Special Issue in

BDCC

Privacy-Enhancing Technologies of Data for Sustainable and Secure Cooperation

Guest Editors: Yi Sun, Shujie Yang

Deadline: 30 March 2024

Topical Collections

Topical Collection in

BDCC

Machine Learning and Artificial Intelligence for Health Applications on Social Networks

Collection Editor: Carmela Comito