Journal Description

Econometrics

Econometrics is an international, peer-reviewed, open access journal on econometric modeling and forecasting, as well as new advances in econometrics theory, and is published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), EconLit, EconBiz, RePEc, and other databases.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 53.8 days after submission; acceptance to publication is undertaken in 7.2 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 1.5 (2022); 5-Year Impact Factor: 1.7 (2022)

Latest Articles

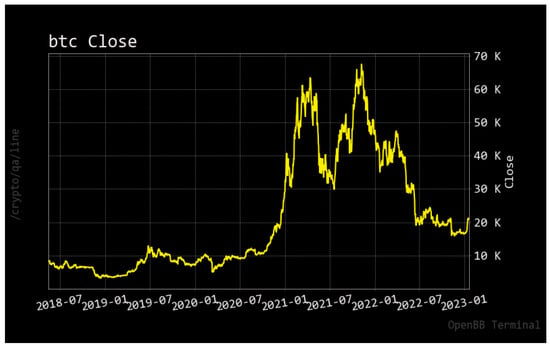

Is Monetary Policy a Driver of Cryptocurrencies? Evidence from a Structural Break GARCH-MIDAS Approach

Econometrics 2024, 12(1), 2; https://doi.org/10.3390/econometrics12010002 - 05 Jan 2024

Abstract

►

Show Figures

The introduction of Bitcoin as a distributed peer-to-peer digital cash in 2008 and its first recorded real transaction in 2010 served the function of a medium of exchange, transforming the financial landscape by offering a decentralized, peer-to-peer alternative to conventional monetary systems. This

[...] Read more.

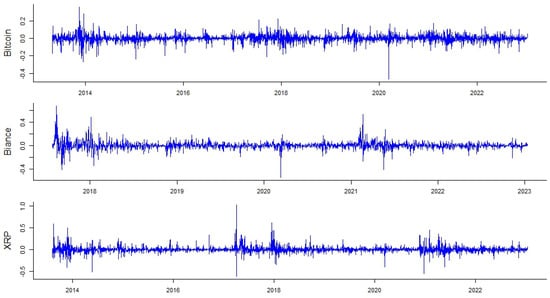

The introduction of Bitcoin as a distributed peer-to-peer digital cash in 2008 and its first recorded real transaction in 2010 served the function of a medium of exchange, transforming the financial landscape by offering a decentralized, peer-to-peer alternative to conventional monetary systems. This study investigates the intricate relationship between cryptocurrencies and monetary policy, with a particular focus on their long-term volatility dynamics. We extend the GARCH-MIDAS (Mixed Data Sampling) model to an SB-GARCH-MIDAS (Structural Break Mixed Data Sampling) model to analyze the daily returns of three prominent cryptocurrencies (Bitcoin, Binance Coin, and XRP) alongside monthly monetary policy data from the USA and South Africa, allowing for the potential presence of a structural break in monetary policy, which provided us with two GARCH-MIDAS models. The most recent observation in every sample is dated 30 June 2022, although the sample periods differ because of differences in cryptocurrency data availability. Our research incorporates model confidence set (MCS) procedures and assesses model performance using various metrics, including AIC, BIC, MSE, and QLIKE, supplemented by comprehensive residual diagnostics. Notably, our analysis reveals that the SB-GARCH-MIDAS model outperforms others in forecasting cryptocurrency volatility. Furthermore, we uncover that, in contrast to their younger counterparts, the long-term volatility of older cryptocurrencies is sensitive to structural breaks in exogenous variables. Our study sheds light on the diversification within the cryptocurrency space, shaped by technological characteristics and temporal considerations, and provides practical insights, emphasizing the importance of incorporating monetary policy in assessing cryptocurrency volatility. The implications of our study extend to portfolio management with dynamic consideration, offering valuable insights for investors and decision-makers, which underscores the significance of considering both cryptocurrency types and the economic context of host countries.
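
As a rough illustration of two of the forecast-evaluation metrics named in this abstract, the sketch below computes MSE and one common form of the QLIKE loss for variance forecasts. The exact QLIKE variant used in the article is not stated here, so the formula (mean of log(f) + r/f) is an assumption, and the function names and numbers are hypothetical.

```python
import math


def mse_loss(realized_var, forecast_var):
    """Mean squared error between a variance proxy and variance forecasts."""
    pairs = list(zip(realized_var, forecast_var))
    return sum((r - f) ** 2 for r, f in pairs) / len(pairs)


def qlike_loss(realized_var, forecast_var):
    """QLIKE loss, assumed form: mean of log(f) + r / f (forecasts must be > 0)."""
    pairs = list(zip(realized_var, forecast_var))
    return sum(math.log(f) + r / f for r, f in pairs) / len(pairs)


# Hypothetical illustrative numbers, not data from the article.
realized = [1.0, 2.0, 4.0]   # variance proxy, e.g. squared returns
model_a = [1.0, 2.0, 4.0]    # forecasts that match the proxy exactly
model_b = [2.0, 1.0, 2.0]    # forecasts that miss in both directions

print("MSE  :", mse_loss(realized, model_a), mse_loss(realized, model_b))
print("QLIKE:", qlike_loss(realized, model_a), qlike_loss(realized, model_b))
```

Under both losses the lower value indicates the better forecast. QLIKE penalizes under-prediction of variance more heavily than over-prediction, which is one reason it is often reported alongside MSE.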

Full article

Open Access Editorial

Publisher’s Note: Econometrics—A New Era for a Well-Established Journal

Econometrics 2024, 12(1), 1; https://doi.org/10.3390/econometrics12010001 - 28 Dec 2023

Abstract

Throughout its lifespan, a journal goes through many phases—and Econometrics (Econometrics Homepage n [...]

Full article

Open Access Article

Multistep Forecast Averaging with Stochastic and Deterministic Trends

Econometrics 2023, 11(4), 28; https://doi.org/10.3390/econometrics11040028 - 15 Dec 2023

Abstract

►▼

Show Figures

This paper presents a new approach to constructing multistep combination forecasts in a nonstationary framework with stochastic and deterministic trends. Existing forecast combination approaches in the stationary setup typically target the in-sample asymptotic mean squared error (AMSE), relying on its approximate equivalence with

[...] Read more.

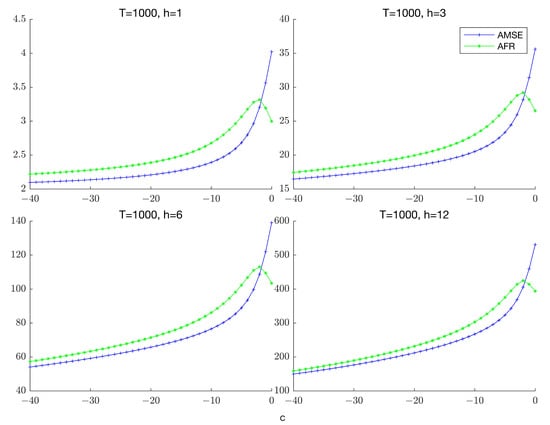

This paper presents a new approach to constructing multistep combination forecasts in a nonstationary framework with stochastic and deterministic trends. Existing forecast combination approaches in the stationary setup typically target the in-sample asymptotic mean squared error (AMSE), relying on its approximate equivalence with the asymptotic forecast risk (AFR). Such equivalence, however, breaks down in a nonstationary setup. This paper develops combination forecasts based on minimizing an accumulated prediction errors (APE) criterion that directly targets the AFR and remains valid whether the time series is stationary or not. We show that the performance of APE-weighted forecasts is close to that of the optimal, infeasible combination forecasts. Simulation experiments are used to demonstrate the finite sample efficacy of the proposed procedure relative to Mallows/Cross-Validation weighting schemes that target the AMSE, and to underscore the importance of accounting for both persistence and lag order uncertainty. An application to forecasting US macroeconomic time series confirms the simulation findings and illustrates the benefits of employing the APE criterion for real as well as nominal variables at both short and long horizons. A practical implication of our analysis is that the degree of persistence can play an important role in the choice of combination weights.
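
The idea of choosing combination weights by minimizing accumulated out-of-sample prediction errors can be sketched for two competing forecasts. This is only a simplified illustration under stated assumptions: the forecast sequences are taken as given (in practice they would come from recursively estimated models), the weight is found by grid search, and the function names and numbers are hypothetical rather than the article's procedure.

```python
def accumulated_prediction_error(actuals, forecasts_a, forecasts_b, weight):
    """Sum of squared errors of the combined forecast w*a + (1 - w)*b."""
    return sum(
        (y - (weight * fa + (1.0 - weight) * fb)) ** 2
        for y, fa, fb in zip(actuals, forecasts_a, forecasts_b)
    )


def ape_weight(actuals, forecasts_a, forecasts_b, grid_size=101):
    """Grid-search the weight in [0, 1] that minimizes the accumulated error."""
    grid = [i / (grid_size - 1) for i in range(grid_size)]
    return min(
        grid,
        key=lambda w: accumulated_prediction_error(
            actuals, forecasts_a, forecasts_b, w
        ),
    )


# Hypothetical numbers: model A over-predicts by 1, model B under-predicts by 1.
actuals = [1.0, 2.0, 3.0]
forecasts_a = [2.0, 3.0, 4.0]
forecasts_b = [0.0, 1.0, 2.0]

best_weight = ape_weight(actuals, forecasts_a, forecasts_b)
print("weight on model A:", best_weight)
```

Because the two hypothetical forecasts err by equal amounts in opposite directions, equal weighting cancels the errors in this toy case.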

Full article

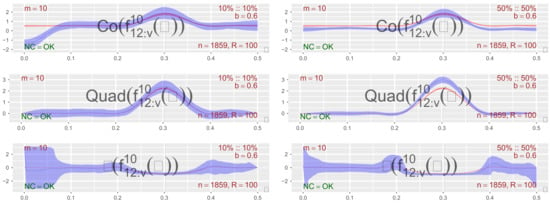

Figure 1

Open AccessArticle

Liquidity and Business Cycles—With Occasional Disruptions

Econometrics 2023, 11(4), 27; https://doi.org/10.3390/econometrics11040027 - 12 Dec 2023

Abstract

►▼

Show Figures

Some financial disruptions that started in California, U.S., in March 2023, resulting in the closure of several medium-size U.S. banks, shed new light on the role of liquidity in business cycle dynamics. In the normal path of the business cycle, liquidity and output

[...] Read more.

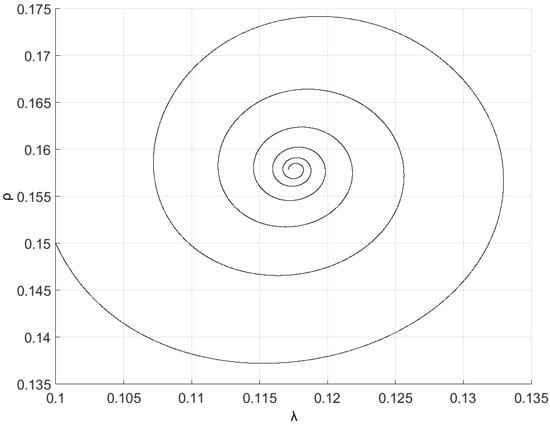

Some financial disruptions that started in California, U.S., in March 2023, resulting in the closure of several medium-size U.S. banks, shed new light on the role of liquidity in business cycle dynamics. In the normal path of the business cycle, liquidity and output mutually interact. Small shocks generally lead to mean reversion through market forces, as a low degree of liquidity dissipation does not significantly disrupt the economic dynamics. However, larger shocks and greater liquidity dissipation arising from runs on financial institutions and contagion effects can trigger tipping points, financial disruptions, and economic downturns. The latter poses severe challenges for Central Banks, which during normal times, usually maintain a hands-off approach with soft regulation and monitoring, allowing the market to operate. However, in severe times of liquidity dissipation, they must swiftly restore liquidity flows and rebuild trust in stability to avoid further disruptions and meltdowns. In this paper, we present a nonlinear model of the liquidity–macro interaction and econometrically explore those types of dynamic features with data from the U.S. economy. Guided by a theoretical model, we use nonlinear econometric methods of a Smooth Transition Regression type to study those features, which provide and suggest further regulation and monitoring guidelines and institutional enforcement of rules.

Full article

Open Access Article

When It Counts—Econometric Identification of the Basic Factor Model Based on GLT Structures

Econometrics 2023, 11(4), 26; https://doi.org/10.3390/econometrics11040026 - 20 Nov 2023

Cited by 1

Abstract

Despite the popularity of factor models with simple loading matrices, little attention has been given to formally address the identifiability of these models beyond standard rotation-based identification such as the positive lower triangular (PLT) constraint. To fill this gap, we review the advantages

[...] Read more.

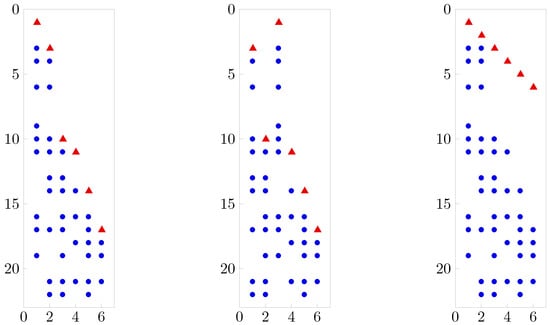

Despite the popularity of factor models with simple loading matrices, little attention has been given to formally address the identifiability of these models beyond standard rotation-based identification such as the positive lower triangular (PLT) constraint. To fill this gap, we review the advantages of variance identification in simple factor analysis and introduce the generalized lower triangular (GLT) structures. We show that the GLT assumption is an improvement over PLT without compromise: GLT is also unique but, unlike PLT, a non-restrictive assumption. Furthermore, we provide a simple counting rule for variance identification under GLT structures, and we demonstrate that within this model class, the unknown number of common factors can be recovered in an exploratory factor analysis. Our methodology is illustrated for simulated data in the context of post-processing posterior draws in sparse Bayesian factor analysis.

Full article

(This article belongs to the Special Issue High-Dimensional Time Series in Macroeconomics and Finance)

Open Access Article

On the Proper Computation of the Hausman Test Statistic in Standard Linear Panel Data Models: Some Clarifications and New Results

Econometrics 2023, 11(4), 25; https://doi.org/10.3390/econometrics11040025 - 08 Nov 2023

Abstract

►▼

Show Figures

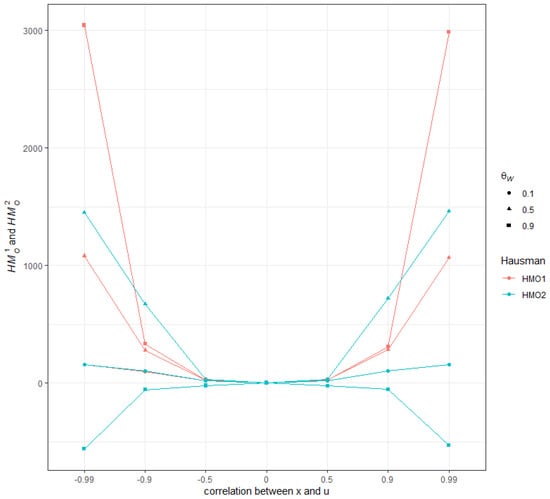

We provide new analytical results for the implementation of the Hausman specification test statistic in a standard panel data model, comparing the version based on the estimators computed from the untransformed random effects model specification under Feasible Generalized Least Squares and the one

[...] Read more.

We provide new analytical results for the implementation of the Hausman specification test statistic in a standard panel data model, comparing the version based on the estimators computed from the untransformed random effects model specification under Feasible Generalized Least Squares and the one computed from the quasi-demeaned model estimated by Ordinary Least Squares. We show that the quasi-demeaned model cannot provide a reliable magnitude when implementing the Hausman test in a finite sample setting, although it is the most common approach used to produce the test statistic in econometric software. The difference between the Hausman statistics computed under the two methods can be substantial and even lead to opposite conclusions for the test of orthogonality between the regressors and the individual-specific effects. Furthermore, this difference remains important even with large cross-sectional dimensions as it mainly depends on the within-between structure of the regressors and on the presence of a significant correlation between the individual effects and the covariates in the data. We propose to supplement the test outcomes that are provided in the main econometric software packages with some metrics to address the issue at hand.
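
For orientation, the textbook Hausman contrast between fixed effects and random effects estimates can be sketched for a single regressor. This is not the article's proposed computation or its supplementary metrics; the scalar formula, the function name, and the input values are assumptions chosen for illustration.

```python
def hausman_scalar(b_fe, b_re, var_fe, var_re):
    """Textbook Hausman statistic for one coefficient.

    H = (b_fe - b_re)^2 / (var_fe - var_re), compared with a chi-square(1)
    critical value. In finite samples the variance difference can be
    non-positive, in which case the statistic is not well defined.
    """
    diff_var = var_fe - var_re
    if diff_var <= 0:
        raise ValueError("variance difference is not positive")
    return (b_fe - b_re) ** 2 / diff_var


CHI2_1_CRIT_5PCT = 3.841  # 5% critical value of the chi-square(1) distribution

# Hypothetical estimates, not results from the article.
h_stat = hausman_scalar(b_fe=0.50, b_re=0.40, var_fe=0.004, var_re=0.002)
reject_random_effects = h_stat > CHI2_1_CRIT_5PCT
print(round(h_stat, 3), reject_random_effects)
```

The explicit check on the variance difference makes visible one way in which finite-sample computations of the statistic can fail.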

Full article

Open Access Article

Dirichlet Process Log Skew-Normal Mixture with a Missing-at-Random-Covariate in Insurance Claim Analysis

Econometrics 2023, 11(4), 24; https://doi.org/10.3390/econometrics11040024 - 12 Oct 2023

Abstract

►▼

Show Figures

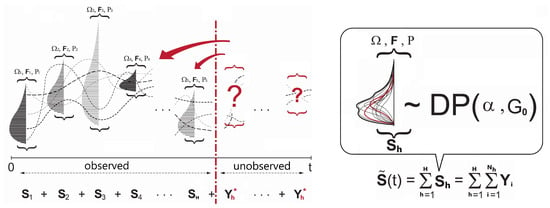

In actuarial practice, the modeling of total losses tied to a certain policy is a nontrivial task due to complex distributional features. In the recent literature, the application of the Dirichlet process mixture for insurance loss has been proposed to eliminate the risk

[...] Read more.

In actuarial practice, the modeling of total losses tied to a certain policy is a nontrivial task due to complex distributional features. In the recent literature, the application of the Dirichlet process mixture for insurance loss has been proposed to eliminate the risk of model misspecification biases. However, the effect of covariates as well as missing covariates in the modeling framework is rarely studied. In this article, we propose novel connections among a covariate-dependent Dirichlet process mixture, log-normal convolution, and missing covariate imputation. As a generative approach, our framework models the joint of outcome and covariates, which allows us to impute missing covariates under the assumption of missingness at random. The performance is assessed by applying our model to several insurance datasets of varying size and data missingness from the literature, and the empirical results demonstrate the benefit of our model compared with the existing actuarial models, such as the Tweedie-based generalized linear model, generalized additive model, or multivariate adaptive regression spline.

Full article

Open Access Article

A New Matrix Statistic for the Hausman Endogeneity Test under Heteroskedasticity

Econometrics 2023, 11(4), 23; https://doi.org/10.3390/econometrics11040023 - 10 Oct 2023

Abstract


We derive a new matrix statistic for the Hausman test for endogeneity in cross-sectional Instrumental Variables estimation, that incorporates heteroskedasticity in a natural way and does not use a generalized inverse. A Monte Carlo study examines the performance of the statistic for different heteroskedasticity-robust variance estimators and different skedastic situations. We find that the test statistic performs well as regards empirical size in almost all cases; however, as regards empirical power, how one corrects for heteroskedasticity matters. We also compare its performance with that of the Wald statistic from the augmented regression setup that is often used for the endogeneity test, and we find that the choice between them may depend on the desired significance level of the test.

Full article

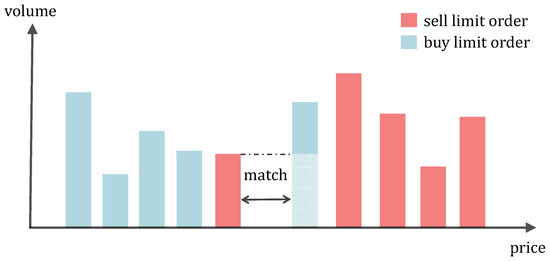

Open AccessArticle

Detecting Pump-and-Dumps with Crypto-Assets: Dealing with Imbalanced Datasets and Insiders’ Anticipated Purchases

Econometrics 2023, 11(3), 22; https://doi.org/10.3390/econometrics11030022 - 30 Aug 2023

Abstract

►▼

Show Figures

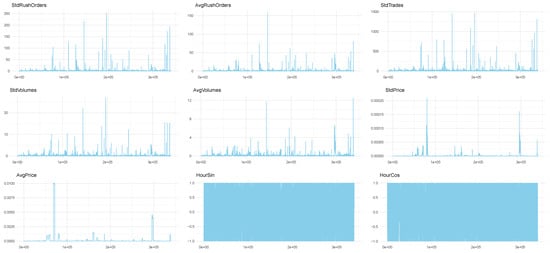

Detecting pump-and-dump schemes involving cryptoassets with high-frequency data is challenging due to imbalanced datasets and the early occurrence of unusual trading volumes. To address these issues, we propose constructing synthetic balanced datasets using resampling methods and flagging a pump-and-dump from the moment of

[...] Read more.

Detecting pump-and-dump schemes involving cryptoassets with high-frequency data is challenging due to imbalanced datasets and the early occurrence of unusual trading volumes. To address these issues, we propose constructing synthetic balanced datasets using resampling methods and flagging a pump-and-dump from the moment of public announcement up to 60 min beforehand. We validated our proposals using data from Pumpolymp and the CryptoCurrency eXchange Trading Library to identify 351 pump signals relative to the Binance crypto exchange in 2021 and 2022. We found that the most effective approach was using the original imbalanced dataset with pump-and-dumps flagged 60 min in advance, together with a random forest model with data segmented into 30-s chunks and regressors computed with a moving window of 1 h. Our analysis revealed that a better balance between sensitivity and specificity could be achieved by simply selecting an appropriate probability threshold, such as setting the threshold close to the observed prevalence in the original dataset. Resampling methods were useful in some cases, but threshold-independent measures were not affected. Moreover, detecting pump-and-dumps in real-time involves high-dimensional data, and the use of resampling methods to build synthetic datasets can be time-consuming, making them less practical.
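
The point about choosing a probability threshold near the observed prevalence, rather than the default 0.5, can be illustrated with a small sketch. The labels and predicted probabilities below are hypothetical and do not come from the article's data or its random forest model.

```python
def sensitivity_specificity(labels, scores, threshold):
    """Return (sensitivity, specificity) when flagging scores >= threshold."""
    tp = sum(1 for y, s in zip(labels, scores) if y == 1 and s >= threshold)
    fn = sum(1 for y, s in zip(labels, scores) if y == 1 and s < threshold)
    tn = sum(1 for y, s in zip(labels, scores) if y == 0 and s < threshold)
    fp = sum(1 for y, s in zip(labels, scores) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


# Hypothetical imbalanced sample: one pump event among ten observations.
labels = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
scores = [0.30, 0.05, 0.02, 0.08, 0.12, 0.01, 0.03, 0.04, 0.06, 0.07]

prevalence = sum(labels) / len(labels)  # share of positives, here 0.1

at_default = sensitivity_specificity(labels, scores, 0.5)
at_prevalence = sensitivity_specificity(labels, scores, prevalence)
print("threshold 0.5       :", at_default)
print("threshold prevalence:", at_prevalence)
```

In this toy sample the default threshold misses the single event entirely, while the prevalence-based threshold detects it at the cost of one false alarm.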

Full article

Open Access Article

Competition–Innovation Nexus: Product vs. Process, Does It Matter?

Econometrics 2023, 11(3), 21; https://doi.org/10.3390/econometrics11030021 - 25 Aug 2023

Abstract


I study the relationship between competition and innovation, focusing on the distinction between product and process innovations. By considering product innovation, I expand upon earlier research on the topic of the relationship between competition and innovation, which focused on process innovations. New products allow firms to differentiate themselves from one another. I demonstrate that the competition level that creates the most innovation incentive is higher for process innovation than product innovation. I also provide empirical evidence that supports these results. Using the community innovation survey, I first show that an inverted U-shape characterizes the relationship between competition and both process and product innovations. The optimal competition level for promoting innovation is higher for process innovation.

Full article

Open Access Article

Locationally Varying Production Technology and Productivity: The Case of Norwegian Farming

Econometrics 2023, 11(3), 20; https://doi.org/10.3390/econometrics11030020 - 18 Aug 2023

Abstract

►▼

Show Figures

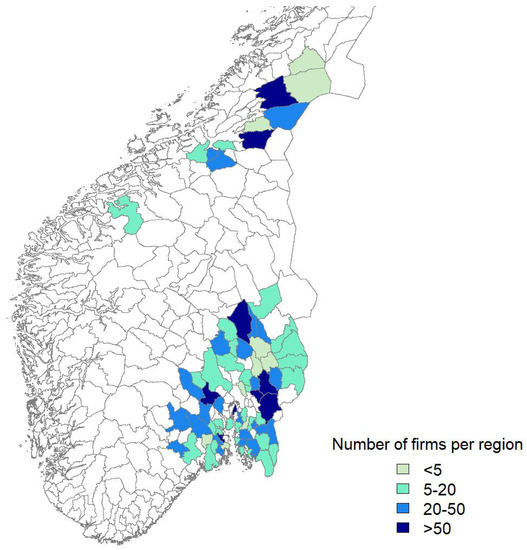

In this study, we leverage geographical coordinates and firm-level panel data to uncover variations in production across different locations. Our approach involves using a semiparametric proxy variable regression estimator, which allows us to define and estimate a customized production function for each firm

[...] Read more.

In this study, we leverage geographical coordinates and firm-level panel data to uncover variations in production across different locations. Our approach involves using a semiparametric proxy variable regression estimator, which allows us to define and estimate a customized production function for each firm and its corresponding location. By employing kernel methods, we estimate the nonparametric functions that determine the model’s parameters based on latitude and longitude. Furthermore, our model incorporates productivity components that consider various factors that influence production. Unlike spatially autoregressive-type production functions that assume a uniform technology across all locations, our approach estimates technology and productivity at both the firm and location levels, taking into account their specific characteristics. To handle endogenous regressors, we incorporate a proxy variable identification technique, distinguishing our method from geographically weighted semiparametric regressions. To investigate the heterogeneity in production technology and productivity among Norwegian grain farmers, we apply our model to a sample of farms using panel data spanning from 2001 to 2020. Through this analysis, we provide empirical evidence of regional variations in both technology and productivity among Norwegian grain farmers. Finally, we discuss the suitability of our approach for addressing the heterogeneity in this industry.

Full article

Open Access Article

Tracking ‘Pure’ Systematic Risk with Realized Betas for Bitcoin and Ethereum

Econometrics 2023, 11(3), 19; https://doi.org/10.3390/econometrics11030019 - 10 Aug 2023

Cited by 1

Abstract


Using the capital asset pricing model, this article critically assesses the relative importance of computing ‘realized’ betas from high-frequency returns for Bitcoin and Ethereum—the two major cryptocurrencies—against their classic counterparts using the 1-day and 5-day return-based betas. The sample includes intraday data from 15 May 2018 until 17 January 2023. The microstructure noise is present until 4 min in the BTC and ETH high-frequency data. Therefore, we opt for a conservative choice with a 60 min sampling frequency. Considering 250 trading days as a rolling-window size, we obtain rolling betas < 1 for Bitcoin and Ethereum with respect to the CRIX market index, which could enhance portfolio diversification (at the expense of maximizing returns). We flag the minimal tracking errors at the hourly and daily frequencies. The dispersion of rolling betas is higher for the weekly frequency and is concentrated towards values of

Open Access Article

Estimation of Realized Asymmetric Stochastic Volatility Models Using Kalman Filter

Econometrics 2023, 11(3), 18; https://doi.org/10.3390/econometrics11030018 - 31 Jul 2023

Abstract


Despite the growing interest in realized stochastic volatility models, their estimation techniques, such as simulated maximum likelihood (SML), are computationally intensive. Based on the realized volatility equation, this study demonstrates that, in a finite sample, the quasi-maximum likelihood estimator based on the Kalman filter is competitive with the two-step SML estimator, which is less efficient than the SML estimator. Regarding empirical results for the S&P 500 index, the quasi-likelihood ratio tests favored the two-factor realized asymmetric stochastic volatility model with the standardized t distribution among alternative specifications, and an analysis on out-of-sample forecasts prefers the realized stochastic volatility models, rejecting the model without the realized volatility measure. Furthermore, the forecasts of alternative RSV models are statistically equivalent for the data covering the global financial crisis.

Full article

Open AccessArticle

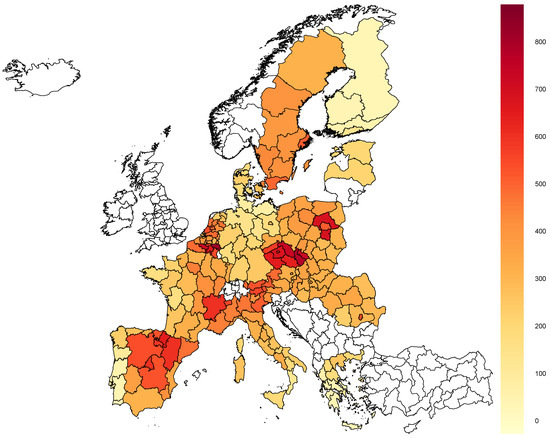

Socio-Economic and Demographic Factors Associated with COVID-19 Mortality in European Regions: Spatial Econometric Analysis

Econometrics 2023, 11(2), 17; https://doi.org/10.3390/econometrics11020017 - 20 Jun 2023

Abstract


In some NUTS 2 (Nomenclature of Territorial Units for Statistics) regions of Europe, the COVID-19 pandemic has triggered an increase in mortality of several dozen percent, and of only a few percent in others. Based on the data on 189 regions from 19 European countries, we identified factors responsible for these differences, both intra- and internationally. Due to the spatial nature of the virus diffusion and to account for unobservable country-level and sub-national characteristics, we used spatial econometric tools to estimate two types of models, explaining (i) the number of cases per 10,000 inhabitants and (ii) the percentage increase in the number of deaths compared to the 2016–2019 average in individual regions (mostly NUTS 2) in 2020. We used two weight matrices simultaneously, accounting for both types of spatial autocorrelation: linked to geographical proximity and adherence to the same country. For the feature selection, we used Bayesian Model Averaging. The number of reported cases is negatively correlated with the share of risk groups in the population (60+ years old, older people reporting chronic lower respiratory disease, and high blood pressure) and the level of society’s belief that the positive health effects of restrictions outweighed the economic losses. Furthermore, it is positively correlated with GDP per capita (PPS) and the percentage of people employed in industry. On the contrary, the mortality (per number of infections) has been limited through high-quality healthcare. Additionally, we noticed that the later the pandemic first hit a region, the lower the death toll there was, even controlling for the number of infections.

Full article

(This article belongs to the Special Issue Health Econometrics)

Open Access Feature Paper Article

Skill Mismatch, Nepotism, Job Satisfaction, and Young Females in the MENA Region

Econometrics 2023, 11(2), 16; https://doi.org/10.3390/econometrics11020016 - 12 Jun 2023

Cited by 1

Abstract


Skills utilization is an important factor affecting labor productivity and job satisfaction. This paper examines the effects of skills mismatch, nepotism, and gender discrimination on wages and job satisfaction in MENA workplaces. Gender discrimination implies social costs for firms due to higher turnover rates and lower retention levels. Young females suffer from this disproportionately more than their male counterparts, resulting in a wider gender gap in the labor market at multiple levels. Therefore, we find that the skill mismatch problem appears to be more significant among specific demographic groups, such as females, immigrants, and ethnic minorities; it is also negatively correlated with job satisfaction and wages. We bridge the literature gap on youth skill mismatch’s main determinants, including nepotism, by showing evidence from some developing countries. Given the implied social costs associated with these practices and their impact on the labor market, we have compiled a list of policy recommendations that the government and relevant stakeholders should take to reduce these problems in the workplace. Therefore, we provide a guide to address MENA’s skill mismatch and improve overall job satisfaction.

Full article

Open AccessArticle

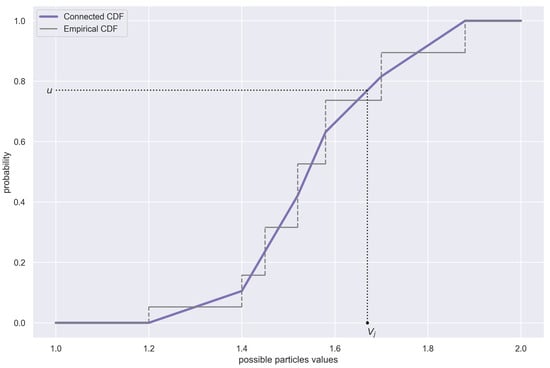

Parameter Estimation of the Heston Volatility Model with Jumps in the Asset Prices

Econometrics 2023, 11(2), 15; https://doi.org/10.3390/econometrics11020015 - 02 Jun 2023

Cited by 1

Abstract


The parametric estimation of stochastic differential equations (SDEs) has been the subject of intense studies already for several decades. The Heston model, for instance, is based on two coupled SDEs and is often used in financial mathematics for the dynamics of asset prices and their volatility. Calibrating it to real data would be very useful in many practical scenarios. It is very challenging, however, since the volatility is not directly observable. In this paper, a complete estimation procedure of the Heston model without and with jumps in the asset prices is presented. Bayesian regression combined with the particle filtering method is used as the estimation framework. Within the framework, we propose a novel approach to handle jumps in order to neutralise their negative impact on the estimates of the key parameters of the model. An improvement in the sampling in the particle filtering method is discussed as well. Our analysis is supported by numerical simulations of the Heston model to investigate the performance of the estimators. In addition, a practical follow-along recipe is given to allow finding adequate estimates from any given data.

Full article

(This article belongs to the Special Issue Topics in Computational Econometrics and Finance: Theory and Applications)

Open Access Article

Factorization of a Spectral Density with Smooth Eigenvalues of a Multidimensional Stationary Time Series

Econometrics 2023, 11(2), 14; https://doi.org/10.3390/econometrics11020014 - 31 May 2023

Abstract

The aim of this paper is to give a multidimensional version of the classical one-dimensional case of smooth spectral density. A spectral density with smooth eigenvalues and

(This article belongs to the Special Issue High-Dimensional Time Series in Macroeconomics and Finance)

Open Access Article

Online Hybrid Neural Network for Stock Price Prediction: A Case Study of High-Frequency Stock Trading in the Chinese Market

Econometrics 2023, 11(2), 13; https://doi.org/10.3390/econometrics11020013 - 18 May 2023

Cited by 1

Abstract


Time-series data, which exhibit a low signal-to-noise ratio, non-stationarity, and non-linearity, are commonly seen in high-frequency stock trading, where the objective is to increase the likelihood of profit by taking advantage of tiny discrepancies in prices and trading on them quickly and in huge quantities. For this purpose, it is essential to apply a trading method that is capable of fast and accurate prediction from such time-series data. In this paper, we developed an online time series forecasting method for high-frequency trading (HFT) by integrating three neural network deep learning models, i.e., long short-term memory (LSTM), gated recurrent unit (GRU), and transformer; and we abbreviate the new method to online LGT or O-LGT. The key innovation underlying our method is its efficient storage management, which enables super-fast computing. Specifically, when computing the forecast for the immediate future, we only use the output calculated from the previous trading data (rather than the previous trading data themselves) together with the current trading data. Thus, the computing only involves updating the current data into the process. We evaluated the performance of O-LGT by analyzing high-frequency limit order book (LOB) data from the Chinese market. It shows that, in most cases, our model achieves a similar speed with a much higher accuracy than the conventional fast supervised learning models for HFT. However, with a slight sacrifice in accuracy, O-LGT is approximately 12 to 64 times faster than the existing high-accuracy neural network models for LOB data from the Chinese market.

Full article

Open Access Article

Local Gaussian Cross-Spectrum Analysis

Econometrics 2023, 11(2), 12; https://doi.org/10.3390/econometrics11020012 - 21 Apr 2023

Abstract


The ordinary spectrum is restricted in its applications, since it is based on the second-order moments (auto- and cross-covariances). Alternative approaches to spectrum analysis have been investigated based on other measures of dependence. One such approach was developed for univariate time series by the authors of this paper using the local Gaussian auto-spectrum based on the local Gaussian auto-correlations. This makes it possible to detect local structures in univariate time series that look similar to white noise when investigated by the ordinary auto-spectrum. In this paper, the local Gaussian approach is extended to a local Gaussian cross-spectrum for multivariate time series. The local Gaussian cross-spectrum has the desirable property that it coincides with the ordinary cross-spectrum for Gaussian time series, which implies that it can be used to detect non-Gaussian traits in the time series under investigation. In particular, if the ordinary spectrum is flat, then peaks and troughs of the local Gaussian spectrum can indicate nonlinear traits, which potentially might reveal local periodic phenomena that are undetected in an ordinary spectral analysis.

Full article

(This article belongs to the Special Issue Topics in Computational Econometrics and Finance: Theory and Applications)

Open Access Article

Information-Criterion-Based Lag Length Selection in Vector Autoregressive Approximations for I(2) Processes

Econometrics 2023, 11(2), 11; https://doi.org/10.3390/econometrics11020011 - 20 Apr 2023

Abstract

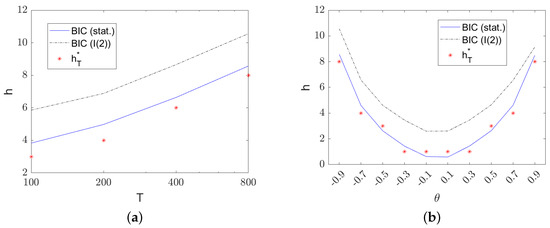

►▼

Show Figures

When using vector autoregressive (VAR) models for approximating time series, a key step is the selection of the lag length. Often this is performed using information criteria, even if a theoretical justification is lacking in some cases. For stationary processes, the asymptotic properties

[...] Read more.

When using vector autoregressive (VAR) models for approximating time series, a key step is the selection of the lag length. Often this is performed using information criteria, even if a theoretical justification is lacking in some cases. For stationary processes, the asymptotic properties of the corresponding estimators are well documented in great generality in the book Hannan and Deistler (1988). If the data-generating process is not a finite-order VAR, the selected lag length typically tends to infinity as a function of the sample size. For invertible vector autoregressive moving average (VARMA) processes, this typically happens roughly proportional to


Topical Collections

Topical Collection in Econometrics: Econometric Analysis of Climate Change

Collection Editors: Claudio Morana, J. Isaac Miller
