Journal Description

Journal of Imaging

Journal of Imaging is an international, multi/interdisciplinary, peer-reviewed, open access journal of imaging techniques published online monthly by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), PubMed, PMC, dblp, Inspec, Ei Compendex, and other databases.

- Journal Rank: CiteScore - Q2 (Computer Graphics and Computer-Aided Design)

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 21.7 days after submission; acceptance to publication is undertaken in 3.8 days (median values for papers published in this journal in the second half of 2023).

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

Impact Factor: 3.2 (2022); 5-Year Impact Factor: 3.2 (2022)

Latest Articles

Decomposition Technique for Bio-Transmittance Imaging Based on Attenuation Coefficient Matrix Inverse

J. Imaging 2024, 10(1), 22; https://doi.org/10.3390/jimaging10010022 - 15 Jan 2024

Abstract

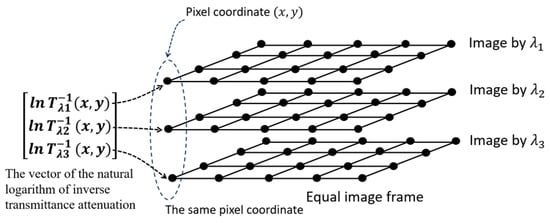


Human body tissue disease diagnosis will become more accurate if transmittance images, such as X-ray images, are separated according to each constituent tissue. This research proposes a new image decomposition technique based on the matrix inverse method for biological tissue images. The fundamental idea of this research is based on the fact that when k different monochromatic lights penetrate a biological tissue, they will experience different attenuation coefficients. Furthermore, the same happens when monochromatic light penetrates k different biological tissues, as they will also experience different attenuation coefficients. The various attenuation coefficients are arranged into a unique

(This article belongs to the Topic Applications in Image Analysis and Pattern Recognition)
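The idea outlined in this abstract can be illustrated with a small linear system. The following is a minimal sketch, not the authors' implementation: it assumes the Beer-Lambert law, two wavelengths and two tissues, and the coefficient values and function names are hypothetical.

```python
import math

def solve_2x2(a, b):
    """Solve a 2x2 linear system a @ x = b by explicit matrix inversion."""
    (a11, a12), (a21, a22) = a
    det = a11 * a22 - a12 * a21
    if abs(det) < 1e-12:
        raise ValueError("attenuation matrix is singular")
    inv = [[a22 / det, -a12 / det], [-a21 / det, a11 / det]]
    return [inv[0][0] * b[0] + inv[0][1] * b[1],
            inv[1][0] * b[0] + inv[1][1] * b[1]]

# Hypothetical attenuation coefficients (1/cm): rows = wavelengths, columns = tissues.
MU = [[0.50, 0.20],
      [0.30, 0.40]]

def decompose_pixel(transmittances):
    """Recover per-tissue thickness (cm) from transmittance at two wavelengths."""
    # Assumed Beer-Lambert model: -ln(T_i) = sum_j MU[i][j] * d_j
    absorbances = [-math.log(t) for t in transmittances]
    return solve_2x2(MU, absorbances)

# Forward-simulate one pixel with known thicknesses, then invert it.
true_d = [1.5, 0.8]
t = [math.exp(-(MU[i][0] * true_d[0] + MU[i][1] * true_d[1])) for i in range(2)]
recovered = decompose_pixel(t)
```

Applying `decompose_pixel` to every pixel would give one thickness map per tissue. The inversion only works when the rows of the coefficient matrix are linearly independent.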

Open Access Article

ControlFace: Feature Disentangling for Controllable Face Swapping

J. Imaging 2024, 10(1), 21; https://doi.org/10.3390/jimaging10010021 - 11 Jan 2024

Abstract

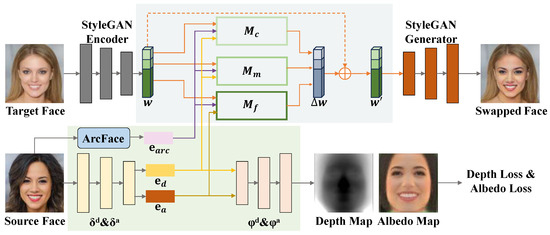


Face swapping is an intriguing and intricate task in the field of computer vision. Currently, most mainstream face swapping methods employ face recognition models to extract identity features and inject them into the generation process. Nonetheless, such methods often struggle to effectively transfer identity information, which leads to generated results failing to achieve a high identity similarity to the source face. Furthermore, if we can accurately disentangle identity information, we can achieve controllable face swapping, thereby providing more choices to users. In pursuit of this goal, we propose a new face swapping framework (ControlFace) based on the disentanglement of identity information. We disentangle the structure and texture of the source face, encoding and characterizing them in the form of feature embeddings separately. According to the semantic level of each feature representation, we inject them into the corresponding feature mapper and fuse them adequately in the latent space of StyleGAN. Owing to such disentanglement of structure and texture, we are able to controllably transfer parts of the identity features. Extensive experiments and comparisons with state-of-the-art face swapping methods demonstrate the superiority of our face swapping framework in terms of transferring identity information, producing high-quality face images, and controllable face swapping.


(This article belongs to the Section Image and Video Processing)

Open Access Article

Improved Loss Function for Mass Segmentation in Mammography Images Using Density and Mass Size

J. Imaging 2024, 10(1), 20; https://doi.org/10.3390/jimaging10010020 - 09 Jan 2024

Abstract

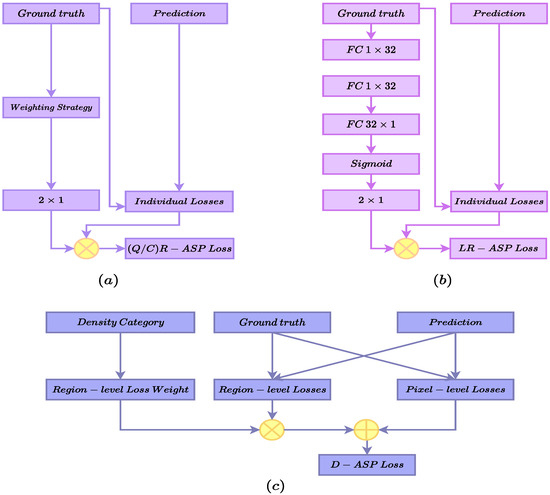


Mass segmentation is one of the fundamental tasks used when identifying breast cancer due to the comprehensive information it provides, including the location, size, and border of the masses. Despite significant improvement in the performance of the task, certain properties of the data, such as pixel class imbalance and the diverse appearance and sizes of masses, remain challenging. Recently, there has been a surge in articles proposing to address pixel class imbalance through the formulation of the loss function. While demonstrating an enhancement in performance, they mostly fail to address the problem comprehensively. In this paper, we propose a new perspective on the calculation of the loss that enables the binary segmentation loss to incorporate the sample-level information and region-level losses in a hybrid loss setting. We propose two variations of the loss to include mass size and density in the loss calculation. Also, we introduce a single loss variant using the idea of utilizing mass size and density to enhance focal loss. We tested the proposed method on benchmark datasets: CBIS-DDSM and INbreast. Our approach outperformed the baseline and state-of-the-art methods on both datasets.


(This article belongs to the Section Medical Imaging)
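For readers unfamiliar with focal loss, a minimal sketch follows. The per-pixel function is the standard binary focal loss. The size-based sample weight is purely a hypothetical illustration of bringing sample-level information into the loss, since the abstract does not give the authors' formula.

```python
import math

def focal_loss_pixel(p, y, gamma=2.0, alpha=0.25):
    """Standard binary focal loss for one pixel (p = predicted mass probability)."""
    p = min(max(p, 1e-7), 1.0 - 1e-7)      # clamp to keep log() finite
    p_t = p if y == 1 else 1.0 - p          # probability assigned to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)

def size_weighted_focal_loss(probs, labels, gamma=2.0, alpha=0.25):
    """Mean focal loss scaled by a HYPOTHETICAL sample-level weight from mass size."""
    n = len(labels)
    mass_ratio = sum(labels) / n            # fraction of pixels belonging to the mass
    weight = 1.0 + (1.0 - mass_ratio)       # assumption: smaller masses weigh more
    mean_fl = sum(focal_loss_pixel(p, y, gamma, alpha)
                  for p, y in zip(probs, labels)) / n
    return weight * mean_fl

labels = [0, 0, 0, 1]
confident = size_weighted_focal_loss([0.1, 0.1, 0.1, 0.9], labels)
uncertain = size_weighted_focal_loss([0.4, 0.4, 0.4, 0.6], labels)
```

The `(1 - p_t) ** gamma` factor down-weights pixels the model already classifies well, which is why focal loss is often used against pixel class imbalance.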

Open Access Article

Classification of Cocoa Beans by Analyzing Spectral Measurements Using Machine Learning and Genetic Algorithm

J. Imaging 2024, 10(1), 19; https://doi.org/10.3390/jimaging10010019 - 08 Jan 2024

Abstract


The quality of cocoa beans is crucial in influencing the taste, aroma, and texture of chocolate and consumer satisfaction. High-quality cocoa beans are valued on the international market, benefiting Ivorian producers. Our study uses advanced techniques to evaluate and classify cocoa beans by analyzing spectral measurements, integrating machine learning algorithms, and optimizing parameters through genetic algorithms. The results highlight the critical importance of parameter optimization for optimal performance. Logistic regression, support vector machines (SVM), and random forest algorithms demonstrate a consistent performance. XGBoost shows improvements in the second generation, followed by a slight decrease in the fifth. On the other hand, the performance of AdaBoost is not satisfactory in generations two and five. The results are presented on three levels: first, using all parameters reveals that logistic regression obtains the best performance with a precision of 83.78%. Then, the results of the parameters selected in the second generation still show the logistic regression with the best precision of 84.71%. Finally, the results of the parameters chosen in the second generation place random forest in the lead with a score of 74.12%.


(This article belongs to the Special Issue Multi-Spectral and Color Imaging: Theory and Application)

Open Access Review

Advancements and Challenges in Handwritten Text Recognition: A Comprehensive Survey

J. Imaging 2024, 10(1), 18; https://doi.org/10.3390/jimaging10010018 - 08 Jan 2024

Abstract

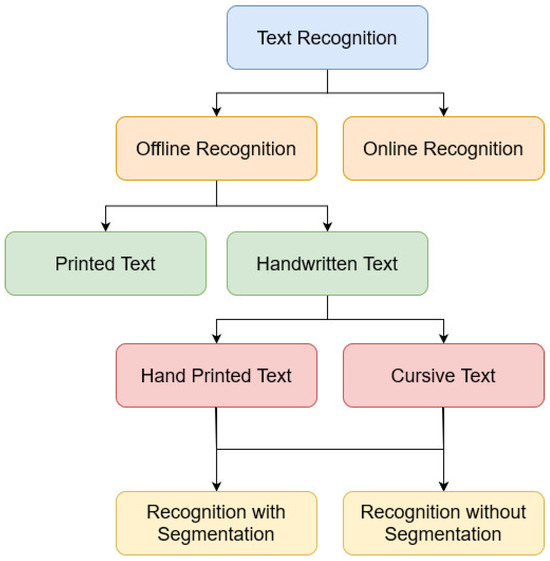


Handwritten Text Recognition (HTR) is essential for digitizing historical documents in different kinds of archives. In this study, we introduce a hybrid form archive written in French: the Belfort civil registers of births. The digitization of these historical documents is challenging due to their unique characteristics such as writing style variations, overlapped characters and words, and marginal annotations. The objective of this survey paper is to summarize research on handwritten text documents and provide research directions toward effectively transcribing this French dataset. To achieve this goal, we present a brief survey of several modern and historical offline HTR systems for different international languages, and the top state-of-the-art contributions reported for the French language specifically. The survey classifies the HTR systems based on techniques employed, datasets used, publication years, and the level of recognition. Furthermore, an analysis of the systems’ accuracies is presented, highlighting the best-performing approach. We also showcase the performance of some commercial HTR systems. In addition, this paper presents a summary of the HTR datasets that are publicly available, especially those identified as benchmark datasets in the International Conference on Document Analysis and Recognition (ICDAR) and the International Conference on Frontiers in Handwriting Recognition (ICFHR) competitions. This paper, therefore, presents updated state-of-the-art research in HTR and highlights new directions in the research field.


(This article belongs to the Section Computer Vision and Pattern Recognition)

Open Access Review

The Critical Photographic Variables Contributing to Skull-Face Superimposition Methods to Assist Forensic Identification of Skeletons: A Review


J. Imaging 2024, 10(1), 17; https://doi.org/10.3390/jimaging10010017 - 07 Jan 2024

Abstract


When an unidentified skeleton is discovered, a video superimposition (VS) of the skull and a facial photograph may be undertaken to assist identification. In the first instance, the method is fundamentally a photographic one, requiring the overlay of two 2D photographic images at transparency for comparison. Presently, mathematical and anatomical techniques used to compare skull/face anatomy dominate superimposition discussions; however, little attention has been paid to the equally fundamental photographic prerequisites that underpin these methods. This predisposes the method to error, as the optical parameters of the two comparison photographs are (presently) rarely matched prior to, or for, comparison. In this paper, we: (1) review the basic but critical photographic prerequisites that apply to VS; (2) propose a replacement for the current anatomy-centric searches for the correct ‘skull pose’ with a photographic-centric camera vantage point search; and (3) demarcate superimposition as a clear two-stage phased procedure that depends first on photographic parameter matching, as a prerequisite to undertaking any anatomical comparison(s).

Open Access Article

Combining Synthetic Images and Deep Active Learning: Data-Efficient Training of an Industrial Object Detection Model


J. Imaging 2024, 10(1), 16; https://doi.org/10.3390/jimaging10010016 - 06 Jan 2024

Abstract


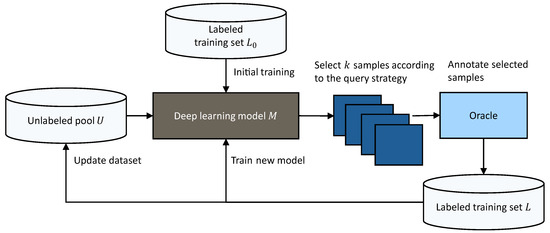


Generating synthetic data is a promising solution to the challenge of limited training data for industrial deep learning applications. However, training on synthetic data and testing on real-world data creates a sim-to-real domain gap. Research has shown that the combination of synthetic and real images leads to better results than those that are generated using only one source of data. In this work, the generation of synthetic training images via physics-based rendering is combined with deep active learning for an industrial object detection task to iteratively improve model performance over time. Our experimental results show that synthetic images improve model performance, especially at the beginning of the model’s life cycle with limited training data. Furthermore, our implemented hybrid query strategy selects diverse and informative new training images in each active learning cycle, which outperforms random sampling. In conclusion, this work presents a workflow to train and iteratively improve object detection models with a small number of real-world images, leading to data-efficient and cost-effective computer vision models.

Open Access Review

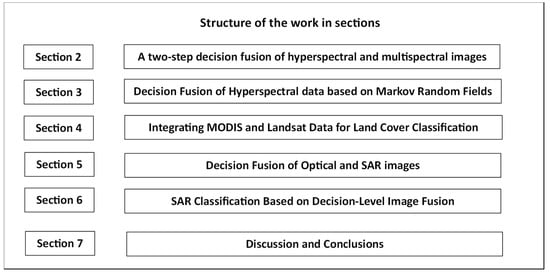

Decision Fusion at Pixel Level of Multi-Band Data for Land Cover Classification—A Review

J. Imaging 2024, 10(1), 15; https://doi.org/10.3390/jimaging10010015 - 05 Jan 2024

Abstract


According to existing signatures for various kinds of land cover coming from different spectral bands, i.e., optical, thermal infrared and PolSAR, it is possible to infer the land cover type by taking a single decision from each of the spectral bands. By fusing these decisions, it is possible to radically improve the reliability of the decision regarding each pixel, taking into consideration the correlation of the individual decisions of the specific pixel as well as additional information transferred from the pixels’ neighborhood. Different remotely sensed data contribute their own information regarding the characteristics of the materials lying in each separate pixel. Hyperspectral and multispectral images give analytic information regarding the reflectance of each pixel in a very detailed manner. Thermal infrared images give valuable information regarding the temperature of the surface covered by each pixel, which is very important for recording thermal locations in urban regions. Finally, SAR data provide structural and electrical characteristics of each pixel. Combining information from some of these sources further improves the capability for reliable categorization of each pixel. The necessary mathematical background regarding pixel-based classification and decision fusion methods is analytically presented.


(This article belongs to the Special Issue Image Processing and Computer Vision: Algorithms and Applications)

Open Access Article

Forest Disturbance Monitoring Using Cloud-Based Sentinel-2 Satellite Imagery and Machine Learning


J. Imaging 2024, 10(1), 14; https://doi.org/10.3390/jimaging10010014 - 05 Jan 2024

Abstract


Forest damage has become more frequent in Hungary in the last decades, and remote sensing offers a powerful tool for monitoring it rapidly and cost-effectively. A combined approach was developed to utilise high-resolution ESA Sentinel-2 satellite imagery, Google Earth Engine cloud computing, and field-based forest inventory data. Maps and charts were derived from vegetation indices (NDVI and Z∙NDVI) of satellite images to detect forest disturbances in the Hungarian study site for the period of 2017–2020. The NDVI maps were classified to reveal forest disturbances, and the cloud-based method successfully showed drought and frost damage in the oak-dominated Nagyerdő forest of Debrecen. Differences in the reactions to damage between tree species were visible on the index maps; therefore, a random forest machine learning classifier was applied to show the spatial distribution of dominant species. An accuracy assessment was accomplished with confusion matrices that compared classified index maps to field-surveyed data, demonstrating 99.1% producer, 71% user, and 71% total accuracies for forest damage and 81.9% for tree species. Based on the results of this study and the resilience of Google Earth Engine, the presented method has the potential to be extended to monitor all of Hungary in a faster, more accurate way using systematically collected field data, the latest satellite imagery, and artificial intelligence.


(This article belongs to the Special Issue Object Detection in Remote Sensing Images: Progress and Challenges)
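The quantities named in this abstract can be sketched in a few lines. NDVI is the standard (NIR - Red) / (NIR + Red) ratio. Treating Z∙NDVI as a per-pixel z-score against a reference series is an assumption here, and all reflectance values and counts below are hypothetical.

```python
from statistics import mean, pstdev

def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    total = nir + red
    return 0.0 if total == 0 else (nir - red) / total

def z_ndvi(current, history):
    """Standardized NDVI anomaly against a reference series for the same pixel."""
    sigma = pstdev(history)
    return 0.0 if sigma == 0 else (current - mean(history)) / sigma

def producer_user_accuracy(tp, fn, fp):
    """Producer's accuracy = tp / reference total; user's accuracy = tp / mapped total."""
    return tp / (tp + fn), tp / (tp + fp)

# Hypothetical reflectances for one pixel over three reference years.
history = [ndvi(0.50, 0.08), ndvi(0.52, 0.08), ndvi(0.48, 0.08)]
anomaly = z_ndvi(ndvi(0.30, 0.12), history)   # strongly negative: possible disturbance
pa, ua = producer_user_accuracy(tp=90, fn=10, fp=30)
```

A high producer's accuracy with a lower user's accuracy, as reported for forest damage above, means most real damage is detected but a share of the pixels mapped as damaged are false alarms.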

Open Access Article

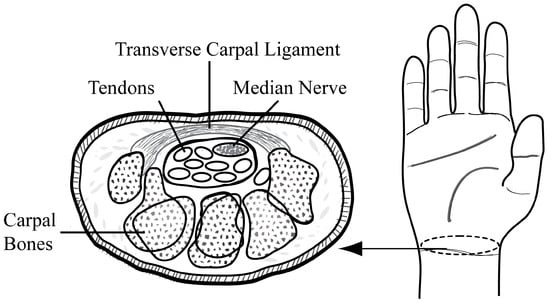

Convolutional Neural Network Approaches in Median Nerve Morphological Assessment from Ultrasound Images


J. Imaging 2024, 10(1), 13; https://doi.org/10.3390/jimaging10010013 - 05 Jan 2024

Abstract


Ultrasound imaging has been used to investigate compression of the median nerve in carpal tunnel syndrome patients. Ultrasound imaging and the extraction of median nerve parameters from ultrasound images are crucial and are usually performed manually by experts. The manual annotation of ultrasound images relies on experience, and intra- and interrater reliability may vary among studies. In this study, two types of convolutional neural networks (CNNs), U-Net and SegNet, were used to extract the median nerve morphology. To the best of our knowledge, the application of these methods to ultrasound imaging of the median nerve has not yet been investigated. Spearman’s correlation and Bland–Altman analyses were performed to investigate the correlation and agreement between manual annotation and CNN estimation, namely, the cross-sectional area, circumference, and diameter of the median nerve. The results showed that the intersection over union (IoU) of U-Net (0.717) was greater than that of SegNet (0.625). A few images in SegNet had an IoU below 0.6, decreasing the average IoU. In both models, the IoU decreased when the median nerve was elongated longitudinally with a blurred outline. The Bland–Altman analysis revealed that, in general, both the U-Net- and SegNet-estimated measurements showed 95% limits of agreement with manual annotation. These results show that these CNN models are promising tools for median nerve ultrasound imaging analysis.


(This article belongs to the Special Issue Application of Machine Learning Using Ultrasound Images, Volume II)
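The two evaluation measures mentioned in this abstract can be sketched as follows. This is an illustrative computation with hypothetical masks and measurements, not the study's data or code.

```python
from statistics import mean, stdev

def iou(mask_a, mask_b):
    """Intersection over union of two binary masks given as flat 0/1 lists."""
    inter = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    union = sum(1 for a, b in zip(mask_a, mask_b) if a or b)
    return 1.0 if union == 0 else inter / union

def bland_altman_limits(manual, estimated):
    """Return (bias, lower, upper): mean difference and 95% limits of agreement."""
    diffs = [e - m for m, e in zip(manual, estimated)]
    bias = mean(diffs)
    spread = 1.96 * stdev(diffs)   # sample SD of the paired differences
    return bias, bias - spread, bias + spread

manual_mask = [0, 1, 1, 1, 0, 0]
cnn_mask = [0, 0, 1, 1, 1, 0]
score = iou(manual_mask, cnn_mask)   # 2 overlapping pixels / 4 pixels in the union

# Hypothetical cross-sectional areas (mm^2): manual annotation vs. CNN estimate.
manual_csa = [10.0, 12.0, 9.5, 11.0]
cnn_csa = [10.4, 11.8, 9.9, 11.3]
bias, lower, upper = bland_altman_limits(manual_csa, cnn_csa)
```

IoU scores the overlap of the segmented regions, while the Bland-Altman limits describe how far a CNN-derived measurement is expected to deviate from the manual one.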

Open Access Article

Multispectral Deep Neural Network Fusion Method for Low-Light Object Detection

J. Imaging 2024, 10(1), 12; https://doi.org/10.3390/jimaging10010012 - 31 Dec 2023

Abstract


Despite significant strides in achieving vehicle autonomy, robust perception under low-light conditions remains a persistent challenge. In this study, we investigate the potential of multispectral imaging, leveraging deep learning models to enhance object detection performance in the context of nighttime driving. Features encoded from the red, green, and blue (RGB) visual spectrum and thermal infrared images are combined to implement a multispectral object detection model. This has proven to be more effective compared to using visual channels only, as thermal images provide complementary information when discriminating objects in low-illumination conditions. Additionally, there is a lack of studies on effectively fusing these two modalities for optimal object detection performance. In this work, we present a framework based on the Faster R-CNN architecture with a feature pyramid network. Moreover, we design various fusion approaches using concatenation and addition operators at varying stages of the network to analyze their impact on object detection performance. Our experimental results on the KAIST and FLIR datasets show that our framework outperforms the baseline experiments of the unimodal input source and the existing multispectral object detectors.


(This article belongs to the Special Issue Computer Vision and Deep Learning: Trends and Applications (2nd Edition))
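The difference between the two fusion operators named in this abstract can be shown on toy feature vectors. This is a minimal sketch with hypothetical values, where each list stands for the channel responses at one spatial location. It does not reproduce the paper's Faster R-CNN pipeline.

```python
def fuse_concat(rgb_feat, thermal_feat):
    """Concatenation fusion: channel count becomes the sum of both inputs."""
    return rgb_feat + thermal_feat   # list concatenation along the channel axis

def fuse_add(rgb_feat, thermal_feat):
    """Addition fusion: element-wise sum, so both inputs need equal channel counts."""
    if len(rgb_feat) != len(thermal_feat):
        raise ValueError("addition fusion requires matching channel counts")
    return [r + t for r, t in zip(rgb_feat, thermal_feat)]

rgb = [0.2, 0.7, 0.1]        # hypothetical RGB-branch features
thermal = [0.9, 0.1, 0.4]    # hypothetical thermal-branch features
concat = fuse_concat(rgb, thermal)
added = fuse_add(rgb, thermal)
```

Concatenation keeps both modalities separate and leaves the mixing to later layers, at the cost of more channels. Addition keeps the channel count fixed but merges the modalities immediately.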

Open Access Article

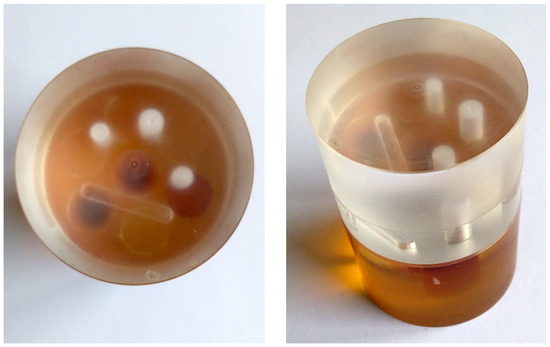

Simulation-Assisted Augmentation of Missing Wedge and Region-of-Interest Computed Tomography Data

J. Imaging 2024, 10(1), 11; https://doi.org/10.3390/jimaging10010011 - 29 Dec 2023

Abstract


This study reports a strategy to use sophisticated, realistic X-ray Computed Tomography (CT) simulations to reduce Missing Wedge (MW) and Region-of-Interest (RoI) artifacts in FBP (Filtered Back-Projection) reconstructions. A 3D model of the object is used to simulate the projections that include the missing information inside the MW and outside the RoI. Such information augments the experimental projections, thereby drastically improving the reconstruction results. An X-ray CT dataset of a selected object is modified to mimic various degrees of RoI and MW problems. The results are evaluated in comparison to a standard FBP reconstruction of the complete dataset. In all cases, the reconstruction quality is significantly improved. Small inclusions present in the scanned object are better localized and quantified. The proposed method has the potential to improve the results of any CT reconstruction algorithm.


(This article belongs to the Topic Research on the Application of Digital Signal Processing)

Open Access Review

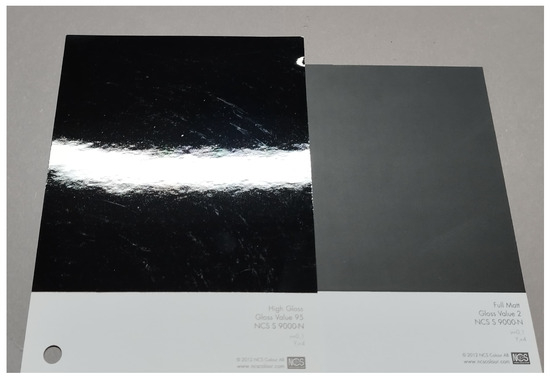

Visually Significant Dimensions and Parameters for Gloss


J. Imaging 2024, 10(1), 10; https://doi.org/10.3390/jimaging10010010 - 29 Dec 2023

Abstract


The appearance of a surface depends on four main appearance attributes, namely color, gloss, texture, and translucency. Gloss is an important attribute that people use to understand surface appearance, right after color. In the past decades, extensive research has been conducted in the field of gloss and gloss perception, with different aims to understand the complex nature of gloss appearance. This paper reviews the research conducted on the topic of gloss and gloss perception and discusses the results and potential future research on gloss and gloss perception. Our primary focus in this review is on research in the field of gloss and the setup of associated psychophysical experiments. However, due to the industrial and application-oriented nature of this review, the primary focus is the gloss of dielectric materials, a critical aspect in various industries. This review not only summarizes the existing research but also highlights potential avenues for future research in the pursuit of a more comprehensive understanding of gloss perception.


(This article belongs to the Special Issue Imaging Technologies for Understanding Material Appearance)

Open Access Article

Upper First and Second Molar Pulp Chamber Endodontic Anatomy Evaluation According to a Recent Classification: A Cone Beam Computed Tomography Study


J. Imaging 2024, 10(1), 9; https://doi.org/10.3390/jimaging10010009 - 28 Dec 2023

Abstract


(1) The possibility of knowing information about the anatomy in advance, in particular the arrangement of the endodontic system, is crucial for successful treatment and for avoiding complications during endodontic therapy; the aim was to find a correlation between a minimally invasive and less stressful endodontic access on Ni-Ti rotary instruments, but which allows correct vision and identification of anatomical reference points, simplifying the typologies based on the shape of the pulp chamber in coronal three-dimensional exam views. (2) Based on the inclusion criteria, 104 maxillary molars (52 maxillary first molars and 52 maxillary second molars) were included in the study after 26 Cone Beam Computed Tomography (CBCT) acquisitions (from 15 males and 11 females). Linear measurements were taken with the CBCT-dedicated software for subsequent analysis. (3) The results of the present study show data similar to those already published about this topic. Pawar and Singh’s simplified classification actually seems to offer a schematic way of classification that includes almost all of the cases that have been analyzed. (4) The use of a diagnostic examination with a wide Field of View (FOV) and low radiation dose represents an exam capable of obtaining a lot of clinical information for endodontic treatment. Nevertheless, the endodontic anatomy of the upper second molar represents a major challenge for the clinician due to its complexity both in canal shape and in ramification.


(This article belongs to the Section Medical Imaging)

Open Access Article

A Robust Machine Learning Model for Diabetic Retinopathy Classification

J. Imaging 2024, 10(1), 8; https://doi.org/10.3390/jimaging10010008 - 28 Dec 2023

Abstract


Ensemble learning is a process that belongs to the artificial intelligence (AI) field. It helps to choose a robust machine learning (ML) model, usually used for data classification. AI is closely connected with image processing and feature classification, and it can also be successfully applied to analyzing fundus eye images. Diabetic retinopathy (DR) is a disease that can cause vision loss and blindness, which, from an imaging point of view, can be shown when screening the eyes. Image processing tools can analyze and extract the features from fundus eye images, and these are combined with ML classifiers that can perform their classification among different disease classes. The outcomes integrated into automated diagnostic systems can be a real success for physicians and patients. In this study, on the image processing side, the manipulation of the contrast with the gamma correction parameter was applied because DR affects the blood vessels, and the structure of the eyes becomes disorderly. Therefore, the analysis of the texture with two types of entropies was necessary. Shannon and fuzzy entropies and contrast manipulation led to ten original features used in the classification process. The machine learning library PyCaret performs complex tasks, and the empirical process shows that of the fifteen classifiers, the gradient boosting classifier (GBC) provides the best results. Indeed, the proposed model can classify the DR degrees as normal or severe, achieving an accuracy of 0.929, an F1 score of 0.902, and an area under the curve (AUC) of 0.941. The validation of the selected model with a bootstrap statistical technique was performed. The novelty of the study consists of the extraction of features from preprocessed fundus eye images, their classification, and the manipulation of the contrast in a controlled way.


(This article belongs to the Topic Applications in Image Analysis and Pattern Recognition)
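Two of the operations named in this abstract, gamma correction and Shannon entropy, can be sketched on a tiny grayscale patch. This is an illustrative example with hypothetical pixel values. It does not reproduce the study's ten features or its fuzzy entropy.

```python
import math
from collections import Counter

def gamma_correct(pixels, gamma):
    """Apply out = 255 * (in / 255) ** gamma to 8-bit grayscale values."""
    return [round(255 * (p / 255) ** gamma) for p in pixels]

def shannon_entropy(pixels):
    """Shannon entropy (bits) of the gray-level histogram."""
    counts = Counter(pixels)
    n = len(pixels)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

patch = [0, 64, 128, 255]
brighter = gamma_correct(patch, 0.5)    # gamma < 1 lifts the mid-tones
entropy_bits = shannon_entropy(patch)   # four equally likely gray levels
```

Computing the entropy of the same image at several gamma settings is one plausible way to turn contrast manipulation into texture features, though the exact feature definitions are the authors' own.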

Open Access Article

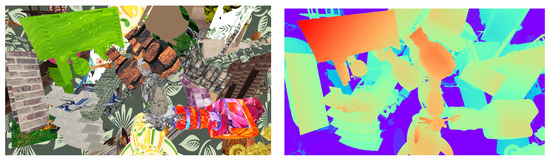

Fast Data Generation for Training Deep-Learning 3D Reconstruction Approaches for Camera Arrays

J. Imaging 2024, 10(1), 7; https://doi.org/10.3390/jimaging10010007 - 27 Dec 2023

Abstract


In the last decade, many neural network algorithms have been proposed to solve depth reconstruction. Our focus is on reconstruction from images captured by multi-camera arrays, which are grids of vertically and horizontally aligned cameras that are uniformly spaced. Training these networks using supervised learning requires data with ground truth. Existing datasets simulate specific configurations. For example, they represent a fixed-size camera array or a fixed space between cameras. When the distance between cameras is small, the array is said to have a short baseline. Light-field cameras, with a baseline of less than a centimeter, are for instance in this category. In contrast, an array with a large space between cameras is said to have a wide baseline. In this paper, we present a purely virtual data generator to create large training datasets: this generator can adapt to any camera array configuration. Parameters are for instance the size (number of cameras) and the distance between two cameras. The generator creates virtual scenes by randomly selecting objects and textures and following user-defined parameters like the disparity range or image parameters (resolution, color space). Generated data are used only for the learning phase. They are unrealistic but can present concrete challenges for disparity reconstruction such as thin elements and the random assignment of textures to objects to avoid color bias. Our experiments focus on wide-baseline configuration which requires more datasets. We validate the generator by testing the generated datasets with known deep-learning approaches as well as depth reconstruction algorithms. The validation experiments have proven successful.

(This article belongs to the Special Issue Geometry Reconstruction from Images (2nd Edition))

Open Access Article

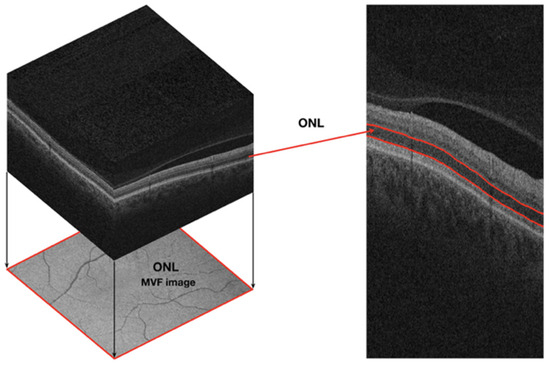

When Sex Matters: Differences in the Central Nervous System as Imaged by OCT through the Retina

J. Imaging 2024, 10(1), 6; https://doi.org/10.3390/jimaging10010006 - 25 Dec 2023

Abstract

Background: Retinal texture has gained momentum as a source of biomarkers of neurodegeneration, as it is sensitive to subtle differences in the central nervous system from texture analysis of the neuroretina. Sex differences in the retina structure, as detected by layer thickness measurements from optical coherence tomography (OCT) data, have been discussed in the literature. However, the effect of sex on retinal interocular differences in healthy adults has been overlooked and remains largely unreported. Methods: We computed mean value fundus images for the neuroretina layers as imaged by OCT of healthy individuals. Texture metrics were obtained from these images to assess whether women and men have the same retina texture characteristics in both eyes. Texture features were tested for group mean differences between the right and left eye. Results: Corrected texture differences exist only in the female group. Conclusions: This work illustrates that the differences between the right and left eyes manifest differently in females and males. This further supports the need for tight control and minute analysis in studies where interocular asymmetry may be used as a disease biomarker, and the potential of texture analysis applied to OCT imaging to spot differences in the retina.

(This article belongs to the Special Issue Advances in Retinal Image Processing)

Open Access Article

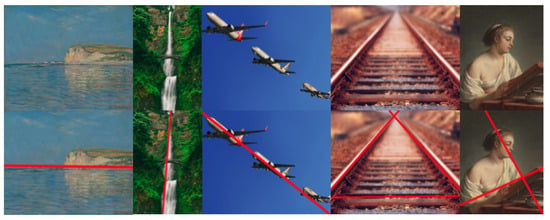

Reconstructing Image Composition: Computation of Leading Lines

J. Imaging 2024, 10(1), 5; https://doi.org/10.3390/jimaging10010005 - 25 Dec 2023

Abstract

The composition of an image is a critical element chosen by the author to construct an image that conveys a narrative and related emotions. Other key elements include framing, lighting, and colors. Assessing classical and simple composition rules in an image, such as the well-known “rule of thirds”, has proven effective in evaluating the aesthetic quality of an image. It is widely acknowledged that composition is emphasized by the presence of leading lines. While these leading lines may not be explicitly visible in the image, they connect key points within the image and can also serve as boundaries between different areas of the image. For instance, the boundary between the sky and the ground can be considered a leading line in the image. Making the image’s composition explicit through a set of leading lines is valuable when analyzing an image or assisting in photography. To the best of our knowledge, no computational method has been proposed to trace image leading lines. We conducted user studies to assess the agreement among image experts when requesting them to draw leading lines on images. According to these studies, which demonstrate that experts concur in identifying leading lines, this paper introduces a fully automatic computational method for recovering the leading lines that underlie the image’s composition. Our method consists of two steps: firstly, based on feature detection, potential weighted leading lines are established; secondly, these weighted leading lines are grouped to generate the leading lines of the image. We evaluate our method through both subjective and objective studies, and we propose an objective metric to compare two sets of leading lines.

Open Access Article

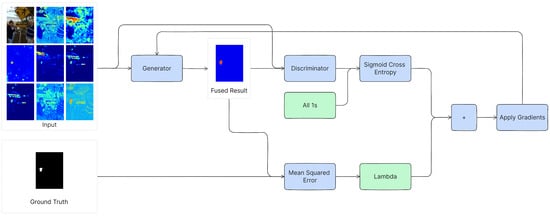

Harmonizing Image Forgery Detection & Localization: Fusion of Complementary Approaches

J. Imaging 2024, 10(1), 4; https://doi.org/10.3390/jimaging10010004 - 25 Dec 2023

Abstract

Image manipulation is easier than ever, often facilitated using accessible AI-based tools. This poses significant risks when used to disseminate disinformation, false evidence, or fraud, which highlights the need for image forgery detection and localization methods to combat this issue. While some recent detection methods demonstrate good performance, there is still a significant gap to be closed to consistently and accurately detect image manipulations in the wild. This paper aims to enhance forgery detection and localization by combining existing detection methods that complement each other. First, we analyze these methods’ complementarity, with an objective measurement of complementariness, and calculation of a target performance value using a theoretical oracle fusion. Then, we propose a novel fusion method that combines the existing methods’ outputs. The proposed fusion method is trained using a Generative Adversarial Network architecture. Our experiments demonstrate improved detection and localization performance on a variety of datasets. Although our fusion method is hindered by a lack of generalization, this is a common problem in supervised learning, and hence a motivation for future work. In conclusion, this work deepens our understanding of forgery detection methods’ complementariness and how to harmonize them. As such, we contribute to better protection against image manipulations and the battle against disinformation.

(This article belongs to the Special Issue Robust Deep Learning Techniques for Multimedia Forensics and Security)

Open Access Article

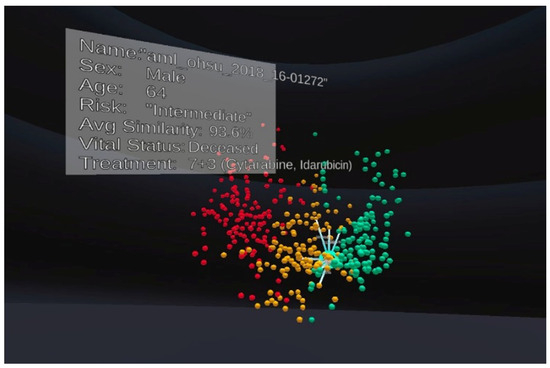

Exocentric and Egocentric Views for Biomedical Data Analytics in Virtual Environments—A Usability Study

J. Imaging 2024, 10(1), 3; https://doi.org/10.3390/jimaging10010003 - 23 Dec 2023

Abstract

Biomedical datasets are usually large and complex, containing biological information about a disease. Computational analytics and the interactive visualisation of such data are essential decision-making tools for disease diagnosis and treatment. Oncology data models were observed in a virtual reality environment to analyse gene expression and clinical data from a cohort of cancer patients. The technology enables a new way to view information from the outside in (exocentric view) and the inside out (egocentric view), which is otherwise not possible on ordinary displays. This paper presents a usability study on the exocentric and egocentric views of biomedical data visualisation in virtual reality and the impact of usability on human behaviour and perception. Our study revealed that the performance time was faster in the exocentric view than in the egocentric view. The exocentric view also received higher ease-of-use scores than the egocentric view. However, the influence of usability on time performance was only evident in the egocentric view. The findings of this study could be used to guide future development and refinement of visualisation tools in virtual reality.

(This article belongs to the Section Mixed, Augmented and Virtual Reality)

Topics

Topic in

Sensors, J. Imaging, Electronics, Applied Sciences, Entropy, Digital, J. Intell.

Advances in Perceptual Quality Assessment of User Generated Contents

Topic Editors: Guangtao Zhai, Xiongkuo Min, Menghan Hu, Wei Zhou

Deadline: 31 March 2024

Topic in

Algorithms, Diagnostics, Entropy, Information, J. Imaging

Application of Machine Learning in Molecular Imaging

Topic Editors: Allegra Conti, Nicola Toschi, Marianna Inglese, Andrea Duggento, Matthew Grech-Sollars, Serena Monti, Giancarlo Sportelli, Pietro Carra

Deadline: 31 May 2024

Topic in

Applied Sciences, Computation, Entropy, J. Imaging

Color Image Processing: Models and Methods (CIP: MM)

Topic Editors: Giuliana Ramella, Isabella Torcicollo

Deadline: 30 July 2024

Topic in

Applied Sciences, Sensors, J. Imaging, MAKE

Applications in Image Analysis and Pattern Recognition

Topic Editors: Bin Fan, Wenqi Ren

Deadline: 31 August 2024

Special Issues

Special Issue in

J. Imaging

Machine Learning for Human Activity Recognition

Guest Editor: Hojjat Salehinejad

Deadline: 31 January 2024

Special Issue in

J. Imaging

Fluorescence Imaging and Analysis of Cellular System

Guest Editor: Ashutosh Sharma

Deadline: 1 March 2024

Special Issue in

J. Imaging

Robust Deep Learning Techniques for Multimedia Forensics and Security

Guest Editors: Benedetta Tondi, Irene Amerini, Andrea Costanzo, Minoru Kuribayashi

Deadline: 16 March 2024

Special Issue in

J. Imaging

Recent Advances in Image-Based Geotechnics II

Guest Editor: Joana Fonseca

Deadline: 31 March 2024