Identifying the Regions of a Space with the Self-Parameterized Recursively Assessed Decomposition Algorithm (SPRADA)

Deep Learning Techniques for Radar-Based Continuous Human Activity Recognition

Analyzing Quality Measurements for Dimensionality Reduction

Beyond Weisfeiler–Lehman with Local Ego-Network Encodings

Journal Description

Machine Learning and Knowledge Extraction

Machine Learning and Knowledge Extraction (MAKE) is an international, peer-reviewed, open access journal on machine learning and its applications. It publishes original research articles, reviews, tutorials, research ideas, short notes and Special Issues that focus on machine learning and applications. Please see our video on YouTube explaining the MAKE journal concept. The journal is published quarterly online by MDPI.

- Open Access: free for readers, with article processing charges (APC) paid by authors or their institutions.

- High Visibility: indexed within Scopus, ESCI (Web of Science), dblp, and other databases.

- Rapid Publication: manuscripts are peer-reviewed and a first decision is provided to authors approximately 19.9 days after submission; acceptance to publication is undertaken in 3.6 days (median values for papers published in this journal in the second half of 2023).

- Journal Rank: CiteScore - Q1 (Artificial Intelligence)

- Recognition of Reviewers: reviewers who provide timely, thorough peer-review reports receive vouchers entitling them to a discount on the APC of their next publication in any MDPI journal, in appreciation of the work done.

- MAKE is a companion journal of Entropy.

Impact Factor: 3.9 (2022); 5-Year Impact Factor: 4.8 (2022)

Latest Articles

An Ensemble-Based Multi-Classification Machine Learning Classifiers Approach to Detect Multiple Classes of Cyberbullying

Mach. Learn. Knowl. Extr. 2024, 6(1), 156-170; https://doi.org/10.3390/make6010009 - 12 Jan 2024

Abstract

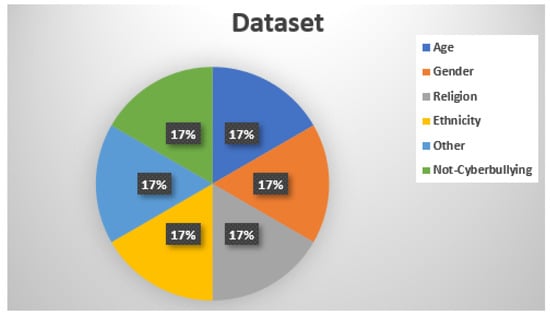

The impact of communication through social media is currently considered a significant social issue, since it can lead to inappropriate online behavior referred to as cyberbullying. Automated systems are capable of efficiently identifying cyberbullying and performing sentiment analysis on social media platforms. This study focuses on enhancing a system to detect six types of cyberbullying tweets. Employing multi-classification algorithms on a cyberbullying dataset, our approach achieved high accuracy, particularly with TF-IDF (bigram) feature extraction, and outperformed the results reported for previous experiments on the same dataset. Two ensemble machine learning methods, employing N-gram TF-IDF feature extraction, demonstrated superior classification performance: three popular multi-classification algorithms (Decision Trees, Random Forest, and XGBoost) were combined separately into two different ensemble methods, and these ensemble classifiers outperformed traditional machine learning classifier models. The stacking classifier reached 90.71% accuracy and the voting classifier 90.44%. The experimental results show that the framework can detect six different types of cyberbullying more efficiently, with an accuracy rate of 0.9071.
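
The abstract credits much of the accuracy to TF-IDF features over word bigrams. The following is a minimal, standard-library-only sketch of that feature-extraction step, not the authors' pipeline: the tokenizer, the smoothed IDF formula, the toy tweets, and the function names `bigrams` and `tfidf_bigrams` are all our own illustrative assumptions.

```python
import math
import re
from collections import Counter


def bigrams(text):
    """Lowercase, keep word characters only, and return adjacent word pairs."""
    tokens = re.findall(r"\w+", text.lower())
    return [f"{a} {b}" for a, b in zip(tokens, tokens[1:])]


def tfidf_bigrams(docs):
    """Return one {bigram: weight} dict per document (hypothetical helper).

    TF is the bigram count divided by the number of bigrams in the document.
    IDF uses the smoothed form log((1 + N) / (1 + df)) + 1, an assumption
    matching scikit-learn's smooth_idf=True formula.
    """
    grams = [bigrams(d) for d in docs]
    n_docs = len(docs)
    # Document frequency: in how many documents each bigram appears.
    df = Counter(g for doc in grams for g in set(doc))
    idf = {g: math.log((1 + n_docs) / (1 + c)) + 1 for g, c in df.items()}
    vectors = []
    for doc in grams:
        counts = Counter(doc)
        total = len(doc) or 1
        vectors.append({g: (c / total) * idf[g] for g, c in counts.items()})
    return vectors


# Toy tweets for illustration only; not from the paper's dataset.
tweets = [
    "you are so dumb",
    "you are so kind",
    "go back to your country",
]
vecs = tfidf_bigrams(tweets)
print(sorted(vecs[0], key=vecs[0].get, reverse=True)[0])  # prints "so dumb"
```

A bigram shared across documents, such as "you are", receives a lower weight than the distinguishing "so dumb", which is what lets bigram TF-IDF separate categories of tweets before they reach a classifier or ensemble.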

(This article belongs to the Section Privacy)

Open Access Article

What Do the Regulators Mean? A Taxonomy of Regulatory Principles for the Use of AI in Financial Services

Mach. Learn. Knowl. Extr. 2024, 6(1), 143-155; https://doi.org/10.3390/make6010008 - 11 Jan 2024

Abstract

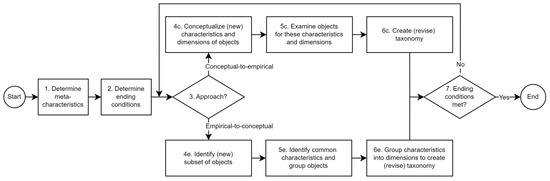

The intended automation in the financial industry creates a natural field of application for artificial intelligence. However, complex and demanding regulatory standards and rapid technological developments pose significant challenges in developing and deploying AI-based services in the finance industry. The regulatory principles defined by financial authorities in Europe need to be structured in a fine-grained way to promote understanding and to ensure customer safety and the quality of AI-based services in the financial industry. This will lead to a better understanding of regulators’ priorities and guide how AI-based services are built. This paper provides a classification pattern with a taxonomy that clarifies the existing European regulatory principles for researchers, regulatory authorities, and financial services companies. Our study can pave the way for developing compliant AI-based services by bringing out the thematic focus of regulatory principles.

(This article belongs to the Special Issue Fairness and Explanation for Trustworthy AI)

Open Access Article

Knowledge Graph Extraction of Business Interactions from News Text for Business Networking Analysis

Mach. Learn. Knowl. Extr. 2024, 6(1), 126-142; https://doi.org/10.3390/make6010007 - 07 Jan 2024

Abstract

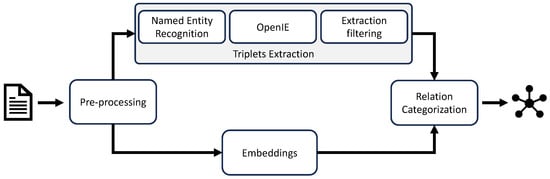

Network representation of data is key to a variety of fields and their applications including trading and business. A major source of data that can be used to build insightful networks is the abundant amount of unstructured text data available through the web. The efforts to turn unstructured text data into a network have spawned different research endeavors, including the simplification of the process. This study presents the design and implementation of TraCER, a pipeline that turns unstructured text data into a graph, targeting the business networking domain. It describes the application of natural language processing techniques used to process the text, as well as the heuristics and learning algorithms that categorize the nodes and the links. The study also presents some simple yet efficient methods for the entity-linking and relation classification steps of the pipeline.

(This article belongs to the Section Network)

Open Access Article

Predicting Wind Comfort in an Urban Area: A Comparison of a Regression- with a Classification-CNN for General Wind Rose Statistics

Mach. Learn. Knowl. Extr. 2024, 6(1), 98-125; https://doi.org/10.3390/make6010006 - 04 Jan 2024

Abstract

Wind comfort is an important factor when new buildings in existing urban areas are planned. It is common practice to use computational fluid dynamics (CFD) simulations to model wind comfort. These simulations are usually time-consuming, making it impossible to explore a high number of different design choices for a new urban development with wind simulations. Data-driven approaches based on simulations have shown great promise, and have recently been used to predict wind comfort in urban areas. These surrogate models could be used in generative design software and would enable the planner to explore a large number of options for a new design. In this paper, we propose a novel machine learning workflow (MLW) for direct wind comfort prediction. The MLW incorporates a regression and a classification U-Net, trained based on CFD simulations. Furthermore, we present an augmentation strategy focusing on generating more training data independent of the underlying wind statistics needed to calculate the wind comfort criterion. We train the models based on different sets of training data and compare the results. All trained models (regression and classification) yield an

(This article belongs to the Topic Applications in Image Analysis and Pattern Recognition)

Open Access Article

A Data Mining Approach for Health Transport Demand

Mach. Learn. Knowl. Extr. 2024, 6(1), 78-97; https://doi.org/10.3390/make6010005 - 04 Jan 2024

Abstract

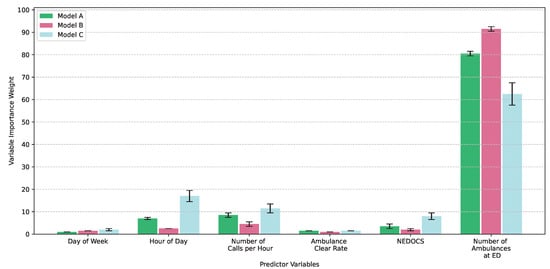

Efficient planning and management of health transport services are crucial for improving accessibility and enhancing the quality of healthcare. This study focuses on the choice of determinant variables in the prediction of health transport demand using data mining and analysis techniques. Specifically, health transport services data from Asturias, spanning a seven-year period, are analyzed with the aim of developing accurate predictive models. The problem at hand requires the handling of large volumes of data and multiple predictor variables, leading to challenges in computational cost and interpretation of the results. Therefore, data mining techniques are applied to identify the most relevant variables in the design of predictive models. This approach allows for reducing the computational cost without sacrificing prediction accuracy. The findings of this study underscore that the selection of significant variables is essential for optimizing medical transport resources and improving the planning of emergency services. With the most relevant variables identified, a balance between prediction accuracy and computational efficiency is achieved. As a result, improved service management is observed to lead to increased accessibility to health services and better resource planning.

(This article belongs to the Section Data)

Open Access Article

Machine Learning for an Enhanced Credit Risk Analysis: A Comparative Study of Loan Approval Prediction Models Integrating Mental Health Data

Mach. Learn. Knowl. Extr. 2024, 6(1), 53-77; https://doi.org/10.3390/make6010004 - 04 Jan 2024

Abstract

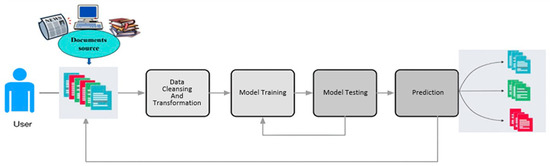

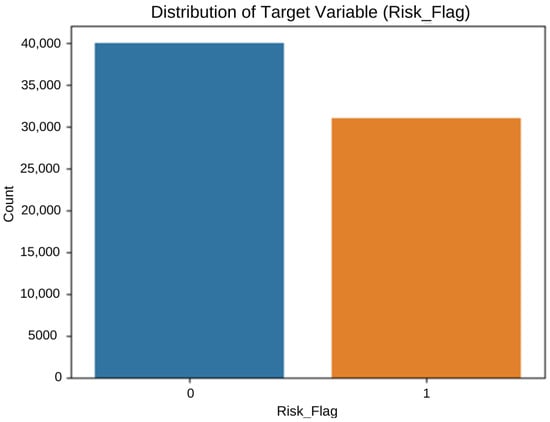

The number of loan requests is growing rapidly worldwide, representing a multi-billion-dollar business in the credit approval industry. Large volumes of data extracted from banking transactions that represent customers’ behavior are available, but processing loan applications is a complex and time-consuming task for banking institutions. In 2022, over 20 million Americans had open loans, totaling USD 178 billion in debt, although over 20% of loan applications were rejected. Numerous statistical methods have been deployed to estimate loan risks, raising the question of whether machine learning techniques can predict the potential risks better. To study the machine learning paradigm in this sector, the mental health dataset and the loan approval dataset, presenting survey results from 1991 individuals, are used as inputs to test the credit risk prediction ability of the chosen machine learning algorithms. Through a comprehensive comparative analysis, this paper shows how the chosen machine learning algorithms can distinguish between normal and risky loan customers who might never pay their debts back. The results show that XGBoost achieves the highest accuracy of 84% on the first dataset, surpassing gradient boosting (83%) and KNN (83%). On the second dataset, random forest achieved the highest accuracy of 85%, followed by decision tree and KNN with 83%. Alongside accuracy, the precision, recall, and overall performance of the algorithms were tested, and a confusion matrix analysis was performed; its numerical results emphasized the superior performance of XGBoost and random forest on the first dataset, and of XGBoost and decision tree on the second dataset. Researchers and practitioners can rely on these findings to inform their model selection process and enhance the accuracy and precision of their classification models.
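
The comparison relies on accuracy, precision, and recall derived from a confusion matrix. As a minimal sketch of these standard definitions for a binary "risky vs. normal" task, the following uses only the standard library; the labels and predictions are made up for illustration and are not the paper's data, and the helper names `confusion` and `metrics` are hypothetical.

```python
from collections import Counter


def confusion(y_true, y_pred, positive="risky"):
    """Count TP, FP, FN, TN for a binary task with the given positive label."""
    c = Counter()
    for t, p in zip(y_true, y_pred):
        if p == positive:
            c["tp" if t == positive else "fp"] += 1
        else:
            c["fn" if t == positive else "tn"] += 1
    return c


def metrics(c):
    """Accuracy, precision, recall, and F1 from confusion counts (0.0 on empty denominators)."""
    total = c["tp"] + c["fp"] + c["fn"] + c["tn"]
    accuracy = (c["tp"] + c["tn"]) / total if total else 0.0
    precision = c["tp"] / (c["tp"] + c["fp"]) if c["tp"] + c["fp"] else 0.0
    recall = c["tp"] / (c["tp"] + c["fn"]) if c["tp"] + c["fn"] else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Illustrative labels only: one risky customer is missed, one normal customer is flagged.
y_true = ["risky", "risky", "risky", "normal", "normal", "normal", "normal", "normal"]
y_pred = ["risky", "risky", "normal", "risky", "normal", "normal", "normal", "normal"]
print(metrics(confusion(y_true, y_pred)))  # accuracy 0.75; precision, recall, F1 all 2/3
```

On imbalanced loan data, accuracy alone can look high even when many risky customers are missed, which is why precision and recall on the risky class are worth reporting alongside it.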

Open Access Article

An Evaluative Baseline for Sentence-Level Semantic Division

Mach. Learn. Knowl. Extr. 2024, 6(1), 41-52; https://doi.org/10.3390/make6010003 - 02 Jan 2024

Abstract

Semantic folding theory (SFT) is an emerging cognitive science theory that aims to explain how the human brain processes and organizes semantic information. The distribution of text into semantic grids is key to SFT. We propose a sentence-level semantic division baseline with 100 grids (SSDB-100), the only dataset we are currently aware of that performs a relevant validation of the sentence-level SFT algorithm, to evaluate the validity of text distribution in semantic grids and divide it using classical division algorithms on SSDB-100. In this article, we describe the construction of SSDB-100. First, a semantic division questionnaire with broad coverage was generated by limiting the uncertainty range of the topics and corpus. Subsequently, through an expert survey, 11 human experts provided feedback. Finally, we analyzed and processed the feedback; the average consistency index for the used feedback was 0.856 after eliminating the invalid feedback. SSDB-100 has 100 semantic grids with clear distinctions between the grids, allowing the dataset to be extended using semantic methods.

(This article belongs to the Section Data)

Open Access Article

Transforming Simulated Data into Experimental Data Using Deep Learning for Vibration-Based Structural Health Monitoring

Mach. Learn. Knowl. Extr. 2024, 6(1), 18-40; https://doi.org/10.3390/make6010002 - 27 Dec 2023

Abstract

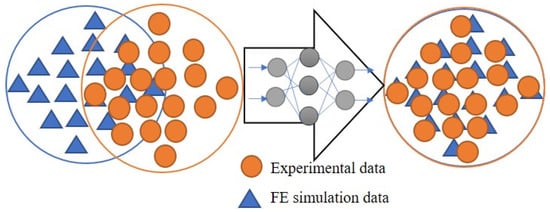

While machine learning (ML) has been quite successful in the field of structural health monitoring (SHM), its practical implementation has been limited. This is because ML model training requires data containing a variety of distinct instances of damage captured from a real structure and the experimental generation of such data is challenging. One way to tackle this issue is by generating training data through numerical simulations. However, simulated data cannot capture the bias and variance of experimental uncertainty. To overcome this problem, this work proposes a deep-learning-based domain transformation method for transforming simulated data to the experimental domain. Use of this technique has been demonstrated for debonding location and size predictions of stiffened panels using a vibration-based method. The results are satisfactory for both debonding location and size prediction. This domain transformation method can be used in any field in which experimental data for training machine-learning models is scarce.

(This article belongs to the Collection Extravaganza Feature Papers on Hot Topics in Machine Learning and Knowledge Extraction)

Open Access Article

Autoencoder-Based Visual Anomaly Localization for Manufacturing Quality Control

Mach. Learn. Knowl. Extr. 2024, 6(1), 1-17; https://doi.org/10.3390/make6010001 - 21 Dec 2023

Abstract

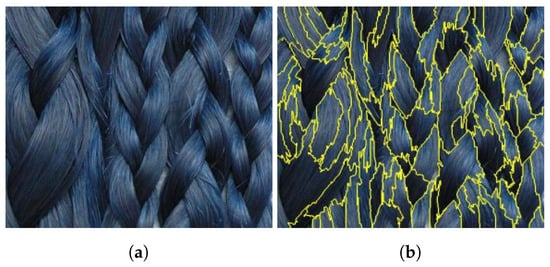

Manufacturing industries require the efficient, high-volume production of high-quality finished goods. In the context of Industry 4.0, visual anomaly detection offers a promising solution for automated, high-precision product quality control. In general, automation based on computer vision is a promising way to prevent bottlenecks at the product quality checkpoint. We considered recent advancements in machine learning to improve visual defect localization, but challenges persist in obtaining a balanced feature set and a database covering the wide variety of defects occurring on the production line. Hence, this paper proposes a defect-localizing autoencoder with unsupervised class selection, performed by k-means clustering of the features extracted from a pretrained VGG16 network. Moreover, the selected classes of defects are augmented with natural wild textures to simulate artificial defects. The study demonstrates the effectiveness of the defect-localizing autoencoder with unsupervised class selection for improving defect detection in manufacturing industries. The proposed methodology shows promising results, with precise and accurate localization of quality defects on melamine-faced boards for the furniture industry. Incorporating artificial defects into the training data shows significant potential for practical implementation in real-world quality control scenarios.
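
The unsupervised class-selection step clusters feature vectors with k-means. The following sketch shows plain k-means (Lloyd's algorithm) in pure Python on small 2-D points standing in for VGG16 features; extracting real VGG16 features, the choice of k, and the deterministic initialization from the first k points are assumptions for illustration, not the paper's settings.

```python
import math


def kmeans(points, k, iters=100):
    """Lloyd's algorithm; centroids are initialized from the first k points (deterministic)."""
    centroids = [list(p) for p in points[:k]]
    labels = None
    for _ in range(iters):
        # Assignment step: each point goes to its nearest centroid (Euclidean distance).
        new_labels = [
            min(range(k), key=lambda j: math.dist(p, centroids[j])) for p in points
        ]
        if new_labels == labels:
            break  # assignments stopped changing: converged
        labels = new_labels
        # Update step: each centroid moves to the mean of its assigned points.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:
                centroids[j] = [sum(dim) / len(members) for dim in zip(*members)]
    return labels, centroids


# Toy "feature vectors": two well-separated groups standing in for defect appearances.
points = [(0.0, 0.0), (10.0, 10.0), (0.2, 0.1), (9.8, 10.1), (0.1, 0.3), (10.2, 9.9)]
labels, centroids = kmeans(points, k=2)
print(labels)  # [0, 1, 0, 1, 0, 1]
```

In the paper's setting, the resulting clusters play the role of the unsupervised defect classes; the abstract does not state how many clusters are formed or which of them are kept.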

(This article belongs to the Topic Applied Computer Vision and Pattern Recognition: 2nd Volume)

Open Access Article

Statistical Analysis of Imbalanced Classification with Training Size Variation and Subsampling on Datasets of Research Papers in Biomedical Literature

Mach. Learn. Knowl. Extr. 2023, 5(4), 1953-1978; https://doi.org/10.3390/make5040095 - 11 Dec 2023

Abstract

The overall purpose of this paper is to demonstrate how data preprocessing, training size variation, and subsampling can dynamically change the performance metrics of imbalanced text classification. The methodology compares two supervised learning classification approaches that differ in feature engineering and data preprocessing, using five machine learning classifiers, five imbalanced sampling techniques, and specified intervals of training and subsampling sizes. Statistical analysis was performed using R and the tidyverse on a dataset of 1000 portable document format (PDF) files divided into five labels, drawn from the World Health Organization Coronavirus Research Downloadable Articles of COVID-19 papers and from PubMed Central databases of non-COVID-19 papers, for binary classification; the performance metrics examined are precision, recall, area under the receiver operating characteristic curve, and accuracy. One approach, which labels rows of sentences based on regular expressions, significantly improved the performance of imbalanced sampling techniques compared with the other approach, which automatically labels the sentences according to how the documents are organized into positive and negative classes; this improvement was verified by t-tests on the performance metrics recorded across iterations. The study demonstrates the effectiveness of ML classifiers and sampling techniques on text classification datasets, with different performance levels and class imbalance issues observed in the manual and automatic methods of data processing.

(This article belongs to the Topic Bioinformatics and Intelligent Information Processing)

Open Access Article

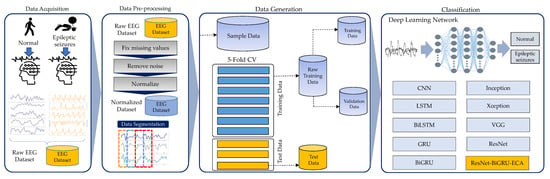

Effective Detection of Epileptic Seizures through EEG Signals Using Deep Learning Approaches

Mach. Learn. Knowl. Extr. 2023, 5(4), 1937-1952; https://doi.org/10.3390/make5040094 - 11 Dec 2023

Abstract

Epileptic seizures are a prevalent neurological condition that impacts a considerable portion of the global population. Timely and precise identification can result in as many as 70% of individuals achieving freedom from seizures. To achieve this, there is a pressing need for smart, automated systems to assist medical professionals in identifying neurological disorders correctly. Previous efforts have utilized raw electroencephalography (EEG) data and machine learning techniques to classify behaviors in patients with epilepsy. However, these studies required expertise in clinical domains such as radiology and clinical procedures for feature extraction. Traditional machine learning for classification relied on manual feature engineering, which limited performance. Deep learning excels at automated feature learning directly from raw data without human effort. For example, deep neural networks now show promise in analyzing raw EEG data to detect seizures, eliminating intensive clinical or engineering needs. Though still emerging, initial studies demonstrate practical applications across medical domains. In this work, we introduce a novel deep residual model called ResNet-BiGRU-ECA, which analyzes brain activity through EEG data to accurately identify epileptic seizures. To evaluate our proposed deep learning model’s efficacy, we used a publicly available benchmark dataset on epilepsy. The results of our experiments demonstrated that our suggested model surpassed both the basic model and cutting-edge deep learning models, achieving an outstanding accuracy rate of 0.998 and the top F1-score of 0.998.

(This article belongs to the Special Issue Sustainable Applications for Machine Learning)

Open Access Article

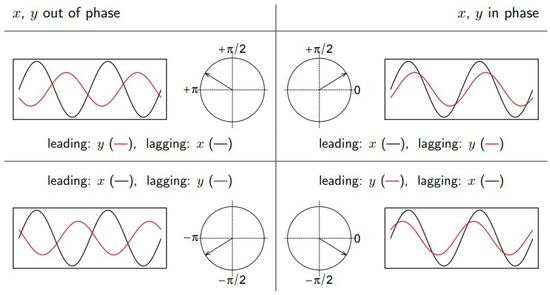

Social Intelligence Mining: Unlocking Insights from X

Mach. Learn. Knowl. Extr. 2023, 5(4), 1921-1936; https://doi.org/10.3390/make5040093 - 11 Dec 2023

Abstract

Social trend mining, situated at the confluence of data science and social research, provides a novel lens through which to examine societal dynamics and emerging trends. This paper explores the intricate landscape of social trend mining, with a specific emphasis on discerning leading and lagging trends. Within this context, our study employs social trend mining techniques to scrutinize X (formerly Twitter) data pertaining to risk management, earthquakes, and disasters. A thorough understanding of how individuals perceive the significance of these pivotal facets of disaster risk management is essential for shaping policies that garner public acceptance. This paper sheds light on the intricacies of public sentiment and provides valuable insights for policymakers and researchers alike.

(This article belongs to the Section Data)

Open Access Article

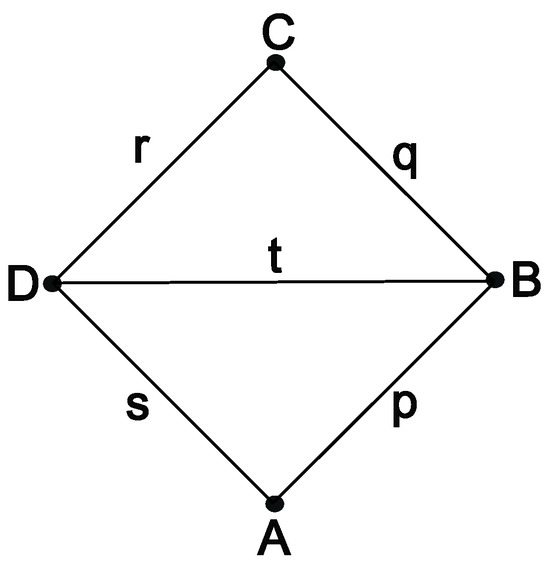

Generalized Permutants and Graph GENEOs

Mach. Learn. Knowl. Extr. 2023, 5(4), 1905-1920; https://doi.org/10.3390/make5040092 - 09 Dec 2023

Abstract

This paper is part of a line of research devoted to developing a compositional and geometric theory of Group Equivariant Non-Expansive Operators (GENEOs) for Geometric Deep Learning. It has two objectives. The first objective is to generalize the notions of permutants and permutant measures, originally defined for the identity of a single “perception pair”, to a map between two such pairs. The second and main objective is to extend the application domain of the whole theory, which arose in the set-theoretical and topological environments, to graphs. This is performed using classical methods of mathematical definitions and arguments. The theoretical outcome is that, both in the case of vertex-weighted and edge-weighted graphs, a coherent theory is developed. Several simple examples show what may be hoped from GENEOs and permutants in graph theory and how they can be built. Rather than being a competitor to other methods in Geometric Deep Learning, this theory is proposed as an approach that can be integrated with such methods.

(This article belongs to the Section Topology)

Open Access Article

Solving Partially Observable 3D-Visual Tasks with Visual Radial Basis Function Network and Proximal Policy Optimization

Mach. Learn. Knowl. Extr. 2023, 5(4), 1888-1904; https://doi.org/10.3390/make5040091 - 01 Dec 2023

Abstract

Visual Reinforcement Learning (RL) has been extensively investigated in recent decades. Existing approaches are often composed of multiple networks requiring massive computational power to solve partially observable tasks from high-dimensional data such as images. State Representation Learning (SRL) has been shown to improve the performance of visual RL by reducing high-dimensional data to compact representations, but it still often relies on deep networks and on the environment. In contrast, we propose a lighter, more generic method to extract sparse and localized features from raw images without training. We achieve this using a Visual Radial Basis Function Network (VRBFN), which offers significant practical advantages, including efficient and accurate training with minimal complexity due to its two linear layers. For real-world applications, its scalability and resilience to noise are essential, as real sensors are subject to change and noise. Unlike CNNs, which may require extensive retraining, this network might only need minor fine-tuning. We test the efficiency of the VRBFN representation in solving different RL tasks using Proximal Policy Optimization (PPO). We present a large study and comparison of our extraction methods with five classical visual RL and SRL approaches on five different first-person partially observable scenarios. We show that this approach presents appealing features such as sparsity and robustness to noise, and that the results obtained when training RL agents are better than those of the other tested methods on four of the five proposed scenarios.

(This article belongs to the Section Learning)

Open Access Article

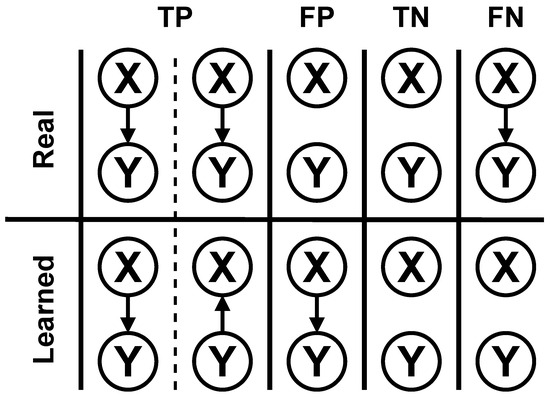

Bayesian Network Structural Learning Using Adaptive Genetic Algorithm with Varying Population Size

Mach. Learn. Knowl. Extr. 2023, 5(4), 1877-1887; https://doi.org/10.3390/make5040090 - 01 Dec 2023

Abstract

A Bayesian network (BN) is a probabilistic graphical model that can model complex and nonlinear relationships. Learning its structure from data is an NP-hard problem because of the size of the search space. One method to perform structural learning is a search-and-score approach, which uses a search algorithm and a structural score. A study comparing 15 algorithms showed that hill climbing (HC) and tabu search (TABU) performed the best overall on the tests. This work performs a deeper analysis of the application of the adaptive genetic algorithm with varying population size (AGAVaPS) to the BN structural learning problem, on which a preliminary test suggested it had the potential to perform well. AGAVaPS is a genetic algorithm that uses the concept of life, where each solution remains in the population for a number of iterations. Each individual also has its own mutation rate, and there is a small probability of undergoing mutation twice. A parameter analysis of AGAVaPS in BN structural learning was performed. AGAVaPS was also compared to HC and TABU on six datasets from the literature, considering F1 score, structural Hamming distance (SHD), balanced scoring function (BSF), Bayesian information criterion (BIC), and execution time. HC and TABU performed essentially the same in all tests. AGAVaPS performed better than the other algorithms for F1 score, SHD, and BIC, showing that it can perform well and is a good choice for BN structural learning.
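
Two of the evaluation criteria, F1 score and structural Hamming distance (SHD), compare a learned network against the true one. The following is a minimal sketch for DAGs given as sets of directed (parent, child) edges. It uses a common simplified SHD that charges one unit per missing, extra, or reversed edge; some definitions compare equivalence classes (CPDAGs) instead, so treat this variant, the directed-edge F1, and the helper names `shd` and `edge_f1` as assumptions rather than the exact measures used in the paper.

```python
def shd(true_edges, learned_edges):
    """Simplified structural Hamming distance between two DAGs.

    Each edge is a (parent, child) tuple. Every unordered node pair whose
    connection differs (missing, extra, or reversed edge) costs exactly 1.
    """
    def by_pair(edges):
        # A DAG has at most one edge per unordered pair, so this mapping is safe.
        return {frozenset(e): e for e in edges}

    t, l = by_pair(true_edges), by_pair(learned_edges)
    return sum(1 for pair in t.keys() | l.keys() if t.get(pair) != l.get(pair))


def edge_f1(true_edges, learned_edges):
    """F1 over directed edges: a learned edge counts only if its direction matches."""
    tp = len(set(true_edges) & set(learned_edges))
    if tp == 0:
        return 0.0
    precision = tp / len(set(learned_edges))
    recall = tp / len(set(true_edges))
    return 2 * precision * recall / (precision + recall)


true_g = {("A", "B"), ("B", "C"), ("A", "C")}
learned_g = {("A", "B"), ("C", "B"), ("C", "D")}  # one reversed, one missing, one extra edge
print(shd(true_g, learned_g), round(edge_f1(true_g, learned_g), 3))  # 3 0.333
```

Lower SHD and higher F1 both indicate a learned structure closer to the true network; the abstract reports AGAVaPS ahead of HC and TABU on both.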

(This article belongs to the Section Learning)

Open Access Article

Analysing Semi-Supervised ConvNet Model Performance with Computation Processes

Mach. Learn. Knowl. Extr. 2023, 5(4), 1848-1876; https://doi.org/10.3390/make5040089 - 29 Nov 2023

Abstract

The rapid development of semi-supervised machine learning (SSML) algorithms has shown enhanced versatility, but pinpointing the primary influencing factors remains a challenge. Historically, deep neural networks (DNNs) have been used to underpin these algorithms, resulting in improved classification precision. This study aims to delve into the performance determinants of SSML models by employing post-hoc explainable artificial intelligence (XAI) methods. By analyzing the components of well-established SSML algorithms and comparing them to newer counterparts, this work redefines semi-supervised computation processes for both data preprocessing and classification. Integrating different types of DNNs, we evaluated the effects of parameter adjustments during training across varied labeled and unlabeled data proportions. Our analysis of 45 experiments showed a notable 8% drop in training loss and a 6.75% enhancement in learning precision when using the Shake-Shake26 classifier with the RemixMatch SSML algorithm. Additionally, our findings suggest a strong positive relationship between the amount of labeled data and training duration, indicating that more labeled data leads to extended training periods, which further influences parameter adjustments in learning processes.

(This article belongs to the Section Learning)

Open Access Article

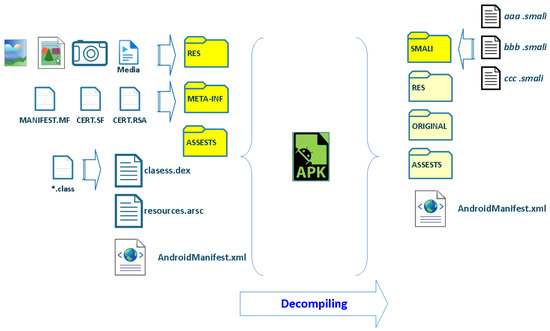

Android Malware Classification Based on Fuzzy Hashing Visualization

Mach. Learn. Knowl. Extr. 2023, 5(4), 1826-1847; https://doi.org/10.3390/make5040088 - 28 Nov 2023

Abstract

The proliferation of Android-based devices has brought about an unprecedented surge in mobile application usage, making the Android ecosystem a prime target for cybercriminals. This paper proposes a new method for Android malware classification that applies a convolutional neural network to images. The research presents a novel approach to transforming an Android Application Package (APK) into a grayscale image. To build the image, the decompiled code of the APK is first preprocessed with natural language processing techniques for text cleaning and extraction, and then represented as a set of fuzzy hashes; the resulting image is composed of n fuzzy hashes that represent the APK. The method was tested on an Android malware dataset of 15,493 samples covering five malware types. It achieved up to 98.24% accuracy in the classification task, an improvement over other methods in the literature.

(This article belongs to the Topic Applied Computer Vision and Pattern Recognition: 2nd Volume)
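The step of turning n fuzzy hashes into one grayscale image can be pictured as stacking hash strings into pixel rows. The sketch below uses a hypothetical encoding (each character's code point as a pixel intensity, a fixed row width, zero padding); the abstract does not state the paper's actual mapping, and computing the fuzzy hashes themselves (for example with an ssdeep-style tool) is left out. The function name and the example strings are made up for illustration.

```python
from typing import List


def hashes_to_grayscale(hashes: List[str], width: int = 64) -> List[List[int]]:
    """Hypothetical encoding: one pixel row per fuzzy hash.

    Each character's code point (clipped to 0-255) becomes a pixel intensity;
    rows are truncated or zero-padded to `width` so the image is rectangular.
    """
    image: List[List[int]] = []
    for digest in hashes:
        row = [min(ord(ch), 255) for ch in digest[:width]]
        row.extend([0] * (width - len(row)))  # pad short hashes with black pixels
        image.append(row)
    return image


# Made-up, ssdeep-style strings standing in for hashes of decompiled APK code.
example_hashes = ["3:AXGBicF:AXGH", "6:kJ2vH:kJ"]
pixels = hashes_to_grayscale(example_hashes, width=16)
print(len(pixels), len(pixels[0]))  # 2 16
```

A fixed width keeps every image the same shape, which a CNN with a fixed input size requires; the number of rows would likewise need to be fixed (padding or truncating to n hashes).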

Open Access Article

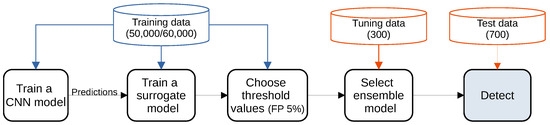

Detecting Adversarial Examples Using Surrogate Models

Mach. Learn. Knowl. Extr. 2023, 5(4), 1796-1825; https://doi.org/10.3390/make5040087 - 27 Nov 2023

Abstract

Deep Learning has enabled significant progress towards more accurate predictions and is increasingly integrated into everyday real-world applications; this is especially true of Convolutional Neural Networks (CNNs) in image analysis. Nevertheless, Deep Learning has been shown to be vulnerable to small, well-crafted perturbations of the input, i.e., adversarial examples. Defending against such attacks is therefore crucial to ensure that these models function properly, especially when autonomous decisions are taken in safety-critical applications such as autonomous vehicles. In this work, shallow machine learning models, such as Logistic Regression and Support Vector Machine, are utilised as surrogates of a CNN, based on the assumption that they would be affected differently by the minute modifications crafted for CNNs. We develop three detection strategies for adversarial examples by analysing differences between the predictions of the surrogate and the CNN model, namely deviation in (i) the prediction, (ii) the distance of the predictions, and (iii) the confidence of the predictions. We consider three different feature spaces: raw images, extracted features, and the activations of the CNN model. Our evaluation shows that our methods achieve state-of-the-art performance compared to other approaches, such as Feature Squeezing, MagNet, PixelDefend, and Subset Scanning, on the MNIST, Fashion-MNIST, and CIFAR-10 datasets, while being robust in the sense that they do not entirely fail against selected single attacks. Further, we evaluate our defence against an adaptive attacker in a grey-box setting.

(This article belongs to the Collection Extravaganza Feature Papers on Hot Topics in Machine Learning and Knowledge Extraction)
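The three detection strategies compare the CNN's output with a surrogate's output for the same input. The sketch below assumes both models already produce class-probability vectors; the L1 distance, the confidence comparison on the CNN's predicted class, the threshold values, and the function name are all illustrative assumptions rather than the paper's exact choices. In practice the thresholds would be calibrated on clean data.

```python
import numpy as np


def surrogate_flags(cnn_probs, surrogate_probs, distance_threshold=0.5, confidence_threshold=0.3):
    """Flag inputs as possibly adversarial using three CNN-vs-surrogate comparisons.

    Both arguments are (n_samples, n_classes) arrays of class probabilities.
    Thresholds are placeholders; in practice they would be tuned on clean data.
    """
    cnn = np.asarray(cnn_probs, dtype=float)
    sur = np.asarray(surrogate_probs, dtype=float)
    cnn_label = cnn.argmax(axis=1)
    rows = np.arange(len(cnn))
    # (i) prediction deviation: the two models choose different classes.
    label_flag = cnn_label != sur.argmax(axis=1)
    # (ii) distance of predictions: L1 distance between probability vectors.
    distance_flag = np.abs(cnn - sur).sum(axis=1) > distance_threshold
    # (iii) confidence deviation: surrogate is much less sure of the CNN's chosen class.
    confidence_flag = (cnn[rows, cnn_label] - sur[rows, cnn_label]) > confidence_threshold
    return label_flag, distance_flag, confidence_flag


cnn_out = [[0.90, 0.10], [0.80, 0.20]]
surrogate_out = [[0.85, 0.15], [0.20, 0.80]]
for name, flags in zip(("label", "distance", "confidence"), surrogate_flags(cnn_out, surrogate_out)):
    print(name, flags.tolist())  # second input is flagged by all three strategies
```

The idea the sketch relies on is the one stated in the abstract: a perturbation crafted against the CNN tends not to transfer to a shallow surrogate, so disagreement between the two becomes a signal.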

Open Access Article

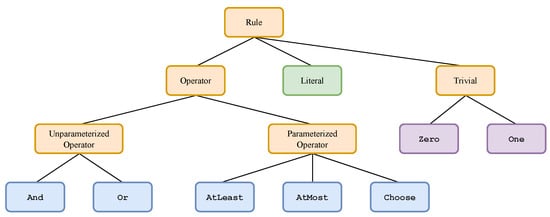

Explainable Artificial Intelligence Using Expressive Boolean Formulas

Mach. Learn. Knowl. Extr. 2023, 5(4), 1760-1795; https://doi.org/10.3390/make5040086 - 24 Nov 2023

Abstract

We propose and implement an interpretable machine learning classification model for Explainable AI (XAI) based on expressive Boolean formulas. Potential applications include credit scoring and diagnosis of medical conditions. The Boolean formula defines a rule with tunable complexity (or interpretability) according to which input data are classified. Such a formula can include any operator that can be applied to one or more Boolean variables, thus providing higher expressivity compared to more rigid rule- and tree-based approaches. The classifier is trained using native local optimization techniques, efficiently searching the space of feasible formulas. Shallow rules can be determined by fast Integer Linear Programming (ILP) or Quadratic Unconstrained Binary Optimization (QUBO) solvers, potentially powered by special-purpose hardware or quantum devices. We combine the expressivity and efficiency of the native local optimizer with the fast operation of these devices by executing non-local moves that optimize over the subtrees of the full Boolean formula. We provide extensive numerical benchmarking results featuring several baselines on well-known public datasets. Based on the results, we find that the native local rule classifier is generally competitive with the other classifiers. The addition of non-local moves achieves similar results with fewer iterations. Therefore, using specialized or quantum hardware could lead to a significant speedup through the rapid proposal of non-local moves.

(This article belongs to the Special Issue Advances in Explainable Artificial Intelligence (XAI): 2nd Edition)
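An expressive Boolean formula can be represented as a small tree whose internal nodes are operators over one or more Boolean inputs. The sketch below is a hypothetical encoding: operators such as AtLeast and AtMost illustrate why such formulas are more expressive than plain And/Or rules, and node count is used as a simple stand-in for complexity. The tuple format and function names are assumptions; training the formula (native local optimization, ILP, or QUBO moves) is not shown.

```python
from typing import Dict, Sequence, Tuple, Union

# Hypothetical encoding: a formula is a feature name or (operator, parameter, children).
Formula = Union[str, Tuple[str, object, Sequence["Formula"]]]


def evaluate(formula: Formula, x: Dict[str, bool]) -> bool:
    """Evaluate a Boolean formula on one sample of binary features."""
    if isinstance(formula, str):
        return x[formula]
    op, param, children = formula
    values = [evaluate(child, x) for child in children]
    if op == "And":
        return all(values)
    if op == "Or":
        return any(values)
    if op == "Not":
        return not values[0]
    if op == "AtLeast":  # true if at least `param` children are true
        return sum(values) >= param
    if op == "AtMost":   # true if at most `param` children are true
        return sum(values) <= param
    raise ValueError(f"unknown operator: {op!r}")


def complexity(formula: Formula) -> int:
    """Node count, used here as a simple proxy for interpretability."""
    if isinstance(formula, str):
        return 1
    return 1 + sum(complexity(child) for child in formula[2])


rule = ("AtLeast", 2, ["a", "b", ("Not", None, ["c"])])
print(evaluate(rule, {"a": True, "b": False, "c": False}))  # True: two of three children hold
print(complexity(rule))                                      # 5
```

Expressing "at least two of three conditions" with only And/Or would need several clauses; a single AtLeast node keeps the rule short, which is the expressivity gain the abstract describes.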

Open Access Article

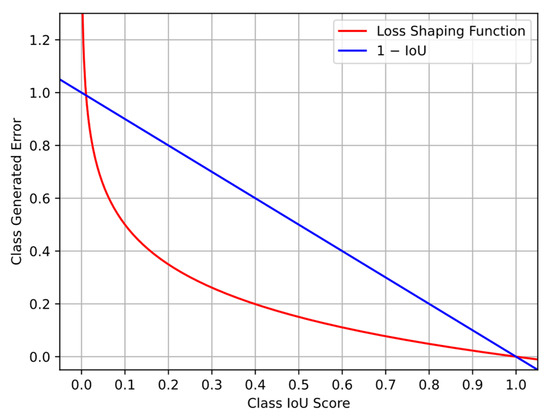

FCIoU: A Targeted Approach for Improving Minority Class Detection in Semantic Segmentation Systems

Mach. Learn. Knowl. Extr. 2023, 5(4), 1746-1759; https://doi.org/10.3390/make5040085 - 23 Nov 2023

Abstract

In this paper, we present a comparative study of modern semantic segmentation loss functions and their impact when applied to state-of-the-art off-road datasets. Class imbalance, inherent in these datasets, poses a significant challenge to off-road terrain semantic segmentation systems. Because many environment classes are extremely sparse and underrepresented, model training becomes inefficient and the model struggles to learn the infrequent minority classes. Loss functions have been designed to take class imbalance into account and counteract this issue. Building on this, we present a novel loss function, Focal Class-based Intersection over Union (FCIoU), which directly targets performance imbalance by optimizing class-based Intersection over Union (IoU). Compared with state-of-the-art targeted loss functions, the new loss function generally increases class-based performance.

(This article belongs to the Section Learning)
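The abstract describes FCIoU as a loss that optimizes class-based IoU with a focal emphasis but does not give its formula. The sketch below shows one plausible way to combine the two ideas: a per-class soft IoU computed from softmax outputs, modulated by a focal-style factor so that poorly segmented classes dominate the loss. This is an illustrative assumption, not the paper's FCIoU definition; `gamma`, `eps`, and the function name are hypothetical.

```python
import numpy as np


def focal_class_iou_loss(probs, targets, gamma=2.0, eps=1e-7):
    """Illustrative focal-weighted soft class-IoU loss (not the paper's exact FCIoU).

    probs:   (n_pixels, n_classes) softmax outputs
    targets: (n_pixels,) integer class labels
    Returns the scalar loss and the per-class soft IoU.
    """
    probs = np.asarray(probs, dtype=float)
    one_hot = np.eye(probs.shape[1])[np.asarray(targets, dtype=int)]
    intersection = (probs * one_hot).sum(axis=0)
    union = (probs + one_hot - probs * one_hot).sum(axis=0)
    iou = (intersection + eps) / (union + eps)  # soft IoU per class, in (0, 1]
    # Focal-style factor (1 - IoU)^gamma lets poorly segmented (often minority) classes dominate.
    per_class = (1.0 - iou) ** gamma * -np.log(iou)
    return float(per_class.mean()), iou


labels = [0, 1]
perfect = [[1.0, 0.0], [0.0, 1.0]]
uncertain = [[0.5, 0.5], [0.5, 0.5]]
print(focal_class_iou_loss(perfect, labels)[0])    # about zero: every class has IoU 1
print(focal_class_iou_loss(uncertain, labels)[0])  # positive: each class has soft IoU 1/3
```

Averaging per class rather than per pixel is what keeps a rare class from being drowned out by frequent ones; the focal factor then further up-weights whichever classes currently have low IoU.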

Topics

Topic in Entropy, Future Internet, Algorithms, Computation, MAKE, MTI

Interactive Artificial Intelligence and Man-Machine Communication

Topic Editors: Christos Troussas, Cleo Sgouropoulou, Akrivi Krouska, Ioannis Voyiatzis, Athanasios Voulodimos

Deadline: 20 February 2024

Topic in Applied Sciences, MAKE, Mathematics, Remote Sensing

Adversarial Machine Learning: Theories and Applications

Topic Editors: Feiran Huang, Shuyuan Lin, Xiaoming Zhang, Yang Lu

Deadline: 31 March 2024

Topic in Applied Sciences, Sensors, J. Imaging, MAKE

Applications in Image Analysis and Pattern Recognition

Topic Editors: Bin Fan, Wenqi Ren

Deadline: 31 August 2024

Topic in Applied Sciences, Electronics, J. Imaging, MAKE, Remote Sensing

Computational Intelligence in Remote Sensing: 2nd Edition

Topic Editors: Yue Wu, Kai Qin, Maoguo Gong, Qiguang Miao

Deadline: 31 December 2024

Special Issues

Special Issue in MAKE

Advances in Explainable Artificial Intelligence (XAI): 2nd Edition

Guest Editor: Luca Longo

Deadline: 29 February 2024

Special Issue in MAKE

Transparency of Deep Neural Networks and Complex Tree Ensembles

Guest Editor: Yoichi Hayashi

Deadline: 31 March 2024

Special Issue in MAKE

Sustainable Applications for Machine Learning

Guest Editors: Danial Javaheri, Hassan Chizari, Amir Masoud Rahmani

Deadline: 2 July 2024

Special Issue in MAKE

Large Language Models: Methods and Applications

Guest Editors: Ahmad Taher Azar, Karin Verspoor, Irena Spasić

Deadline: 31 July 2024

Topical Collections

Topical Collection in MAKE

Extravaganza Feature Papers on Hot Topics in Machine Learning and Knowledge Extraction

Collection Editor: Andreas Holzinger